Research Article

The Knowledge, Attitudes, and Practices of Academic Librarians Toward Open Educational Resources: Developing and Validating the OpenEd-LibKAP Scale

Riley Richards

Assistant Professor, Communication

Oregon Institute of Technology

Klamath Falls, Oregon, United States of America

Email: riley.richards@oit.edu

Jennifer Monnin

Research Support and Engagement Librarian

Assistant University Librarian

Health Sciences Library

West Virginia University

Morgantown, West Virginia, United States of America

Email: jennifer.monnin@mail.wvu.edu

Kristin Whitman

Head of Learning and Information Services

OHSU Library

Oregon Health & Science University

Portland, Oregon, United States of America

Email: whitmank@ohsu.edu

Received: 15 Jan. 2025 Accepted: 21 Oct. 2025

2026 Richards, Monnin, and Whitman. This is an Open Access article distributed under the terms of the Creative Commons-Attribution-Noncommercial-Share Alike License 4.0 International (http://creativecommons.org/licenses/by-nc-sa/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly attributed, not used for commercial purposes, and, if transformed, the resulting work is redistributed under the same or similar license to this one.

DOI: 10.18438/eblip30707

Abstract

Objective – The aims of the open education (OE) movement can be supported by academic librarians, although most librarians have not had formal training on open educational resources (OER). The objective of this study is to design and validate a survey instrument to measure the knowledge, attitudes, and practices (KAP) of academic librarians toward OER to be used in future research projects.

Methods – The Open Education - Librarian Knowledge, Attitudes, and Practices (OpenEd-LibKAP) scale was developed by experienced academic librarians and assessed for content validity by OER subject experts. A pilot study was conducted to assess internal consistency, and a second round of the survey was administered to an international group of current academic librarians. Exploratory factor analysis (EFA) was performed on the results.

Results – The final instrument includes 50 questions, with 22 items in the knowledge domain, 16 items in the attitude domain, and 12 items in the practices domain. The KAP factors positively correlate in an expected manner, with a range from .404 to .591. The individual domains have high markers of reliability, implying a degree of confidence in our findings and future uses of the tool.

Conclusion – The OpenEd-LibKAP Scale developed through this study can be used by library administrators, OER program administrators, librarian researchers, and OER researchers to more accurately measure and assess academic librarians’ competencies, beliefs, and behaviours related to OER.

Introduction

The aims of the open education (OE) movement include the creation of openly licensed learning materials, or open educational resources (OER), that can be freely adopted and shared, making education more accessible and affordable for students. Librarians are well-positioned to participate in the OE movement, as their expertise encompasses copyright knowledge for open licensing, searching expertise for identifying OER for adoption, and assisting with the cataloguing, hosting, and dissemination of OER materials in digital repositories (Okamoto, 2013).

Braddlee and VanScoy (2019) found that librarians’ most-recognized OER-supporting functions include raising awareness on campus, educating faculty about copyright and creative commons licensing, and helping faculty discover resources to adopt in their courses. Librarians also play a role in the collection and curation of available open resources and may create and maintain repositories of OER created by faculty (Braddlee & VanScoy, 2019). Depending on the scope of their role, academic librarians may also have impacts at the institutional level, such as driving policy development or working with institutional OER grant programs.

Despite this potential, it is unknown how much academic librarians in general are aware of OER and relevant OER concepts. To that end, the current study draws on OE and librarian literature to frame and initially propose a social scientific self-report measure to fill in this knowledge gap. Additionally, the current study provides the initial validation of the proposed scale.

Literature Review

While librarians clearly have a role to play in the OE movement, the extent to which academic librarians across the discipline have acquired the relevant knowledge and skills has not been systematically quantified. Some researchers have addressed the topic using instruments created for individual studies, without testing their measures through psychometric processes (i.e., scale development). Various research teams have studied specific geographical groups of librarians, including Nigerian, Scottish, and Ukrainian librarians (Kolesnykova & Matveyeva, 2021; Nwaohiri, 2021; Thompson & Muir, 2020). However, survey validation attempts among these researchers have varied, and the resulting instruments are either not available or not easily generalized to further research designs.

Academic librarians have varied levels of exposure to and knowledge of OER, as OE is not considered a required competency for graduation at most library schools. Therefore, structured professional development may be necessary to help librarians gain necessary skills beyond their graduate programs (Thornton, 2021). Thomson and Peach (2023) described an effort to make OER efforts sustainable in academic libraries through homegrown professional development efforts, with the goal of “integrating the philosophy of open education… into librarians’ everyday work” (p. 253), and characterized this as a necessary precondition for the sustainability of OER efforts within their library system.

According to Larson (2020), researchers are beginning to ask what knowledge and skills librarians should possess to effectively support OER in higher education. As more academic libraries assume OE roles within their institutions, new OER-specific positions are being created within libraries. Larson performed a thematic analysis to determine the skills that academic librarians are expected to possess in order to qualify for these roles, and determined that a standard scope for expected knowledge and skills in an academic OER librarian had not yet emerged. Common themes in position descriptions included scholarly communication skills (such as copyright and licensing expertise) and instructional design experience, which would logically correlate to supporting faculty with OER adoption and open pedagogy.

Professional development opportunities exist for librarians to learn OER skills, but most target interested individuals rather than providing training on an institutional level. For example, the Open Education Network (OEN) (n.d.) runs a “Certificate in Open Education Librarianship” program which aims not only to impart necessary OER knowledge, but also to prepare librarians to grow new initiatives and advocate to stakeholders within their institutions. Since 2019, the OEN program alone has trained librarians at over 275 institutes of higher education. Although individual librarians may be growing their skills through this or similar programs, no validated instrument exists to measure what impact these programs may be having on the level of OER knowledge, attitudes, or practices (KAP) within academic libraries in general.

Surveys related to OER do exist outside the library context. Various companies and consulting firms have created surveys to assess the OER awareness of faculty in higher education settings, such as the reports produced by Bay View Analytics (formerly the Babson Survey Research Group). Although these reports frequently contain OER-related awareness questions, they are not tailored to academic librarians and not primarily intended to gauge faculty members’ knowledge of key topics such as open licensing methods, or participation in practices such as OER advocacy (Bay View Analytics, n.d.; Seaman & Seaman, 2020).

Knowledge, Attitudes, and Practices (KAP)

The KAP framework is often used in health fields to determine a baseline of what is known (knowledge), what is believed (attitudes), and what is done (practices) by which to measure the success of a subsequent educational intervention, e.g., in a pre/posttest experimental research design (Andrade et al., 2020). KAP surveys have also been used to study librarians, as for example in Tummon and McKinnon (2018), who used this lens to investigate the attitudes and practices of librarians toward online privacy topics. This method has also been used to investigate the KAP of university students toward Web 2.0 tools (Matingwina, 2014) and of faculty members toward integrated library tools in higher education settings (Goharinezhad et al., 2012).

A validated KAP survey instrument for use in an academic library setting would be a valuable addition to the literature on librarian expertise in OER and could enable further study of this population. For example, it could be used to investigate the relative levels of KAP within sub-groups, e.g., medical librarians vs. engineering librarians, librarians within institutions of different Carnegie classification types, and so on. Because KAP surveys are used to establish baseline measurements, such an instrument could also be used in a pre/posttest design within a single institution to study the success of educational interventions intended to raise the KAP of its librarians on OER topics.

Aims

The objective of the study is to design and validate a survey instrument to measure the knowledge, attitudes and practices (KAP) of academic librarians toward OER in order to more fully understand the educational needs of academic librarians with respect to OER KAP, either within institutions or across academic library populations.

Methods

To construct and test the intended OpenEd-LibKAP Scale, the team conducted a three-phase process. First, the team identified items and then assessed content validity with the help of subject experts. Second, we conducted a pilot study to assess internal consistency, address any problematic items, and reduce the number of items before full data collection. Third, we conducted a full international study of current librarians to assess the overall factor structure of the measurement. These steps are detailed below.

Phase 1: Item Generation and Content Validity

We identified and defined higher-order ideas (i.e., KAP) and sub-themes within them. The team then used deductive and inductive methods to create the initial set of items, following the best-practice recommendation from Boateng et al. (2018). Deductively generated items were gathered from a literature survey on KAP, OER, and related areas. The goal of item generation was to be inclusive of all sub-fields of librarianship regardless of whether they are traditionally thought of as having a role to play in OER. The team added deductively and inductively identified higher-order ideas and sub-themes into the survey, organizing them into "knowledge," "attitudes," and "practices" as appropriate.

In addition to the inventory of relevant library OER knowledge and practices compiled by Braddlee and VanScoy (2019), the literature survey also included gray literature sources that outline OER professional practices for academic librarians, such as the openly available curriculum from the OEN Certificate in OER Librarianship (Open Education Network, n.d.), and a list of common myths about OER from the Tacoma Community College OER LibGuide (Snoek-Brown, n.d.). Learning outcomes from the certificate and myths from the LibGuide were adapted to KAP questions as appropriate for each topic.

We also generated inductive items from the personal experiences of two members of the author team. These two authors currently work as full-time academic librarians at four-year institutions and support or have supported OER efforts in their roles. One of them is a SPARC Open Education Leadership fellow, has completed the OEN Certificate in OER Librarianship, and started the OER program at a previous four-year institution. Combined, they have 11 years of experience as full-time academic librarians.

For initial review, we generated the scale using a deductive and inductive process. We generated a large number of items per prior scale development suggestions to create double the number of items that may be needed (Boateng et al., 2018; DeVellis, 2017) and to have at least three items per construct (Kline, 2016). Ultimately, the team generated 30 items for knowledge, 28 items for attitude, and 29 items for practices.

Content Validity Review and Results

The team then submitted these 87 items to six expert reviewers. The reviewers included OER program managers, prominent members of the OE research field, and experienced researchers in the academic library research community (see the acknowledgment section below). Due to the large number of items we asked these experts to review, we opted for an open-ended qualitative feedback loop rather than the more common tactic of rating each item on a scale to indicate if they agreed that each item aligned with our desired construct (Boateng et al., 2018).

Feedback from the expert reviewers was mostly positive. However, they provided us with multiple suggestions, which we incorporated. These included: deleting one item, adding ten items, and editing ten items for the knowledge subscale; deleting one item, adding six items, and editing seven items for attitudes; and deleting nine items, adding two items, and editing ten items for practices. This resulted in the scale including 39 items for knowledge, 33 items for attitude, and 22 items for practices.

After completing this initial content validity test, the team completed the Institutional Review Board (IRB) application process. The studies below (phases 2 and 3) were approved by the IRBs at the Oregon Institute of Technology (Protocol No. 2024-02-14-W) and West Virginia University (Protocol No. 2402920042).

Phase 2: Pilot Test

During the pilot test, we set out to test for internal consistency among items. Due to the size of the initial scale, we sought to identify potential items for deletion in order to reduce participant fatigue. Given that confidence intervals for internal consistency become less affected by sample size once samples reach 24-36 participants (Johanson & Brooks, 2010), we targeted a pilot test of the scale on 30 librarians.

We collected data during the Spring of 2024 via an online survey from a convenience sample of 30 librarians, mostly located in the United States (n = 29, 96.7%) at research-intensive (i.e., R1; n = 19, 63.3%) and doctoral-granting institutions (n = 23, 76.7%). Additionally, they mostly self-identified as Caucasian (n = 23, 76.7%), not having a disability (n = 24, 80%), and female (n = 19, 63.3%) rather than male (n = 7, 23.3%). Nearly all participants (n = 29) held an MLS or MLIS, completed between 1972 and 2021, with an average of 15.21 years since graduation (SD = 10.59). Most self-identified as working as a subject liaison (n = 22, 73.3%) and having instructional responsibilities (n = 27, 90%). Most did not have major OER responsibilities (n = 26, 86.7%), did not currently work in an upper-level leadership role (n = 27, 90%), and did not work as a solo librarian (n = 25, 83.3%).

Measures

Instructions for each of the measures, as well as the definitions supplied for the attitude and practice sections, can be found in the Appendices. The instructions and definitions were the same in phases two and three.

Knowledge was assessed with 39 items measured on a 5-point Likert scale (1 = strongly disagree, 5 = strongly agree). Sample items include “I can define open pedagogy” and “I am familiar with OER quality rubrics.”

Attitude was assessed with 33 items, also measured on the same 5-point Likert scale (1 = strongly disagree) as the knowledge items. However, due to the various definitions around OE terms, we provided definitions of key concepts to keep ideas across participants consistent (see Appendix). Attitude items were separated into two blocks, general OE attitudes (n = 15) and attitudes that relate to librarians’ potential roles in connection with OE (n = 18). Each set of questions provided different opening instructions to match general attitudes and position-specific attitudes. General OE items include “conducting research around open educational practices is valuable scholarly work” and “open educational practices promote student success.” Librarian role example items included, “educating instructors about how to search for open content” and “educating instructors about open pedagogy.”

Practice was assessed with 22 items on a 4-point Likert scale (0 = never, 1 = rarely, 2 = occasionally, and 3 = frequently) with an option to indicate the item did not apply to them. We supplied participants with the same definitions of key concepts included above in the attitude scale. Example practice items include, “I remain up to date on current issues in open education as they relate to my work as a librarian,” and “Before I create new materials, I search for open content that I can adapt to my needs.”

Pilot Test Results

To assess internal consistency, we calculated reliability for each proposed knowledge (α = .966), attitudes (α = .865), and practice (α = .948) measure. Reliability scores for each subscale were high, and the results did not suggest that reliability would increase dramatically if any item(s) were deleted. Furthermore, the abnormally high reliability scores suggested that some items may be too similar. Therefore, bivariate correlations among items within each KAP measure were computed to begin weeding out multicollinear items.
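As an illustration of the reliability statistic reported here, the following is a minimal sketch of Cronbach's alpha for a respondents-by-items score matrix. The function name `cronbach_alpha` and the example responses are hypothetical and are not drawn from the study data; the sketch assumes complete cases and is not necessarily how the authors' statistical software computes the coefficient.

```python
import numpy as np

def cronbach_alpha(items):
    """Cronbach's alpha for a respondents-by-items matrix (complete cases only)."""
    x = np.asarray(items, dtype=float)
    k = x.shape[1]                               # number of items in the subscale
    item_variances = x.var(axis=0, ddof=1)       # sample variance of each item
    total_variance = x.sum(axis=1).var(ddof=1)   # variance of the summed scores
    return (k / (k - 1)) * (1.0 - item_variances.sum() / total_variance)

# Hypothetical responses: 5 respondents x 3 Likert items (not study data).
responses = np.array([
    [4, 5, 4],
    [2, 2, 3],
    [5, 5, 5],
    [3, 4, 3],
    [1, 2, 2],
])
alpha = cronbach_alpha(responses)
print(round(alpha, 3))
```

Values close to 1 indicate that the items vary together almost perfectly, which is why very high coefficients can signal redundant items as well as reliable ones.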

Multiple items indicated perfect correlation (r = 1.00, p < .01) between them. Examples include knowledge items “I can define the term open educational resources” and “I can define the term open pedagogy,” attitude items “open educational practices promote diversity,” “open educational practices promote equity,” and “open educational practices promote inclusion,” and practice items “I seek opportunities to present on OER to instructors at departmental meetings” and “I seek opportunities to present on OER to instructors at institutional events.”
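The screening step described above can be sketched as a scan of the inter-item correlation matrix. In this sketch the function name `flag_redundant_pairs`, the item labels, the scores, and the .90 threshold are all illustrative assumptions; the study itself reports acting on perfectly correlated items (r = 1.00).

```python
import numpy as np

def flag_redundant_pairs(scores, labels, threshold=0.90):
    """Return (label_a, label_b, r) for item pairs whose |r| meets the threshold."""
    r = np.corrcoef(np.asarray(scores, dtype=float), rowvar=False)
    flagged = []
    for i in range(r.shape[0]):
        for j in range(i + 1, r.shape[0]):       # upper triangle only
            if abs(r[i, j]) >= threshold:
                flagged.append((labels[i], labels[j], round(float(r[i, j]), 3)))
    return flagged

# Hypothetical pilot-style data: the first two items are answered identically.
scores = np.array([
    [5, 5, 2],
    [4, 4, 5],
    [2, 2, 3],
    [3, 3, 1],
    [1, 1, 4],
])
labels = ["define_oer", "define_open_pedagogy", "quality_rubrics"]
pairs = flag_redundant_pairs(scores, labels)
print(pairs)
```

Flagged pairs are candidates for combining or deleting; the decision itself remains a substantive judgment about item content.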

To address the multicollinearity issue before the full study, perfectly correlated items were combined, or deleted when combining would create double-barreled items. Considering the potential uses of the OpenEd-LibKAP scale, we made items broader or narrower where appropriate. For example, in the context of studying librarians, we see them interacting with OER more than open pedagogy and therefore kept the knowledge OER item over the pedagogy item. The example attitude items (diversity, equity, and inclusion), if combined, would create a triple-barreled item. Considering the other items in the attitudes measure, inclusion was the option most strongly associated with the other items. Finally, the example practice items were combined into a single item (“I hold OER awareness events”) to encompass departmental and institutional events. Additionally, on reflection we judged that “seeking opportunities” is not a practice, whereas “hold(ing)” is. Collectively, we either dropped or combined nine (23%) knowledge, seven (21%) attitude, and four (18%) practice items.

Phase 3: Exploratory Factor Analysis (EFA)

In phase three, we sought to identify the factor structure of the OpenEd-LibKAP scale. We gathered data via an online Qualtrics survey during the Summer and Fall of 2024 and solicited responses through various groups, including five electronic mailing lists (Expert Searching, ACRL interest groups, Medlibs, SPARC, and ASEE), two Discord groups (Open Access and Medlibs Land), and two OE groups (CCCOER and Open Education Network). To be eligible for the survey, participants had to be a librarian working in a higher education setting (someone whose job title is librarian, or someone with a library degree working in another type of higher education setting). Participants did not need to be OER experts in order to participate in the survey. Given the specialized nature of academic librarians, we knew we would not have an ideal sample size for the intended EFA (Barrett & Kline, 1981; Kline, 2016; Memon et al., 2020). Therefore, we set a minimum sample of 150 participants to be in line with the statistical limit for factorability (de Winter et al., 2009; Hinkin, 1995).

Initially, 211 participants entered the online survey. We removed 11 participants for completing less than 10% of the questionnaire. Additionally, we removed one participant for self-identifying as a faculty member rather than a librarian. Participants in the final sample (n = 199) mostly self-identified as female (n = 158, 79.4%), Caucasian (n = 150, 75.4%), not having a disability (n = 139, 69.8%), and having an MLS or MLIS degree (n = 188, 94.5%). They earned their MLS/MLIS between 1973 and 2024, on average 15.08 years ago (SD = 10.34). Participants were mostly working in the United States (n = 164, 82.4%). By institution type, 26.1% (n = 52) worked at research-intensive universities (i.e., R1) and 13.6% (n = 27) at doctoral or professional universities, while 38.2% (n = 76) did not know their institution’s Carnegie classification. Within their current positions, they worked primarily as an academic librarian (n = 180, 90.5%) and subject liaison (n = 129, 64.8%), with instructional responsibilities (n = 156, 78.4%). Additionally, most participants did not serve in an upper-level leadership position (n = 161, 80.9%), have major OER responsibilities (n = 142, 71.4%), or work as a solo librarian (n = 142, 71.4%).

Measures

All measures kept the same 5-point and 4-point Likert scale and participant instructions as discussed in the measures section above in phase 2 and readable in the Appendices.

Knowledge was assessed with 30 items. Attitude was assessed with 26 items evenly split between general open pedagogy attitude and attitude toward the role of librarians in open pedagogy. Lastly, practice was assessed with 18 items.

Results

Exploratory Factor Analysis (EFA)

A priori standards of factor analysis were adopted and tested before advancing to factor analysis, to assess whether the data were suitable for such analysis. These included a Kaiser-Meyer-Olkin (KMO) value > .60, a significant Bartlett’s chi-square, and a correlation matrix including values of .30 or higher (Carpenter, 2018; Pett et al., 2003). A common factor analysis was conducted across all KAP items and indicated good fit (KMO = .855, Bartlett sphericity test χ² = 6390.01, df = 2701, p < .001). Furthermore, upon inspection of the anti-image matrices, all but three items were greater than .6 (Costello & Osborne, 2005), and the solution accounted for 68.34% of the variance. The same analysis was re-run after removing the three items that did not meet anti-image matrix rules. Results still indicated factorability (KMO = .869, Bartlett sphericity test χ² = 6230.67, df = 2701, p < .001) and accounted for 68.50% of the variance. Finally, the initial eigenvalues indicated 12 factors following Kaiser’s (1960) rule of retaining factors with an eigenvalue greater than one. However, it is well documented that Kaiser’s criterion tends to overfactor. Fabrigar and Wegener (2012) argue for determining the number of factors holistically, weighing other statistical tests beyond Kaiser’s criterion together with a theoretical understanding of the underlying latent construct being studied.
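The two factorability checks named above can be sketched with NumPy alone. The function names `bartlett_sphericity` and `kmo_overall` and the simulated data are assumptions for illustration, and the p-value is omitted (it would come from the chi-square distribution in a statistics library). The degrees of freedom for Bartlett's test are p(p − 1)/2, so the 74 items entered in phase three give 74 × 73 / 2 = 2701, the value reported in the text.

```python
import numpy as np

def bartlett_sphericity(data):
    """Bartlett's test statistic and degrees of freedom (p-value not computed)."""
    x = np.asarray(data, dtype=float)
    n, p = x.shape
    r = np.corrcoef(x, rowvar=False)
    _, log_det = np.linalg.slogdet(r)            # log-determinant of the correlation matrix
    chi_square = -(n - 1 - (2 * p + 5) / 6) * log_det
    df = p * (p - 1) // 2
    return chi_square, df

def kmo_overall(data):
    """Overall Kaiser-Meyer-Olkin measure of sampling adequacy."""
    r = np.corrcoef(np.asarray(data, dtype=float), rowvar=False)
    r_inv = np.linalg.inv(r)
    d = np.diag(r_inv)
    partial = -r_inv / np.sqrt(np.outer(d, d))   # partial (anti-image) correlations
    off_diagonal = ~np.eye(r.shape[0], dtype=bool)
    r2 = (r[off_diagonal] ** 2).sum()
    q2 = (partial[off_diagonal] ** 2).sum()
    return r2 / (r2 + q2)

# Simulated one-factor data (300 respondents, 6 items); not study data.
rng = np.random.default_rng(0)
factor = rng.normal(size=(300, 1))
data = 0.8 * factor + 0.6 * rng.normal(size=(300, 6))

chi_square, df = bartlett_sphericity(data)
kmo = kmo_overall(data)
print(round(chi_square, 2), df, round(kmo, 3))
```

A KMO near 1 means the partial correlations are small relative to the raw correlations, which is the pattern expected when items share common factors.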

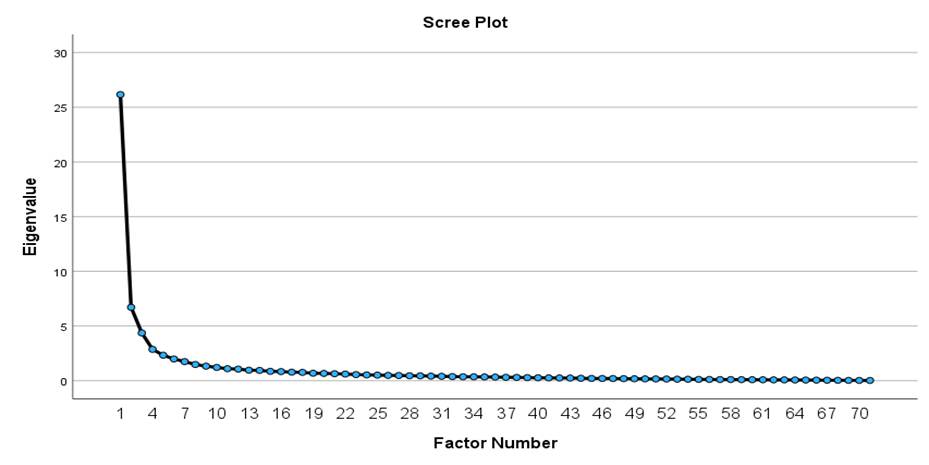

Several alternatives have been developed to counter Kaiser’s rule. These include the scree test, parallel analysis, and the minimum average partial (MAP) test (Carpenter, 2018; William et al., 2010). The scree test visualizes eigenvalues relative to one another rather than against an absolute value, as in Kaiser’s rule (DeVellis, 2017). Generally, one looks for the elbow, or the point where the curve begins to flatten (Cattell, 1966). The scree test results indicated three factors (see Figure 1). Parallel analysis is often superior at identifying the ideal number of factors (Henson & Roberts, 2006). Parallel analysis was conducted using eigenvalues at the 95th percentile over 1,000 permuted samples of the same size as the full sample. Results of the parallel analysis indicated three factors with eigenvalues exceeding those obtained by chance. Finally, the MAP test partials successive factors out of the correlation matrix and examines the partial correlations that remain between items. The average of these squared partial correlations generally decreases as shared variance is accounted for, until it begins to increase, indicating that additional factors are capturing mostly measurement error. Results of the MAP test indicated nine factors.
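The logic of permutation-based parallel analysis can be sketched as follows. This is an illustrative reconstruction, not the authors' procedure: the function name `parallel_analysis` and the simulated data are hypothetical, the sketch uses eigenvalues of the full (unreduced) correlation matrix, assumes complete cases, and runs 200 permutations rather than 1,000 to keep the example fast.

```python
import numpy as np

def parallel_analysis(data, n_iter=1000, percentile=95, seed=0):
    """Count leading factors whose eigenvalues exceed permuted-data eigenvalues."""
    rng = np.random.default_rng(seed)
    x = np.asarray(data, dtype=float)
    n, p = x.shape
    # Observed eigenvalues, largest first.
    observed = np.linalg.eigvalsh(np.corrcoef(x, rowvar=False))[::-1]
    simulated = np.empty((n_iter, p))
    for i in range(n_iter):
        # Shuffle each column independently to break inter-item correlations.
        shuffled = np.column_stack([rng.permutation(x[:, j]) for j in range(p)])
        simulated[i] = np.linalg.eigvalsh(np.corrcoef(shuffled, rowvar=False))[::-1]
    threshold = np.percentile(simulated, percentile, axis=0)
    exceeds = observed > threshold
    # Retain factors up to the first eigenvalue that fails to beat chance.
    n_factors = p if exceeds.all() else int(np.argmin(exceeds))
    return n_factors, observed, threshold

# Simulated data with three underlying factors, four items each (not study data).
rng = np.random.default_rng(1)
factors = rng.normal(size=(200, 3))
loadings = np.kron(np.eye(3), np.ones((1, 4))) * 0.8     # shape (3, 12)
data = factors @ loadings + 0.6 * rng.normal(size=(200, 12))

n_factors, observed, threshold = parallel_analysis(data, n_iter=200)
print(n_factors)
```

Because the comparison is against eigenvalues from data with no real structure, the method is less prone to overfactoring than a fixed cutoff of one.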

Figure 1

EFA scree plot.

Theoretically, we predicted three factors (i.e., KAP), and the scree test and parallel analysis also supported three factors. However, we did complete two EFAs forcing the data to fit 12 (Kaiser’s criterion) and 9 (MAP test) factors to rule out competing ideas. In both cases, multiple factors had single items or items that cross-loaded onto two or more factors. Consequently, an EFA was conducted to assess the impact of forcing the data to fit a three-factor model (Carpenter, 2018). Specifically, we used principal axis factoring (PAF) with a promax rotation. We chose PAF over principal components analysis (PCA) because PAF is best suited for smaller sample sizes, and because PCA includes error variance within the retained components, often leading to issues in follow-up sampling (i.e., confirmatory factor analysis; Carpenter, 2018). Promax, an oblique rotation, was used to allow the underlying factors to correlate. Additionally, promax was chosen over oblimin, another oblique rotation, as it is more robust (Thompson, 2004). Finally, we used a minimum factor loading of .4 based on common usage in the literature and the dominant recommendation (Raykov & Marcoulides, 2011; Stevens, 2009).
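To make the extraction step concrete, the following is a minimal sketch of iterated principal axis factoring on a correlation matrix. It is a simplified illustration under stated assumptions: the function name `principal_axis_factoring` is hypothetical, initial communalities are squared multiple correlations, and no rotation is applied, so the promax step used in the study is not reproduced here. The example matrix is constructed, not taken from the study.

```python
import numpy as np

def principal_axis_factoring(r, n_factors, max_iter=200, tol=1e-6):
    """Unrotated principal axis factoring on a correlation matrix."""
    r = np.asarray(r, dtype=float)
    # Initial communality estimates: squared multiple correlations.
    communalities = 1.0 - 1.0 / np.diag(np.linalg.inv(r))
    for _ in range(max_iter):
        reduced = r.copy()
        np.fill_diagonal(reduced, communalities)         # replace the unit diagonal
        eigenvalues, eigenvectors = np.linalg.eigh(reduced)
        order = np.argsort(eigenvalues)[::-1][:n_factors]
        retained = np.clip(eigenvalues[order], 0.0, None)  # guard against negatives
        loadings = eigenvectors[:, order] * np.sqrt(retained)
        updated = (loadings ** 2).sum(axis=1)
        converged = np.max(np.abs(updated - communalities)) < tol
        communalities = updated
        if converged:
            break
    return loadings, communalities

# Constructed example: four items that each load .8 on a single factor,
# so every off-diagonal correlation is .8 * .8 = .64.
r = np.full((4, 4), 0.64)
np.fill_diagonal(r, 1.0)
loadings, communalities = principal_axis_factoring(r, n_factors=1)
print(np.round(np.abs(loadings).ravel(), 3))
```

Unlike PCA, the diagonal of the analyzed matrix holds communality estimates rather than ones, so only shared variance contributes to the extracted factors. The sign of each loading column is arbitrary, which is why the example prints absolute values.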

We considered empirical evidence, theory, and prior literature related to KAP and OER before addressing problematic items. We addressed these items sequentially, one by one, and after each modification we re-ran the same EFA to interpret the results and identify next steps. We started with the deletion of items that did not load on any factor, beginning with the theoretical factor with the most items (knowledge). We next deleted items that had significant cross-loadings, starting with the highest. Collectively, nine items were deleted for not loading on a factor, nine items were deleted for cross-loading, two knowledge items were deleted for loading on the practice factor, and one practice item was deleted for loading on the knowledge factor. For example, one of the incorrectly loaded knowledge items was “I understand common challenges in creating OER metadata.” Knowing the challenges likely correlates with the ability to address those common challenges, but for the purpose of this KAP measurement we opted to delete it.
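The item-screening rules described above can be sketched as a simple classification of a rotated loading matrix. The function name `classify_items`, the item labels, and the loadings below are hypothetical. The sketch also assumes that a cross-loading means meeting the .4 cutoff on two or more factors, which is one common operationalization and may differ from the criterion the authors applied.

```python
import numpy as np

def classify_items(pattern, labels, cutoff=0.40):
    """Label each item by where its absolute loadings meet the cutoff."""
    pattern = np.abs(np.asarray(pattern, dtype=float))
    result = {}
    for label, row in zip(labels, pattern):
        salient = np.flatnonzero(row >= cutoff)   # factors with a salient loading
        if salient.size == 0:
            result[label] = "no salient loading"
        elif salient.size > 1:
            result[label] = "cross-loading"
        else:
            result[label] = f"factor {salient[0] + 1}"
    return result

# Hypothetical loadings on three factors (knowledge, attitudes, practices).
pattern = [
    [0.82, 0.05, 0.10],   # loads cleanly on factor 1
    [0.12, 0.31, 0.22],   # fails the cutoff everywhere
    [0.45, 0.02, 0.51],   # meets the cutoff on two factors
    [0.03, 0.74, -0.08],  # loads cleanly on factor 2
]
labels = ["item_a", "item_b", "item_c", "item_d"]
status = classify_items(pattern, labels)
print(status)
```

An item that loads cleanly but on a factor other than its intended domain, as with the metadata example above, would still need to be checked against the theoretical model by hand.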

Ultimately, a three-factor model following the theoretical KAP model was adopted. The first factor on knowledge included broad ideas such as “I can define the term open educational resources (OER)” to more localized knowledge such as “I am aware of OER resources my institution offers, or lack thereof.” The second factor on attitudes included positive values such as “open educational practices promote student success” and the attitudes toward the role librarians should play when it comes to OER, such as believing librarians should be “educating instructors about the equity implications of OER.” The third factor, practices, included sub themes of direct educational efforts such as “I seek opportunities to present on OER to instructors at institutional events” and indirect support efforts such as “I lobby for more OER funding beyond my institution (e.g., state or federal funds).” See Table 1 for the finalized retained items and factor loadings.

Finally, the three factors correlated positively and significantly (p < .001), with values ranging from .404 to .591 (see Table 2), and each factor showed excellent reliability (α > .90; see Table 1).

Table 1

Factor Loadings and Descriptive Statistics for OpenEd-LibKAP Scale

| Original Item No. | Item | M | SD | Cronbach’s α | Factor 1 loading | Factor 2 loading | Factor 3 loading |
|---|---|---|---|---|---|---|---|
| | Factor 1: Knowledge | 3.96 | 0.90 | .963 | | | |
| 1 | I can define the term “open educational resources” (OER) | 4.64 | 0.72 | | .823 | | |
| 2 | I can explain the link between textbook affordability and student success. | 4.54 | 0.76 | | .721 | | |
| 4 | I know how to assign Creative Commons licenses to my own work. | 4.05 | 1.17 | | .686 | | |
| 5 | I know how to help others apply Creative Commons licenses to their work. | 3.91 | 1.30 | | .690 | | |
| 6 | I can explain how Creative Commons licenses enable the open education movement. | 4.31 | 1.04 | | .756 | | |
| 7 | I know how to find open resources by searching OER repositories. | 4.27 | 1.04 | | .835 | | |
| 8 | I know how to find open textbooks that have been reviewed for quality. | 3.89 | 1.23 | | .763 | | |
| 9 | I know how to use the built-in features of search engines like Google, YouTube, and Flickr to limit search results to materials with open licenses. | 3.82 | 1.33 | | .609 | | |
| 10 | I can educate instructors on how to create openly licensed content. | 3.52 | 1.43 | | .677 | | |
| 11 | I can educate instructors on how to remix openly licensed content. | 3.41 | 1.41 | | .668 | | |
| 14 | I am familiar with at least one OER publishing platform. | 4.10 | 1.24 | | .810 | | |
| 17 | I can provide instructors with examples of open pedagogy. | 3.73 | 1.33 | | .757 | | |
| 18 | I understand how OER are used to engage in open pedagogical practices. | 3.86 | 1.24 | | .766 | | |
| 19 | I know how to help instructors use open pedagogical practices in their classroom. | 3.31 | 1.32 | | .644 | | |
| 20 | I understand the difference between using OER in the classroom and implementing open pedagogical practices. | 3.35 | 1.47 | | .644 | | |
| 21 | I can explain why OER enables the creation of more diverse course materials. | 4.06 | 1.16 | | .738 | | |
| 22 | I can explain why OER provides a more equitable learning environment for students. | 4.39 | 0.96 | | .806 | | |
| 23 | I am familiar with the scholarly literature on the efficacy of OER in decreasing drop, fail, and withdrawal rates among underserved student populations (e.g., first generation, low socioeconomic status, underrepresented minority students, etc.) | 3.64 | 1.34 | | .641 | | |
| 24 | I am aware of OER resources my institution offers, or lack thereof. | 4.15 | 1.14 | | .785 | | |
| 25 | I am aware of OER resources offered by groups outside my institution (e.g., state, regional, consortial) | 3.80 | 1.26 | | .705 | | |
| 28 | I know where to go to ask questions about OER. | 4.18 | 1.15 | | .743 | | |
| 29 | I know where to refer instructors seeking support for adopting, adapting, or creating OER at my institution. | 4.08 | 1.28 | | .658 | | |
| | Factor 2: Attitudes | 4.41 | 0.58 | .928 | | | |
| 41 | Conducting research around open educational practices is valuable scholarly work. | 4.68 | 0.73 | | | .641 | |
| 42 | Open educational practices promote student success. | 4.65 | 0.70 | | | .542 | |
| 43 | Open educational practices promote inclusion. | 4.68 | 0.66 | | | .539 | |
| 44 | Educating instructors about copyright and Creative Commons licenses | 4.63 | 0.62 | | | .595 | |
| 45 | Educating instructors about how to search for open content. | 4.71 | 0.57 | | | .600 | |
| 46 | Educating instructors about open pedagogy. | 4.00 | 1.00 | | | .619 | |
| 47 | Educating instructors about the equity implications of OER. | 4.32 | 0.92 | | | .740 | |
| 48 | Helping instructors adopt OER materials. | 4.40 | 0.90 | | | .703 | |
| 49 | Helping instructors create OER materials. | 3.86 | 1.10 | | | .654 | |
| 50 | Helping instructors identify appropriate OER publishing platforms. | 4.43 | 0.76 | | | .647 | |
| 51 | Cataloging OER | 4.63 | 0.70 | | | .717 | |
| 52 | Creating metadata for OER | 4.58 | 0.69 | | | .741 | |
| 53 | Curating lists of OER for discovery on the open web (e.g., LibGuides, wikis, Open Science Framework) | 4.51 | 0.81 | | | .666 | |
| 54 | Submitting OER to repositories. | 4.15 | 1.00 | | | .651 | |
| 55 | Advocating for funding or other support for OER programs/initiatives. | 4.22 | 1.00 | | | .782 | |
| 56 | Conducting institutional OER needs assessments. | 4.11 | 1.03 | | | .763 | |
| | Factor 3: Practices | 1.34 | 0.91 | .946 | | | |
| 60 | I disseminate opportunities around open education to instructors (e.g., professional development, funding, scholarly opportunities). | 1.74 | 1.00 | | | | .604 |
| 61 | I seek opportunities to present on OER to instructors at institutional events. | 1.49 | 1.06 | | | | .711 |

65 |

I create metadata for open content (e.g., when cataloging, publishing, or submitting it to an OER or institutional repository). |

1.23 |

1.16 |

|

|

|

.483 |

|

66 |

When my colleagues create open content, I encourage it to be placed into an appropriate repository. |

1.96 |

1.10 |

|

|

|

.520 |

|

67 |

I encourage participation in open pedagogical activities offered at the disciplinary level, such as NNLM’s “Cite NLM” Wikipedia edit-athon |

1.10 |

0.97 |

|

|

|

.603 |

|

68 |

I advocate for funding and other support for my library’s OER initiatives. |

1.87 |

1.07 |

|

|

|

.743 |

|

69 |

I communicate with library leadership or institutional administration about the benefits of OER. |

1.92 |

1.10 |

|

|

|

.705 |

|

70 |

I lobby for more OER funding beyond my institution (e.g., state or federal funds). |

0.97 |

1.13 |

|

|

|

.849 |

|

71 |

I hold OER awareness events. |

1.36 |

1.16 |

|

|

|

.765 |

|

72 |

I work with student government organizations on OER initiatives. |

0.89 |

1.07 |

|

|

|

.846 |

|

73 |

I work within my institution to raise awareness about the benefits of including OER in promotion and tenure policies. |

1.36 |

1.10 |

|

|

|

.794 |

|

74 |

I work with my institution’s bookstore and/or registrar on initiatives to allow students to see textbook costs when registering for courses. |

1.11 |

1.24 |

|

|

|

.772 |

|

Note. Extraction: principal axis factoring. Rotation: promax.

Table 2

Correlation Matrix for Study Variables After Phase Three

| | 1 | 2 | 3 |
|---|---|---|---|
| 1. Knowledge | - | | |
| 2. Attitude | .407 | - | |
| 3. Practice | .591 | .404 | - |

Note. All correlation coefficients significant at the p < .001 level.

Discussion

As mentioned above, academic librarians are increasingly involved in OER and supporting the aims of the OER movement. The lack of a validated instrument to measure the KAP of librarians was a problem we sought to address with this study. Through three distinct phases, we developed the OpenEd-LibKAP scale. The scale began with 87 items, increasing to 94 based on expert reviewers’ comments in phase one, with 20 items removed after phase two, and 24 items removed after phase three. The final instrument includes 50 questions, with 22 items in the knowledge domain, 16 items in the attitude domain, and 12 items in the practices domain. The KAP factors positively correlate in an expected manner, with a range from .404 to .591.

The survey validation results presented here demonstrate that the OpenEd-LibKAP Scale performs well. The individual domains show high reliability, which supports confidence in our findings and in future uses of the tool. Random error appears minimal, suggesting that the validation results are unlikely to be due to chance.
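Readers who collect new responses with the scale may want to check subscale reliability on their own data. The following is a minimal sketch, assuming reliability is summarized with Cronbach's alpha (this section does not restate which statistic was used). The function name `cronbach_alpha` and the demo responses are hypothetical and are not data from this study.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondent rows.

    Each row is a list of k item scores from one respondent.
    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores))
    """
    k = len(responses[0])
    if k < 2 or len(responses) < 2:
        raise ValueError("Need at least two items and two respondents.")
    if any(len(row) != k for row in responses):
        raise ValueError("Every respondent must answer the same items.")

    item_columns = list(zip(*responses))          # one tuple per item
    item_variance_sum = sum(variance(col) for col in item_columns)
    total_variance = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - item_variance_sum / total_variance)


# Hypothetical responses (5 respondents x 3 items), for illustration only.
demo_responses = [
    [5, 4, 5],
    [4, 4, 4],
    [2, 3, 2],
    [3, 3, 4],
    [1, 2, 1],
]
alpha = cronbach_alpha(demo_responses)
print(f"alpha = {alpha:.3f}")
```

Sample variance is used for both the items and the total score so that the ratio is consistent; respondents with missing answers would need to be handled before calling the function.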

Librarians or administrators responsible for the professional development of their staff can use this tool to help assess a newly established program, enhance an existing program, or identify and systematically increase the competencies of their librarians in OER. OER training or professional development opportunities can be created or recommended based on the survey results.

In addition, librarian and/or OER researchers can use the OpenEd-LibKAP Scale to more accurately measure and assess librarian KAP related to OER and provide evidence-based recommendations, as well as measure the relationship between academic librarians' knowledge of OER, attitudes toward OER, and actual practices in line with the aims of the open movement. Evidence-based recommendations arising from the tool might include developing training targeted to address specific knowledge deficiencies or promote specific practices.

We sought to cover as many unique domains of librarianship as possible while developing the OpenEd-LibKAP Scale. The authors kept in mind their own experiences as well as Braddlee and VanScoy's (2019) fourteen unique support roles for academic librarians during the development stage of the tool. As such, our final survey includes questions geared toward the subject specialist, the metadata librarian, librarian curators, campus advocates, and OER librarians, to name a few specialties included in the tool. This broad inclusion provides a unique opportunity for library administrators interested in assessing the KAP of all library workers at their library. With ever-shrinking library budgets, more institutions find sustainability in homegrown professional development efforts (Thompson & Peach, 2023). This is even more true of OER competencies, which are not yet a requirement for library school graduation (Thornton, 2021). While a validated tool is particularly useful to OER researchers, the spectrum of unique librarianship domains included in the OpenEd-LibKAP Scale provides a method of evidence-based assessment for a local professional development program. Administrators could survey their library using the OpenEd-LibKAP Scale, provide an OER competencies professional development program, then reassess their library using the scale. Reassessments could take place immediately following the program as well as several months to years later, providing a better measure of the long-term effect of the intervention.
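The survey, train, and reassess cycle described above can be summarized with a simple paired comparison. The sketch below is one possible approach, not a procedure prescribed by this study: it assumes each librarian has a subscale score before and after the program, and it reports the mean change together with a standardized paired effect size (mean difference divided by the standard deviation of the differences). The function name `paired_change` and all scores are hypothetical.

```python
from statistics import mean, stdev


def paired_change(pre_scores, post_scores):
    """Summarize change between two administrations for the same people.

    Returns (mean difference, SD of differences, standardized effect size).
    Scores must be in the same respondent order in both lists.
    """
    if len(pre_scores) != len(post_scores):
        raise ValueError("Pre and post lists must have the same length.")
    if len(pre_scores) < 2:
        raise ValueError("Need at least two paired respondents.")

    differences = [post - pre for pre, post in zip(pre_scores, post_scores)]
    mean_difference = mean(differences)
    sd_difference = stdev(differences)
    # Effect size is undefined when every respondent changed by the same amount.
    effect_size = mean_difference / sd_difference if sd_difference > 0 else float("nan")
    return mean_difference, sd_difference, effect_size


# Hypothetical knowledge subscale means for five librarians.
pre_knowledge = [3.1, 2.8, 4.0, 3.5, 2.9]
post_knowledge = [3.9, 3.0, 4.2, 4.1, 3.6]

mean_diff, sd_diff, d_z = paired_change(pre_knowledge, post_knowledge)
print(f"mean change = {mean_diff:.2f}, SD = {sd_diff:.2f}, effect size = {d_z:.2f}")
```

The same function could be called again for a later follow-up (for example, pre-program scores against scores collected several months afterward) to compare short-term and long-term change.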

Additionally, the OpenEd-LibKAP Scale includes three independent yet related subscales: knowledge (what is known), attitudes (what is believed), and practices (what is done). Because the scale includes all three domains, researchers can measure the interplay between what is known about OER, what is believed about OER, and what a librarian actually incorporates into their practice as a result of OER. All three measures will provide researchers a unique view into the KAP of librarian subgroups across OER topics. The inclusion of all three domains also allows researchers to further identify if and how the profession's value of access to information is embodied in the everyday work of practicing librarians. Because access to information is an important value in librarianship, an examination of this type would provide a unique insight into the discipline as a whole and allow for a profession-wide reflection on professional values as they relate to OER and the aims of the open movement more broadly. Alternatively, the OpenEd-LibKAP subscales can be separated and used independently, allowing librarian or OER researchers, library administrators, or other users of the Scale to assess an individual domain as opposed to all three collectively.
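As an illustration of using the subscales together or separately, the sketch below scores whichever subscales a respondent completed. It rests on two assumptions that this article does not prescribe: each subscale is scored as the mean of its items, and "Not Applicable" responses on the practices subscale are skipped rather than counted as zero. The names `subscale_score` and `score_respondent`, the `"N/A"` marker, and the example respondent are hypothetical.

```python
from statistics import mean

# Item counts per subscale, as reported for the final instrument.
SUBSCALE_LENGTHS = {"knowledge": 22, "attitudes": 16, "practices": 12}
NOT_APPLICABLE = "N/A"  # assumed marker for the practices "Not Applicable" option


def subscale_score(items):
    """Return the mean of answered items, or None if every item is N/A."""
    answered = [value for value in items if value != NOT_APPLICABLE]
    return mean(answered) if answered else None


def score_respondent(answers):
    """Score whichever subscales are present in `answers` (a dict of lists)."""
    scores = {}
    for name, items in answers.items():
        expected = SUBSCALE_LENGTHS[name]
        if len(items) != expected:
            raise ValueError(f"{name}: expected {expected} items, got {len(items)}")
        scores[name] = subscale_score(items)
    return scores


# Hypothetical respondent, for illustration only.
respondent = {
    "knowledge": [4] * 22,              # 1-5 agreement scale
    "attitudes": [5] * 16,              # 1-5 agreement scale
    "practices": [2, 1, "N/A", 3] * 3,  # 0-3 frequency scale plus N/A
}
scores = score_respondent(respondent)

# A single subscale can be scored on its own.
attitudes_only = score_respondent({"attitudes": [4] * 16})
print(scores, attitudes_only)
```

Skipping "Not Applicable" responses keeps out-of-scope duties from lowering a librarian's practices score; a study that treats them differently would need to change `subscale_score` accordingly.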

Limitations

There are a few limitations to our study. One such limitation is the small number of responses received in phase three. Our final sample included 199 valid responses, a large number for the field of librarianship (Federer, 2018; Glynn & Wu, 2003; Scott et al., 2021) but a small sample compared to other EFA studies outside of the librarian context (Kline, 2016). While we did ensure our participant sample minimum was in line with the statistical limit for factorability (de Winter et al., 2009; Hinkin, 1995), our limited valid participant responses did not allow for a confirmatory factor analysis (CFA) to be performed. Future studies using the OpenEd-LibKAP Scale would do well to perform an additional validation test (i.e., CFA) of their responses before completing their final data analysis. Another potential limitation is the generalizability of the tool: 82.4% (n = 164) of our phase three respondents were based in libraries within the United States (US) and 8% (n = 16) were based in Canada. It is unclear how many of the 5.5% (n = 11) who identified their country as "Other, please write in:" are in working situations that would closely mirror US contexts. This may impact the generalizability of our results. Additionally, the OpenEd-LibKAP Scale has participants self-assess their KAP as opposed to being an objective measure of assessment. As a result, participant KAP may not be perfectly captured by our tool.

Conclusion

Statistical analysis of the OpenEd-LibKAP Scale supports the quality of the tool: the three subscales show high reliability, and the KAP factors correlate positively as expected. As librarians are increasingly called upon to support the aims of the OER movement, this questionnaire can be used to assess librarian competencies, beliefs, and behaviors related to OER and the aims of the OER movement. It is useful in a pre/posttest experimental research design as well as for internal use by individual institutions or library consortia and can measure the impact of the OER movement across library types, sizes, and Carnegie classifications.

Recommended Reading

We encourage those interested in learning more about EFA to read DeVellis (2017) and Kline (2016) for a holistic overview and Byrne (2016) and Field (2013) for a mathematical overview. For a review of CFA, see DeVellis (2017).

Author Contributions

Riley Richards: Data curation, Formal analysis, Methodology, Writing - original draft, Software, Visualization, Writing - review & editing

Jenn Monnin: Conceptualization, Investigation, Writing - original draft, Writing - review & editing

Kristin Whitman: Conceptualization, Investigation, Writing - original draft, Writing - review & editing

References

Andrade, C., Menon, V., Ameen, S., & Kumar Praharaj, S. (2020). Designing and conducting knowledge, attitude, and practice surveys in psychiatry: Practical guidance. Indian Journal of Psychological Medicine, 42(5), 478–481. https://doi.org/10.1177/0253717620946111

Barrett, P. T., & Kline, P. (1981). The observation to variable ratio in factor analysis. Personality Study & Group Behaviour, 1(1), 23–33. https://psycnet.apa.org/record/1982-20212-001

Bay View Analytics. (n.d.). Bay View Analytics publications. Retrieved January 12, 2025, from https://www.bayviewanalytics.com/publications.html

Boateng, G. O., Neilands, T. B., Frongillo, E. A., Melgar-Quinonez, H. R., & Young, S. L. (2018). Best practices for developing and validating scales for health, social, and behavioral research: A primer. Frontiers in Public Health, 6, 1–18. https://doi.org/10.3389/fpubh.2018.00149

Braddlee & VanScoy, A. (2019). Bridging the chasm: Faculty support roles for academic librarians in the adoption of open educational resources. College & Research Libraries, 80(4), 426. https://doi.org/10.5860/crl.80.4.426

Byrne, B. M. (2016). Structural equation modeling with AMOS: Basic concepts, applications, and programming (3rd ed.). Routledge.

Carpenter, S. (2018). Ten steps in scale development and reporting: A guide for researchers. Communication Methods and Measures, 12(1), 25–44. https://doi.org/10.1080/19312458.2017.1396583

Cattell, R. B. (1966). The scree test for the number of factors. Multivariate Behavioral Research, 1(2), 245–276.

Costello, A. B., & Osborne, J. (2005). Best practices in exploratory factor analysis: Four recommendations for getting the most from your analysis. Practical Assessment, Research, and Evaluation, 10(7), 1–9. https://doi.org/10.7275/jyj1-4868

de Winter, J., Dodou, D., & Wieringa, P. A. (2009). Exploratory factor analysis with small sample sizes. Multivariate Behavioral Research, 44(2), 147–181. https://doi.org/10.1080/00273170902794206

DeVellis, R. F. (2017). Scale development: Theory and applications (4th ed.). Sage.

Fabrigar, L. R., & Wegener, D. T. (2012). Factor analysis: Understanding statistics. Oxford University Press.

Federer, L. (2018). Defining data librarianship: A survey of competencies, skills, and training. Journal of the Medical Library Association, 106(3), 294–303. https://doi.org/10.5195/jmla.2018.306

Field, A. (2013). Discovering statistics using IBM SPSS statistics (4th ed.). Sage.

Glynn, T., & Wu, C. (2003). New roles and opportunities for academic library liaisons: A survey and recommendations. Reference Services Review, 31(2), 122–128. https://doi.org/10.1108/00907320310476594

Goharinezhad, S., Faraji, Z., & Jameie, B. (2012). Knowledge, attitude and practice (KAP) study of faculty members on Integrated Digital Library (IDL) in Tehran University of Medical Sciences. International Journal of Digital Library Services, 2(4), 51–62. http://www.ijodls.in/uploads/3/6/0/3/3603729/vol-2_issue-4_51-62.pdf

Henson, R. K., & Roberts, J. K. (2006). Use of exploratory factor analysis in published research: Common errors and some comment on improved practice. Educational and Psychological Measurement, 66(3).

Hinkin, T. R. (1995). A review of scale development practices in the study of organizations. Journal of Management, 21(5), 967–988.

Johanson, G. A., & Brooks, G. P. (2010). Initial scale development: Sample size for pilot studies. Educational and Psychological Measurement, 70(3), 394–400. https://doi.org/10.1177/0013164409355692

Kaiser, H. F. (1960). The application of electronic computers to factor analysis. Educational and Psychological Measurement, 20, 141–151.

Kline, R. B. (2016). Principles and practice of structural equation modeling (4th ed.). Guilford Press.

Kolesnykova, T. O., & Matveyeva, O. V. (2021). First steps before the jump: Ukrainian university librarians survey about OER. University Library at a New Stage of Social Communications Development: Conference Proceedings, 6, 96–107. https://unilibnsd.ust.edu.ua/article/view/248379

Larson, A. (2020). Open education librarianship: A position description analysis of the newly emerging role in academic libraries. The International Journal of Open Educational Resources, 3(1). https://doi.org/10.18278/ijoer.3.1.4

Matingwina, T. (2014). Knowledge, attitudes and practices of University students on Web 2.0 tools: Implications for academic libraries in Zimbabwe. Zimbabwe Journal of Science and Technology, 9(1), 59–72.

Memon, M. A., Ting, H., Cheah, J.-H., Thurasamy, R., Chuah, F., & Cham, T. H. (2020). Sample size for survey research: Review and recommendations. Journal of Applied Structural Equation Modeling, 4(2), 1–20.

Nwaohiri, N. M. (2021). Open educational resources (OER) in Nigerian universities: Promotion and awareness opportunities for academic libraries for a path to higher education success. Library Philosophy and Practice, 5583. https://digitalcommons.unl.edu/libphilprac/5583/

Okamoto, K. (2013). Making higher education more affordable, one course reading at a time: Academic libraries as key advocates for open access textbooks and educational resources. Public Services Quarterly, 9(4), 267–283. https://doi.org/10.1080/15228959.2013.842397

Open Education Network. (n.d.). Open Education Librarianship Certificate. Retrieved January 13, 2025, from https://open.umn.edu/oen/certificate-in-open-education-librarianship

Pett, M. A., Lackey, N. R., & Sullivan, J. J. (2003). Making sense of factor analysis: The use of factor analysis for instrument development in health care research. Sage.

Raykov, T., & Marcoulides, G. A. (2011). Introduction to psychometric theory. Routledge, Taylor & Francis.

Scott, R. E., Harrington, C., & Dubnjakovic, A. (2021). Exploring open access practices, attitudes, and policies in academic libraries. Portal: Libraries and the Academy, 21(2), 365–388. https://doi.org/10.1353/pla.2021.0020

Seaman, J. E., & Seaman, J. (2020). Digital Texts in the Time of COVID: Educational Resources in U.S. Higher Education. Bay View Analytics. https://www.bayviewanalytics.com/reports/digitaltextsinthetimeofcovid.pdf

Snoek-Brown, J. (n.d.). Tacoma Community College Library: Faculty/staff guide to Open Educational Resources (OER): OER myths. Retrieved June 18, 2025, from https://tacomacc.libguides.com/oer/myths

Stevens, J. P. (2009). Applied multivariate statistics for the social sciences (5th ed.). Routledge.

Thompson, B. (2004). Exploratory and confirmatory analysis: Understanding concepts and applications. American Psychological Association.

Thompson, J., & Peach, J. (2023). Making OER sustainable in the library. Journal of Open Educational Resources in Higher Education, 2(1), 253–265. https://doi.org/10.13001/joerhe.v2i1.7203

Thompson, S. D., & Muir, A. (2020). A case study investigation of academic library support for open educational resources in Scottish universities. Journal of Librarianship and Information Science, 52(3), 685–693. https://doi.org/10.1177/0961000619871604

Thornton, E. (2021). Academic librarian experiences and perceived value of OER professional development: A case study (Publication No. 2864874) [Doctoral dissertation, University of Arkansas]. ProQuest Dissertations & Theses Global.

Appendix A

Final Knowledge Subscale of the Open Education - Librarian Knowledge, Attitudes, and Practices (OpenEd-LibKAP) Scale

Instructions: Each of the statements below asks about your current knowledge around open education. Please read each statement and consider the extent to which you agree or disagree.

| Item No. | Item | 1 (Strongly Disagree) | 2 (Somewhat Disagree) | 3 (Neither agree nor disagree) | 4 (Somewhat Agree) | 5 (Strongly Agree) |
|---|---|---|---|---|---|---|
| 1 | I can define the term “open education resources” (OER) | | | | | |
| 2 | I can explain the link between textbook affordability and student success. | | | | | |
| 3 | I know how to assign Creative Commons licenses to my own work. | | | | | |
| 4 | I know how to help others apply Creative Commons licenses to their work. | | | | | |
| 5 | I can explain how Creative Commons licenses enable the open education movement. | | | | | |
| 6 | I know how to find open resources by searching OER repositories. | | | | | |
| 7 | I know how to find open textbooks that have been reviewed for quality. | | | | | |
| 8 | I know how to use the built-in features of search engines like Google, YouTube, and Flickr to limit search results to materials with open licenses. | | | | | |
| 9 | I can educate instructors on how to create openly licensed content. | | | | | |
| 10 | I can educate instructors on how to remix openly licensed content. | | | | | |
| 11 | I am familiar with at least one OER publishing platform. | | | | | |
| 12 | I can provide instructors with examples of open pedagogy. | | | | | |
| 13 | I understand how OER are used to engage in open pedagogical practices. | | | | | |
| 14 | I know how to help instructors use open pedagogical practices in their classroom. | | | | | |
| 15 | I understand the difference between using OER in the classroom and implementing open pedagogical practices. | | | | | |
| 16 | I can explain why OER enables the creation of more diverse course materials. | | | | | |
| 17 | I can explain why OER provides a more equitable learning environment for students. | | | | | |
| 18 | I am familiar with the scholarly literature on the efficacy of OER in decreasing drop, fail, and withdrawal rates among underserved student populations (e.g., first generation, low socioeconomic status, underrepresented minority students, etc.) | | | | | |
| 19 | I am aware of OER resources my institution offers, or lack thereof. | | | | | |
| 20 | I am aware of OER resources offered by groups outside my institution (e.g., state, regional, consortial) | | | | | |
| 21 | I know where to go to ask questions about OER. | | | | | |
| 22 | I know where to refer instructors seeking support for adopting, adapting, or creating OER at my institution. | | | | | |

Appendix B

Final Attitude Subscale of the Open Education - Librarian Knowledge, Attitudes, and Practices (OpenEd-LibKAP) Scale

Instructions: Each of the statements below asks about values you hold on open education practices. Below are key definitions to consider before answering. Please read each statement and consider the extent to which you agree or disagree.

Definitions:

Open Education Practices: Any educational practice enabled by open licenses, such as using OER as course materials, or teaching using open pedagogy.

Open Pedagogy/Open Pedagogical Practice: Teaching by engaging students in the use or creation of open materials.

| Item No. | Item | 1 (Strongly Disagree) | 2 (Somewhat Disagree) | 3 (Neither agree nor disagree) | 4 (Somewhat Agree) | 5 (Strongly Agree) |
|---|---|---|---|---|---|---|
| 1 | Conducting research around open educational practices is valuable scholarly work. | | | | | |
| 2 | Open educational practices promote student success. | | | | | |
| 3 | Open educational practices promote inclusion. | | | | | |

Instructions: Each of the statements below asks about what role you think librarians should play in open educational resources (OER). Below are key definitions to consider before answering. Please read each statement and consider the extent to which you agree or disagree.

Definitions:

Open Education Resources (OER): Educational materials available under open licenses.

Open Pedagogy/Open Pedagogical Practice: Teaching by engaging students in the use or creation of open materials.

Generally, I believe librarians should play a role in…

| Item No. | Item | 1 (Strongly Disagree) | 2 (Somewhat Disagree) | 3 (Neither agree nor disagree) | 4 (Somewhat Agree) | 5 (Strongly Agree) |
|---|---|---|---|---|---|---|
| 4 | Educating instructors about copyright and Creative Commons licenses | | | | | |
| 5 | Educating instructors about how to search for open content. | | | | | |
| 6 | Educating instructors about open pedagogy. | | | | | |
| 7 | Educating instructors about the equity implications of OER. | | | | | |
| 8 | Helping instructors adopt OER materials. | | | | | |
| 9 | Helping instructors create OER materials. | | | | | |
| 10 | Helping instructors identify appropriate OER publishing platforms. | | | | | |
| 11 | Cataloging OER | | | | | |
| 12 | Creating metadata for OER | | | | | |
| 13 | Curating lists of OER for discovery on the open web (e.g., LibGuides, wikis, Open Science Framework) | | | | | |
| 14 | Submitting OER to repositories. | | | | | |
| 15 | Advocating for funding or other support for OER programs/initiatives. | | | | | |
| 16 | Conducting institutional OER needs assessments. | | | | | |

Appendix C

Final Practice Subscale of the Open Education - Librarian Knowledge, Attitudes, and Practices (OpenEd-LibKAP) Scale

Instructions: Each of the statements below asks about behaviors and practices you perform related to open education. Below are key definitions to consider before answering. Please read each statement and consider how frequently you perform that action. Choose “not applicable” if you have not performed the behavior because it is not within the scope of your job description or role.

Definition:

Open Education: The movement to create and use educational materials under open license.

| Item No. | Item | 0 (Never) | 1 (Rarely) | 2 (Occasionally) | 3 (Frequently) | N/A (Not Applicable) |
|---|---|---|---|---|---|---|
| 1 | I disseminate opportunities around open education to instructors (e.g., professional development, funding, scholarly opportunities). | | | | | |
| 2 | I seek opportunities to present on OER to instructors at institutional events. | | | | | |
| 3 | I create metadata for open content (e.g., when cataloging, publishing, or submitting it to an OER or institutional repository). | | | | | |
| 4 | When my colleagues create open content, I encourage it to be placed into an appropriate repository. | | | | | |
| 5 | I encourage participation in open pedagogical activities offered at the disciplinary level, such as NNLM’s “Cite NLM” Wikipedia edit-athon | | | | | |
| 6 | I advocate for funding and other support for my library’s OER initiatives. | | | | | |
| 7 | I communicate with library leadership or institutional administration about the benefits of OER. | | | | | |
| 8 | I lobby for more OER funding beyond my institution (e.g., state or federal funds). | | | | | |
| 9 | I hold OER awareness events. | | | | | |
| 10 | I work with student government organizations on OER initiatives. | | | | | |
| 11 | I work within my institution to raise awareness about the benefits of including OER in promotion and tenure policies. | | | | | |
| 12 | I work with my institution’s bookstore and/or registrar on initiatives to allow students to see textbook costs when registering for courses. | | | | | |