Research Article

Pragmatic Human-Centred Design in Action: Assessing the Tone and Usability of the Behaviours in Dementia Toolkit Website

Nick Ubels

Knowledge Broker

Canadian Coalition for Seniors’ Mental Health

Vancouver, British Columbia, Canada

Email: nick.ubels@vpl.ca

Lauren Albrecht

Manager, Knowledge Mobilization

Canadian Coalition for Seniors’ Mental Health

Edmonton, Alberta, Canada

Email: laurena@ualberta.ca

Woon Chee Koh

MLIS Student

The University of British Columbia

Vancouver, British Columbia, Canada

Email: wooncheekoh@gmail.com

David Conn

Co-Chair, Canadian Coalition for Seniors’ Mental Health

Staff Psychiatrist, Baycrest Health Sciences

Toronto, Ontario, Canada

Email: DConn@baycrest.org

Received: 18 Apr. 2025 Accepted: 22 Aug. 2025

2026 Ubels, Albrecht, Koh, and Conn. This is an Open Access article distributed under the terms of the Creative Commons‐Attribution‐Noncommercial‐Share Alike License 4.0 International (http://creativecommons.org/licenses/by-nc-sa/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly attributed, not used for commercial purposes, and, if transformed, the resulting work is redistributed under the same or similar license to this one.

DOI: 10.18438/eblip30754

Abstract

Objective – The Behaviours in Dementia Toolkit is an online library to support dementia-related mood and behaviour changes. It is intended to be used by two key caregiving audiences: 1) formal, professional care partners, and 2) informal care partners. The purpose of this study was to assess the content tone and usability of the website to meet the needs of its two user groups.

Methods – The multi-method design included electronic surveys and interviews. Content tone was assessed using word reaction and highlighter tests. Task-specific usability was assessed with the single-ease question (SEQ), time on task, and completion rate. The System Usability Scale (SUS) measured overall usability before and after design changes based on initial results.

Results – Content tone was friendly, trustworthy, desirable, and clear, but informal care partners found medical jargon confusing. Task-specific usability was generally acceptable. At time one, the SUS score for formal care partners corresponded to a letter grade of A, but the score for informal care partners corresponded to a C. Content tone and usability testing drove critical changes to the library. Time two SUS scores subsequently converged for the two user groups, at an A- for formal care partners and a B for informal care partners. Divergent requirements for formal and informal care partners were identified and addressed using pragmatic human-centred design methods.

Conclusion – Jargon, information overload, and search flow require careful attention in an online health information library. Digital libraries and other projects that facilitate access to health information may find these usability methods worth considering at the outset of project planning, given the actionable insights they can provide. These techniques are suitable for projects with a limited budget and tight timeframe.

Introduction

Dementia is a term for several neurodegenerative disorders that impact memory, thinking, and performance of everyday activities (World Health Organization, 2023). Most of the estimated 55 million people living with dementia worldwide will experience dementia-related mood or behaviour changes (Kales et al., 2015; Steinberg et al., 2008; World Health Organization, 2023). These changes are known clinically as behavioural and psychological symptoms of dementia (BPSD). They vary widely in their expression and severity, but typically contribute to reduced quality of life for people living with dementia, as well as increased stress and burnout among those involved in their care (Cerejeira et al., 2012). Distressing changes in mood or behaviour arise from many inter-related factors and are best understood, prevented, and addressed using a holistic biopsychosocial-cultural approach (CCSMH, 2024). Increased caregiver knowledge of prevention and intervention strategies can reduce the frequency and severity of dementia-related mood or behaviour changes and therefore enhance the well-being of people living with dementia and their caregivers alike (Isik et al., 2018; Polenick et al., 2018). However, it can be difficult to find high-quality information to enable effective, person-centred approaches to support and care (Jagoda et al., 2023).

Recognizing this need, the Canadian Coalition for Seniors’ Mental Health (CCSMH), a pan-Canadian charitable organization, sought to better equip both formal and informal care partners, many of whom are older adults, with practical, credible, and inclusive information resources to enhance their caregiving knowledge and ability. Most Canadians seek health information online (Statistics Canada, 2021); thus, CCSMH created the Behaviours in Dementia Toolkit (behavioursindementia.ca), a website that acts as a digital library with more than 300 resources for users to browse and access based on their specific information needs. To develop the Toolkit, CCSMH engaged in a pragmatic, human-centred design (HCD) process. HCD involves real, prospective users to make interactive systems more usable, accounting for human factors within the design process (ISO, 2019; Nimmanterdwong et al., 2022). This evidence-based approach helps ensure digital health information products meet their intended users’ preferences and needs (Denton et al., 2016; Harte et al., 2017; Taylor et al., 2011; van Velson et al., 2022; Ye et al., 2023). We describe our HCD process as pragmatic because we selected meaningful, resource-conscious methods to maximize our usability assessment within the project timeframe and budget. The website was designed to support two main user groups involved in the care of a person living with dementia: informal, often unpaid, care partners (e.g., family members, friends, and neighbours) and formal, professional health care providers (e.g., doctors, nurses, and personal support workers). Reaching two broad user groups with varied needs, backgrounds, and potentially different and contradictory preferences presented unique challenges for optimizing the website design. Our overarching design process is described in a separate publication (Albrecht et al., 2025), as is our approach to developing a unique, person-centred metadata schema for this library (Ubels et al., 2024).

Prior to the usability study, we carried out the following activities to shape the design of the website:

1. Established a multidisciplinary working group that met monthly for the duration of the project;

2. Collected 285 surveys of formal and informal care partners to identify their information needs and preferences related to behaviours in dementia (CCSMH, 2024);

3. Conducted an environmental scan to examine how to accelerate health information flow among older adults (Ubels & Albrecht, 2024);

4. Completed an international environmental scan, which identified 1,408 English-language resources on various aspects of behaviours in dementia;

5. Completed a focus group with five older adults with lived experience as informal care partners;

6. Conducted meetings with organizations representing remote and rural, Indigenous, 2SLGBTQIA, and Black perspectives on dementia care;

7. Completed six personas and empathy maps to identify knowledge gaps (Kaplan, 2023);

8. Hosted 145 people in a webinar on behaviours in dementia where we learned more about information needs of formal and informal care partners through the question-and-answer session and pre-/post- session polls.

Throughout the development process, we recognized that there was meaningful overlap between the information and design needs of formal and informal care partners that would make a single interface valuable. Each of these user groups is part of a broader network of dementia care partners involved in supporting someone living with dementia, whose information needs and knowledge exist on a continuum (Rutkowski et al., 2021). This crossover also exists in other common scenarios. For example, formal care partners may wish to share brief, clear-language explainer videos about responding to a specific behaviour with an informal care partner. Conversely, informal care partners may wish to review medication practice guidelines more commonly used by formal care partners when prescribing. As we learned more about each user group, we also recognized emergent challenges, such as divergent terminology preferences and differing tolerance for and comfort with more complex digital information systems. Our goal was to assess and improve the overall usability of the website for both informal and formal care partners. The focus of this paper is to describe the usability evaluation of our product in development, which is an integral component of the HCD process.

Literature Review

According to industry standard ISO 9241 (ISO, 2018), usability means the extent to which a product can be used by its intended users to achieve specified goals with effectiveness, efficiency, and satisfaction. Usability studies are conducted during development to determine if a website or online information resource has met its goals (Barbara et al., 2016; Mutatina et al., 2019; van den Haak & van Hooijdonk, 2010). Evaluating this dimension allows website and digital library designers to better understand the perspectives of their intended users (Kous et al., 2018; Okhovati et al., 2017). When embedded in an iterative design process, usability evaluation helps address barriers users may experience when attempting to use a website, tool, app, or other kind of interface. It is critical to assess the usability of digital dementia care information interventions to increase their potential impact (Jagoda et al., 2023).

Access to relevant health information does not guarantee it can be read, interpreted, and implemented. Overwhelming and difficult-to-understand content, including medical jargon, can impede understanding (Kous et al., 2018; Ubels & Albrecht, 2024; van den Haak & van Hooijdonk, 2010; Wilson et al., 2021). Additional common digital usability issues can include poor or overly complex functionality, small screens or text, and low colour contrast (Wilson et al., 2021). Usability evaluation allows designers to test the acceptability of a prototype’s content, navigation, and functionality before making user-led changes to enhance its usability (Schmidt et al., 2020).

A variety of methods can be used to evaluate usability. Using multiple methods is recommended in order to improve the designers’ understanding of users’ needs (Ye et al., 2023). Poll (2007) categorized these methods into those that include user participation and those that do not (e.g., heuristic evaluation and cognitive walk-throughs). This study employed usability evaluation methods that involved user participation, including word reaction and highlighter tests, which examine content tone (i.e., friendliness, trustworthiness, desirability, and clarity); concurrent think-aloud tasks, which look at resource navigation and function; the Single Ease Question (SEQ), which examines the perceived usability of a specific task (i.e., the user rates the ease of completion on a scale of one to seven); and the industry standard System Usability Scale (SUS) questionnaire, which assesses perceived overall usability of the system (i.e., a standardized 10-item scale) (Allison et al., 2019; Brett et al., 2016; Hill et al., 2021; Nielsen, 2001; Poncette et al., 2021; Sauro, 2010; Schmidt et al., 2020; Silva et al., 2021). SUS has become a go-to tool in usability testing, with established benchmarks that allow for easy comparison to other systems (Lewis & Sauro, 2018). A SUS score of 68 is widely deemed acceptable (Hyzy et al., 2022). A combination of quantitative measures (e.g., SEQ, time on task, and SUS) and qualitative measures (e.g., concurrent think-aloud, content tone) provides the most robust data to inform rapid improvements to a digital information system (Ye et al., 2023).

Most usability evaluation conducted on digital libraries concerns academic libraries, with an emphasis on student, faculty, and staff user audiences (Emde et al., 2009; Jilani et al., 2025; Kous et al., 2018). Health information products are often more informed by formal care partner needs than by the sometimes-divergent needs of informal care partner and older adult users (Reeder et al., 2013). Several studies have noted differences in information and usability needs for formal and informal care partners when it comes to health information systems. Formal care partners’ understanding of informal care partners’ information needs may be significantly different than those expressed by informal care partners themselves (Oakley-Girvan, 2021). Formal care partners, drawing on their medical domain knowledge, are more likely to prioritize speed and precision, whereas medical jargon, information overload, and complicated system design are the most likely usability barriers expressed by informal care partners, especially older adults (Neilson & Wilson, 2011; Ubels & Albrecht, 2024). For example, the terminology preferred by each key user group differs: formal care partners commonly use medical jargon that is more precise but requires specialized knowledge and a high reading level to decipher, which may be a barrier for informal care partners seeking relevant information (Wittenberg et al., 2020). Bridging the communication gap between informal and formal care partners is a commonly noted challenge when creating a shared information resource (Burgdorf et al., 2023). While ease of use is important for any user, factors such as personal distress associated with the provision of care or lower digital literacy may make a high level of usability even more important for informal care partners (Hassan, 2020; Ubels & Albrecht, 2024; Ye et al., 2023).

This paper provides a unique contribution to the literature by applying these techniques to the development of a health library with two distinct user groups, spanning formal and informal care partners of persons living with dementia. Our findings present practical considerations for designers developing digital libraries for user groups likely to express divergent needs and preferences.

Aims

The objective of this study was to evaluate the usability of the Behaviours in Dementia Toolkit website to meet the needs of our two key user groups: formal and informal care partners. To understand its content tone and usability, we assessed the following research questions:

1. Is the content tone of the website suitable for our users?

2. Is the website understandable for both user groups?

3. What are the functional challenges encountered by our users?

Methods

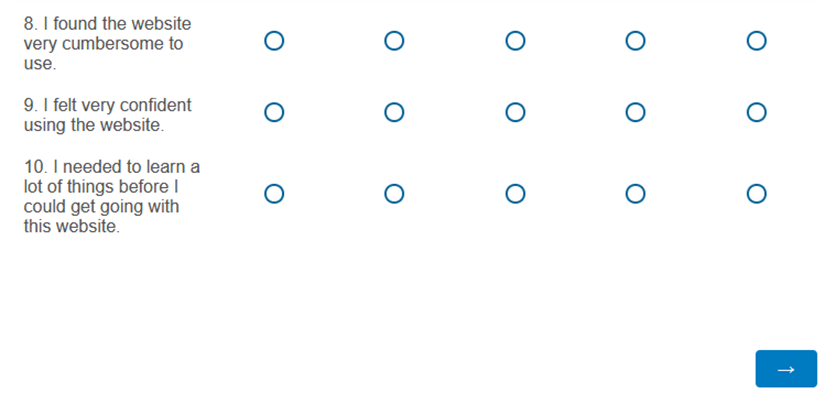

This study was conducted using a multi-method research design with three phases. In Phase One, we distributed an electronic survey and conducted individual structured interviews to assess the prototype of the Behaviours in Dementia Toolkit website. In Phase Two, we used Phase One results to identify, prioritize, and complete changes to the prototype website design. In Phase Three, we launched the Behaviours in Dementia Toolkit to the public and distributed a second electronic survey to its users. Ethics approval for this study was obtained from the Baycrest Research Ethics Board (REB# 23-42 and REB# 24-07). See Figure 1 for a diagram of each phase of this study and methods used.

Figure 1

Phases and methods used in evaluating the Behaviours in Dementia Toolkit website.

The goal of the usability study was to understand the challenges participants face when searching for and accessing resources on our website. To inform design improvements, we required multiple methods to understand why participants behaved the way they did while completing tasks. While quantitative measures (e.g., time on task, error rates) and observations can show what participants did and how they did it, these measures do not provide insight into why these activities were challenging. We used qualitative measures to better understand underlying reasons for challenges captured in the quantitative measures and what changes might alleviate these challenges to best determine effective design changes to the website.

Phase One: Website Prototype Evaluation

Electronic Survey

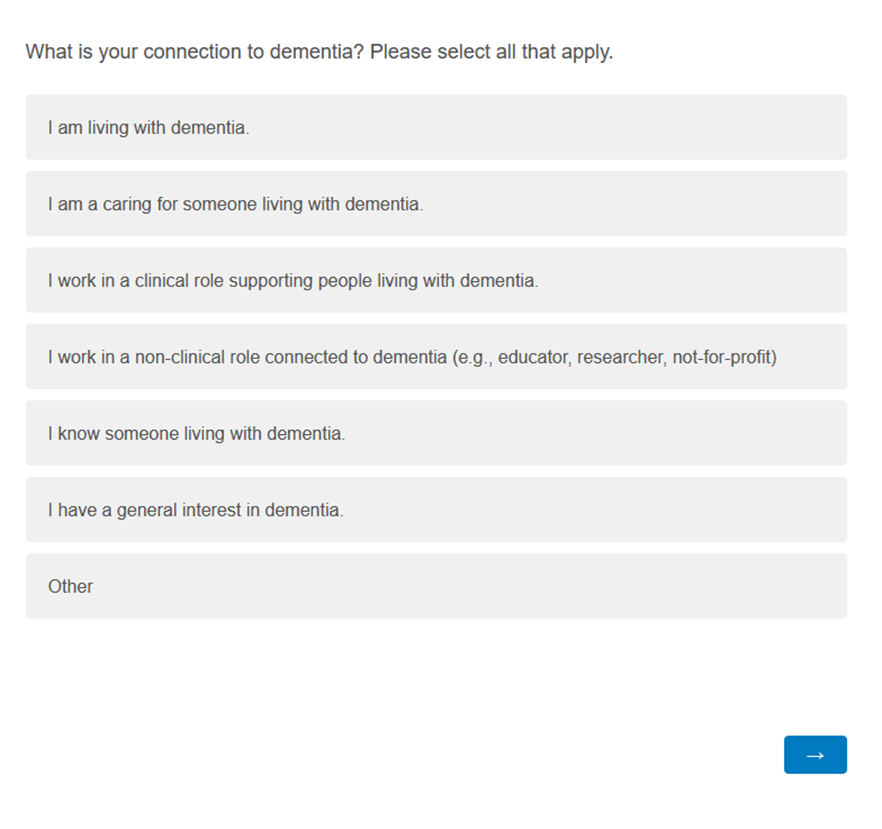

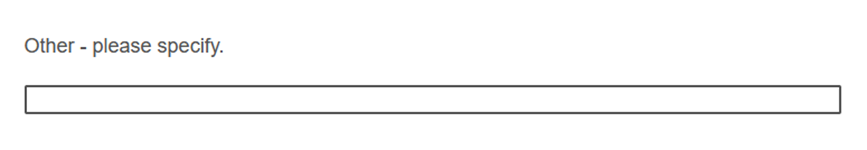

Formal and informal care partners who were English-speaking and living in Canada were recruited to complete an electronic survey through non-probabilistic convenience sampling via the networks of the Behaviours in Dementia Toolkit working group members (e.g., social media, newsletters). Survey data were collected for 27 days.

The electronic survey (Appendix A) collected demographic information and assessed the tone of the website content. To evaluate content tone, survey respondents were asked to read two brief passages from the Behaviours in Dementia Toolkit website and complete a word reaction test for each one (Hampshire et al., 2022). Then they were asked to rate the content in terms of friendliness, trustworthiness, and desirability.

Quantitative data were cleaned and analyzed descriptively using Qualtrics and Excel. Surveys were included in the analysis if respondents completed at least one quantitative response following the demographic questions.

Individual Structured Interview

Respondents to the electronic survey could indicate their interest in attending an interview. Those who expressed willingness were contacted with further information about the interview and, if they remained interested, an interview time was scheduled. Due to participants’ varied geographical locations and time zones, the interviews took place using a videoconferencing application (Zoom) over 16 days.

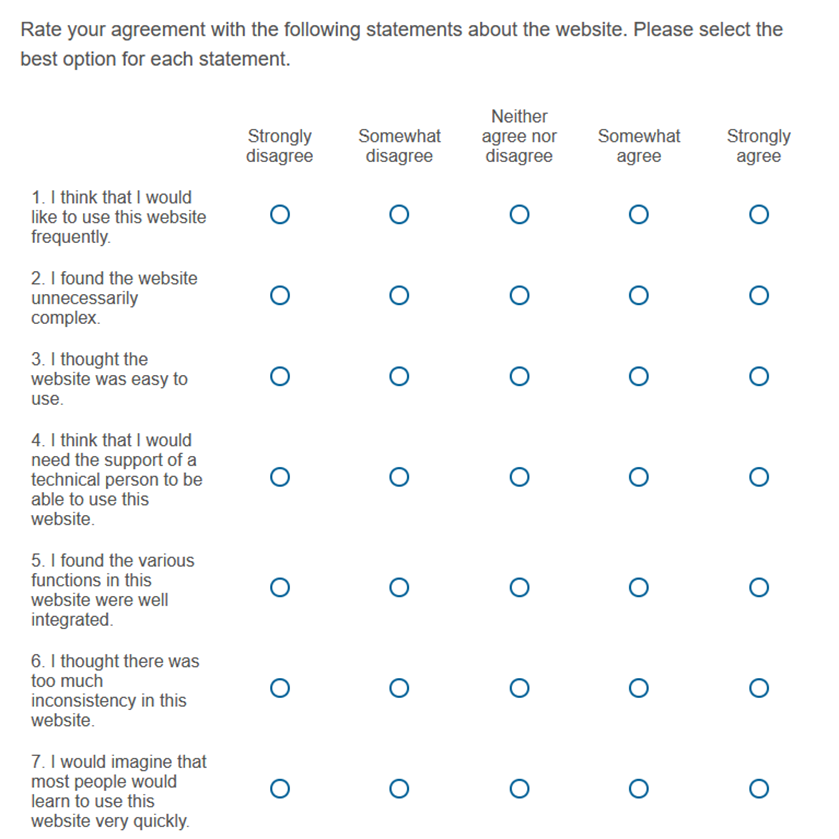

The interviews followed a structured script of questions and included two assessments: 1) content tone via the highlighter test (Gale, 2014) and 2) usability of navigation and functionality via completion rate, time on task, and SEQ for each of three tasks, and SUS for the overall website (Schmidt et al., 2020).

Content Tone Assessment

Using the highlighter test (Gale, 2014), participants were asked to electronically highlight words from passages that they felt were easy to understand in green, highlight words they found confusing in red, and to rate the passage’s clarity (Appendix B). Participants were asked to explain why they highlighted particular words.

Both qualitative and quantitative data were collected. The frequencies of overlapping highlighted phrases were tabulated in Excel and MIRO, whereas ratings were analyzed in Excel.
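The frequency tabulation described above can be illustrated with a minimal Python sketch. This is not the authors' procedure (the study used Excel and MIRO); the function name `tally_highlights`, the `(phrase, colour)` input format, and the sample data are all hypothetical, and phrases are assumed to match after trimming whitespace and lowercasing.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def tally_highlights(highlights: Iterable[Tuple[str, str]]) -> Dict[str, Tuple[int, int]]:
    """Count how often each phrase was highlighted green (easy) or red (confusing).

    `highlights` is a hypothetical input format: one (phrase, colour) pair per
    highlight made by any participant.
    """
    easy: Counter = Counter()
    confusing: Counter = Counter()
    for phrase, colour in highlights:
        key = phrase.strip().lower()  # assumption: case and spacing are ignored
        if colour == "green":
            easy[key] += 1
        elif colour == "red":
            confusing[key] += 1
        else:
            raise ValueError(f"unexpected highlight colour: {colour!r}")
    # A Counter returns 0 for phrases it has never seen.
    return {p: (easy[p], confusing[p]) for p in sorted(set(easy) | set(confusing))}


# Hypothetical highlights from three participants (not study data).
sample = [
    ("Curated", "red"),
    ("curated ", "red"),
    ("Free resources", "green"),
]
for phrase, (n_easy, n_confusing) in tally_highlights(sample).items():
    print(f"{phrase}: easy={n_easy}, confusing={n_confusing}")
```

Keeping the two counts side by side for each phrase makes it easy to spot terms that divide participants, since the same phrase may be easy for one user group and confusing for the other.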

Navigation & Functionality Usability Assessment

For the navigation and functionality usability assessment, participants were asked to complete three key website tasks while verbalizing their thoughts: 1) make a search; 2) apply filters to narrow the search; and 3) access a resource in the collection (Brett et al., 2016). We used a think-aloud protocol to gather more context for participants’ thinking and decision-making processes as they completed these tasks. At the end of each task, participants completed the SEQ (Sauro, 2010). This provided insights on task-specific problem areas in the interface. Completion rate and time on task were also evaluated (Nielsen, 2001). At the end of the set of three tasks, participants completed the SUS questionnaire (Sauro, 2011). This provided a baseline score to track changes across design iterations and determine the effectiveness of any changes made between Phases One and Three.

Both qualitative and quantitative data were collected. Usability metrics were recorded, scored, and analyzed in Excel. Significant statements from users’ verbalizations, including their thoughts about how easy or difficult they perceived each task to be, were highlighted, extracted, and thematically analyzed using affinity diagramming in MIRO (Rosala, 2022). SUS scores were determined using the standard scoring guide (Brooke, 1986). Letter grades on the curved grading scale were assigned following accepted practice (Lewis & Sauro, 2018; see Table 1).

Table 1

SUS Curved Grading Scale (Lewis & Sauro, 2018)

| Grade | SUS | Percentile range |
|---|---|---|
| A+ | 84.1 – 100 | 96 – 100 |
| A | 80.8 – 84.0 | 90 – 95 |
| A- | 78.9 – 80.7 | 85 – 89 |
| B+ | 77.2 – 78.8 | 80 – 84 |
| B | 74.1 – 77.1 | 70 – 79 |
| B- | 72.6 – 74.0 | 65 – 69 |
| C+ | 71.1 – 72.5 | 60 – 64 |
| C | 65.0 – 71.0 | 41 – 59 |
| C- | 62.7 – 64.9 | 35 – 40 |
| D | 51.7 – 62.6 | 15 – 34 |
| F | 0 – 51.6 | 0 – 14 |
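For readers less familiar with SUS, the following Python sketch shows how a single respondent's score is typically computed and then mapped onto the curved grading scale in Table 1. It follows the widely used SUS scoring convention (odd-numbered items contribute the response minus one, even-numbered items contribute five minus the response, and the sum is multiplied by 2.5). The function names and the `GRADE_FLOORS` lookup are illustrative assumptions, not the spreadsheet used in this study.

```python
from typing import Sequence

# Lower bound of each grade band, copied from Table 1 (Lewis & Sauro, 2018).
GRADE_FLOORS = [
    (84.1, "A+"), (80.8, "A"), (78.9, "A-"), (77.2, "B+"), (74.1, "B"),
    (72.6, "B-"), (71.1, "C+"), (65.0, "C"), (62.7, "C-"), (51.7, "D"),
    (0.0, "F"),
]


def sus_score(responses: Sequence[int]) -> float:
    """Score one completed 10-item SUS questionnaire (each response 1 to 5)."""
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("SUS needs ten responses, each between 1 and 5")
    total = 0
    for item_number, response in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += response - 1   # odd items are positively worded
        else:
            total += 5 - response   # even items are negatively worded
    return total * 2.5              # rescale the 0-40 sum to 0-100


def sus_grade(score: float) -> str:
    """Map a SUS score (0 to 100) to its letter grade from Table 1."""
    if not 0 <= score <= 100:
        raise ValueError("SUS scores range from 0 to 100")
    for floor, grade in GRADE_FLOORS:
        if score >= floor:
            return grade
    raise AssertionError("unreachable: the final floor is 0.0")


# A hypothetical respondent who answers 3 (neutral) to every item.
neutral = sus_score([3] * 10)
print(neutral, sus_grade(neutral))
```

A group-level grade, such as those reported in this paper, would be obtained by averaging individual scores first and then looking up the mean in the table.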

Phase Two: Website Changes

Based on the results in Phase One, we identified and prioritized changes to the website prototype based on their potential user impact (e.g., reducing barriers to access a resource) and feasibility of implementation within our project timeline and budget. Changes were implemented and the website was launched to the public.

Phase Three: Launch and Follow Up Evaluation

Electronic Survey

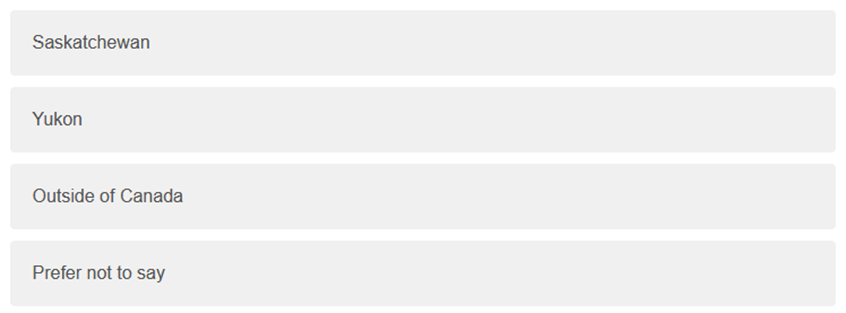

After the website launch, users were invited to complete an electronic survey using non-probabilistic, purposive sampling. Participants were recruited via a banner on the website header, CCSMH social media messages, and an electronic newsletter distributed by CCSMH. Survey data were collected for 20 days.

The survey contained demographic questions and the SUS questionnaire (Sauro, 2011; Appendix C). Quantitative data were collected, cleaned, and then analyzed descriptively using Qualtrics and Excel. SUS was scored using the same protocol as time one (Brooke, 1986; Lewis & Sauro, 2018).

Results

Demographics

For the Phase One surveys, 114 participants provided consent and 82 participants were included in the analysis. Respondents who did not complete at least one non-demographic question were excluded from the analysis. Demographic characteristics of survey participants included in the analysis are presented in Table 2.

Table 2

Survey 1 Participant Demographics (n=82)

| Role (select all that apply) | n | % | Age group | n | % | I am comfortable using technology on a daily basis (rate your agreement) | n | % |
|---|---|---|---|---|---|---|---|---|
| Health care provider | 49 | 59.8 | 18-34 | 7 | 8.5 | Strongly disagree | 5 | 6.1 |
| Care partner of someone living with dementia | 20 | 24.4 | 35-49 | 22 | 26.8 | Somewhat disagree | 1 | 1.2 |
| Older adult | 15 | 18.3 | 50-64 | 35 | 42.7 | Neither agree nor disagree | 1 | 1.2 |
| Educator | 12 | 14.6 | 65-74 | 8 | 9.8 | Somewhat agree | 22 | 26.8 |
| Other | 10 | 12.2 | 75-84 | 6 | 7.3 | Strongly agree | 53 | 64.6 |
| Knowledge broker | 7 | 8.5 | 85+ | 4 | 4.9 | | | |
| Researcher | 6 | 7.3 | | | | | | |

Table 3

Individual Structured Interview Participant Demographics (n=12)

| Role (select all that apply) | n | % | Age group | n | % | I am comfortable using technology on a daily basis | n | % |
|---|---|---|---|---|---|---|---|---|
| Health care provider | 7 | 58.3 | 35-49 | 6 | 50.0 | Somewhat agree | 2 | 16.7 |
| Care partner of someone living with dementia | 7 | 58.3 | 50-64 | 2 | 16.7 | Strongly agree | 10 | 83.3 |
| Older adult | 1 | 8.3 | 65-74 | 4 | 33.3 | | | |

For the Phase Three survey, a total of 230 participants provided consent and 104 people completed the SUS questionnaire. A subset of 46 respondents who self-identified as a member of our two primary user groups (i.e., formal and informal care partner) were included in this analysis. Respondents who were not part of these two groups (e.g., general public with an interest in dementia, researchers, and others) were excluded from this analysis. All participants resided in Canada at the time of their participation. Demographic characteristics of survey participants included in the analysis are presented in Table 4.

Table 4

Survey 2 Respondent Demographics (n=46)

| What is your connection to dementia? (select all that apply) | n | % |
|---|---|---|
| I am caring for someone living with dementia | 39 | 84.8 |
| I work in a clinical role supporting people living with dementia | 7 | 15.2 |

Participants in the individual structured interviews were balanced between informal and formal care partners (Table 3). Survey 1 participant demographics (Table 2) more heavily represent formal care partners, while survey 2 participant demographics (Table 4) more heavily represent informal care partners. While we could not control for the balance of respondents in these surveys, they were sufficiently representative for the purpose of our usability study.

Content Tone Assessment

Content tone was evaluated in both the electronic survey via the word reaction test and the individual structured interview via the highlighter test. In both tests, two passages were examined.

For passage A (Appendix A), the top words selected from the word reaction test indicated that tone of voice was welcoming (59%), friendly (42%), informative (40%), and respectful (38%). For passage B (Appendix A), the top words selected from the word reaction test indicated that tone of voice was informative (69%), professional (46%), and caring (35%) (see Table 5). Respondents rated the passages as somewhat to very friendly (mean=4.3, 3.9), somewhat to very trustworthy (mean=4.6, 4.3), and somewhat to very desirable (mean=4.1, 3.8), with passage A scoring slightly higher than passage B (see Table 5).

Table 5

Top Five Word Selections for Word Reaction Test

| Homepage (Passage A) (n=81) | n | % | What Are Behaviours in Dementia? (Passage B) (n=72) | n | % |
|---|---|---|---|---|---|
| Welcoming | 48 | 58.5 | Informative | 50 | 69.4 |
| Friendly | 34 | 41.5 | Professional | 33 | 45.8 |
| Informative | 34 | 40.2 | Caring | 25 | 34.7 |
| Respectful | 31 | 37.8 | Clinical | 21 | 29.2 |
| Professional | 27 | 32.9 | Respectful | 21 | 29.2 |

Mean ratings for friendliness, trustworthiness, and desirability of content on a scale of five.

| Item | Friendliness | Trustworthiness | Desirability |
|---|---|---|---|
| Passage A (n=80) | 4.3 | 4.6 | 4.1 |
| Passage B (n=73) | 3.9 | 4.3 | 3.8 |

Mean ratings for clarity of content on a scale of five.

| Item | Informal care partners or older adults (n=6) | Formal care partners (n=5) | Overall (n=11) |
|---|---|---|---|
| Passage A | 4.1 | 4.6 † | 4.4 |
| Passage B | 3.8 | 4.8 † | 4.2 |

† One unit non-response

Generally, interview participants indicated that the content was clear (see Table 5), with some points of confusion. Informal care partners rated both passages as less clear than formal care partners. Table 6 summarizes the frequency count of easy to understand and confusing words highlighted by participants, and additional notes from their explanations.

Table 6

Frequency Count for Easy to Understand and Confusing Word(s) for Passages A & B

| Word(s) | Easy to understand | Confusing | Additional notes |
|---|---|---|---|
| Antipsychotic | 2 | 6 | Technical term. Specialized word for health care provider users; less familiar for care partner users |
| Non-pharmacological approaches | 3 | 4 | Technical term. Specialized word for health care provider users; less familiar for care partner users |
| Evidence informed | 3 | 3 | Technical term. Specialized word for health care provider users; less familiar for care partner users |
| Curated | 0 | 5 | Unfamiliar word. Unsure of the meaning of the word. |
| General and clinical pathways | 0 | 5 | Technical term. Specialized word for health care provider users; less familiar for care partner users |
| Free resources | 5 | 0 | |
| Compassionately respond | 5 | 0 | |

Note. Included words with a frequency count of five or more.

Navigation and Functional Usability Assessment

For the website usability tests, the overall completion rate of the three tasks varied from 66.7 to 100 percent; overall mean time on task ranged from 1:02 to 4:08 minutes; and overall mean SEQ scores ranged from 4.0 to 5.9 (see Table 7). Results are presented for all participants, formal care partners (abbreviated HCP for health care providers), and informal care partners (abbreviated CP & OA for care partners and older adults). Searching for an item in the collection had the lowest completion rate (66.7%), highest mean time on task (4:08 minutes), and lowest mean SEQ score (4.0).

Table 7

Mean Completion Rate, Time on Task and SEQ Scores for Three Tasks (n=12)

| Task | Completion rate (%): Overall (n=12) | Completion rate (%): HCP (n=6) | Completion rate (%): CP & OA (n=6) | Mean time on task (min:s): Overall (n=12) | Mean time on task (min:s): HCP (n=6) | Mean time on task (min:s): CP & OA (n=6) | Mean SEQ score: Overall (n=12) | Mean SEQ score: HCP (n=6) | Mean SEQ score: CP & OA (n=6) |
|---|---|---|---|---|---|---|---|---|---|
| Search for an item in the collection | 66.7 | 83.3 | 50.0 | 4:08 | 3:12 | 5:17 | 4.0 | 4.3 | 3.7 |
| Apply filters to narrow your search | 75.0 | 100.0 | 50.0 | 1:02 | 0:32 | 1:39 | 5.2 | 6.5 | 3.8 |
| Access one item | 100.0 | 100.0 | 100.0 | 1:19 | 0:49 | 1:49 | 5.9 | 6.5 | 5.2† |

† One unit non-response.
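As an illustration of how the three task-level metrics in Table 7 can be derived from raw observations, here is a minimal Python sketch. The `TaskAttempt` record, the `summarize` function, and the sample values are hypothetical and are not the study data; the sketch also assumes that time on task is averaged over all attempts, whether or not the task was completed, and that skipped SEQ responses are left out of the mean.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional, Sequence, Tuple


@dataclass
class TaskAttempt:
    """One participant's attempt at one task (hypothetical record layout)."""
    completed: bool
    seconds: int
    seq: Optional[int]  # SEQ rating from 1 to 7, or None if not answered


def summarize(attempts: Sequence[TaskAttempt]) -> Tuple[float, str, float]:
    """Return (completion rate in %, mean time as m:ss, mean SEQ score)."""
    if not attempts:
        raise ValueError("at least one attempt is required")
    completion = 100 * sum(a.completed for a in attempts) / len(attempts)
    mean_seconds = round(mean(a.seconds for a in attempts))
    minutes, seconds = divmod(mean_seconds, 60)
    answered = [a.seq for a in attempts if a.seq is not None]
    if not answered:
        raise ValueError("no SEQ responses to average")
    return round(completion, 1), f"{minutes}:{seconds:02d}", round(mean(answered), 1)


# Hypothetical attempts by four participants (not study data).
attempts = [
    TaskAttempt(completed=True, seconds=60, seq=6),
    TaskAttempt(completed=True, seconds=90, seq=5),
    TaskAttempt(completed=True, seconds=120, seq=None),  # SEQ non-response
    TaskAttempt(completed=False, seconds=150, seq=3),
]
print(summarize(attempts))
```

Running the same summary separately for each user group is what exposes the kind of gap seen in Table 7, where overall figures can mask much weaker results for one group.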

Qualitative user feedback from the concurrent think-aloud test was highlighted, extracted, and thematically analyzed using affinity diagramming in MIRO, without separating the two care partner groups (see Table 8). Problems with search and information overload were the most frequently mentioned, followed by problems applying filters.

Table 8

Ranked Summary of Frequent Usability Issues Identified During Testing

| Item | Context | Problem(s) |
|---|---|---|
| 1. | Users feel there is a missing step in flow from “search” to “results”. Expecting keyword search bar. | a. Clicking “Find resources” or “Start your search” yields results page, rather than prompt to begin keyword searching. b. Users do not see the search bar |
| 2. | Users feel overwhelmed with search/results page due to information overload | a. Resources presented in card format require too much zig zag eye movement b. Results page is too difficult to read with too much text |
| 3. | Users feel they need to “fill in” all filter options | a. On smaller screens, the filter options are cropped off (Desktop with smaller height, or mobile) b. No results when too many filters are applied |
| 4. | Users thought filters were not working | a. Lack of clear visual feedback a change has occurred in results b. No clear action to apply the filters c. Clicked “Reset all” and anticipated this would apply filters – strong contrast of button feels like a call to action. |
| 5. | Users thought search bar was not working | a. Lack of clear visual feedback that a change has occurred in search results b. No clear action (e.g., button) to apply keyword search |
| 6. | [Mobile] keyboard does not disappear after user enters search term | a. Users may not know how to “remove” keyboard to use whole area for interaction |
| 7. | Users did not realize there is content after the homepage fold | a. “Not sure where to start” is not visible for smaller resolutions. |
| 8. | Homepage category text not displaying properly on iPad (smaller breakpoints) | a. Difficult to read and understand text |
| 9. | Users thought placeholder image area on item page is interactive | a. Misleading visual cues (i.e., play symbol) |

At time one, the overall SUS mean score was 74.6, which corresponds to a letter grade of B and falls within the acceptable range of usability. The formal care partner sub-group had a mean score of 82.1 (grade A, good range of usability), whereas informal care partner and older adult users had a mean score of 67.1 (grade C), which was below the average accepted score of 68.

At time two, the SUS for the launch website showed a small overall increase to a mean score of 75.6 (overall change of +1.0), which remained a B grade in the acceptable range. The time two SUS for formal care partners decreased by a small margin (-2.5) to a mean score of 79.6, still an acceptable A-. The SUS for informal care partner and older adult users increased substantially at time two (+7.8) to a mean score of 74.9, improving their classification to a B grade in the acceptable range of usability. See Table 9 for a summary of time one and time two SUS.

Table 9

Summary of SUS Scores

| | n | Mean | Grade |
| --- | --- | --- | --- |
| All participants | | | |
| Phase One | 12 | 74.6 | B |
| Phase Two | 46 | 75.6 | B |
| Change | | +1.0 | |
| Formal care partner users | | | |
| Phase One | 6 | 82.1 | A |
| Phase Two | 7 | 79.6 | A- |
| Change | | -2.5 | |
| Informal care partner and older adult users | | | |
| Phase One | 6 | 67.1 | C |
| Phase Two | 39 | 74.9 | B |
| Change | | +7.8 | |
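
The grades and changes summarized in Table 9 can be recomputed from the reported means. The following is a minimal sketch in Python, assuming the grade bands of the Sauro-Lewis curved grading scale. This section does not state which grading scale was applied, so the `GRADE_BANDS` values and the names `sus_grade` and `summarize` are illustrative assumptions rather than the authors' procedure. Under that assumption, the bands reproduce the grades reported in Table 9.

```python
# Sketch: map SUS mean scores to letter grades and recompute the changes in Table 9.
# ASSUMPTION: grade bands follow the Sauro-Lewis curved grading scale; the
# article does not name the scale it used. Function names are hypothetical.

# (lower bound of band, grade), ordered from highest band to lowest
GRADE_BANDS = [
    (84.1, "A+"), (80.8, "A"), (78.9, "A-"), (77.2, "B+"), (74.1, "B"),
    (72.6, "B-"), (71.1, "C+"), (65.0, "C"), (62.7, "C-"), (51.7, "D"),
    (0.0, "F"),
]


def sus_grade(mean_score: float) -> str:
    """Return the letter grade for a SUS mean score between 0 and 100."""
    if not 0 <= mean_score <= 100:
        raise ValueError("SUS scores range from 0 to 100")
    for lower_bound, grade in GRADE_BANDS:
        if mean_score >= lower_bound:
            return grade
    return "F"  # unreachable, kept for completeness


# Mean scores as reported in Table 9: (time one, time two)
TABLE_9 = {
    "All participants": (74.6, 75.6),
    "Formal care partner users": (82.1, 79.6),
    "Informal care partner and older adult users": (67.1, 74.9),
}


def summarize(table: dict) -> dict:
    """Return (grade at time one, grade at time two, change) per group."""
    return {
        group: (sus_grade(t1), sus_grade(t2), round(t2 - t1, 1))
        for group, (t1, t2) in table.items()
    }


if __name__ == "__main__":
    for group, (g1, g2, change) in summarize(TABLE_9).items():
        print(f"{group}: {g1} -> {g2} ({change:+.1f})")
```

Rounding the difference to one decimal place matches the precision of the reported means and avoids floating-point noise in the change column.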

Discussion

This usability study illustrated the challenges of addressing the needs and preferences of two different audiences in the design of a health library. Our intention was to create a low-barrier interface for both key audiences; however, our working group consisted of health care providers and researchers, and this dominant perspective was subsequently overrepresented in our prototype design. We anticipated that informal care partners would provide more actionable feedback on the first iteration of the website. Our usability testing provided a critical opportunity to check our design decisions and course correct before launching the Behaviours in Dementia Toolkit to the public so that it could be successfully implemented as a useful tool to support the care practices of both formal and informal care partners.

The results of the content tone assessment in Phase One demonstrated that initial content was perceived to be relatively friendly, trustworthy, and desirable for both intended user groups. Participants indicated that content for the homepage was positively regarded, aligning with our goal to provide a supportive space for users, considering that health information searching can be an emotional experience. The content for the “What Are Behaviours in Dementia?” page conveyed an informational and professional tone that was still perceived to be caring and respectful. This aligned with our goal of communicating the credibility of this page’s content while remaining readable and explanatory. However, we discovered that informal care partners ranked the clarity of both sample passages lower than formal care partners did. Further, some phrases highlighted as easy to understand by formal care partners were highlighted as confusing by informal care partners, including “evidence-informed” and “non-pharmacological approaches.” These phrases signalled values alignment for health care providers, increasing the Toolkit’s credibility in their eyes, but made informal care partners feel that the content was not meant for them, increasing alienation. While we revised the content based on user comprehension, we aimed to keep the tone consistent based on initial user perception.

Regarding navigation and functional usability, the SUS results in Phases One and Three provided an important bird’s-eye view of how the website as a whole was functioning. Overall scores at both time one and time two were well above the acceptability benchmark score of 68 (Hyzy et al., 2022). The divergence in usability was notable when comparing the time one SUS scores of formal care partners (grade A, good range) and informal care partners (grade C, below average range). In the other task-specific usability measures, formal care partners also tended to be more persistent and better able to work out how to complete tasks, as reflected in higher SEQ scores and task completion rates. Interestingly, the task of searching for a resource in the collection had the lowest completion rate, took the longest time, and had the lowest SEQ scores for both groups. These challenges were more pronounced among informal care partners and older adults. The low scores for informal care partners and older adults, and the difference between our two user groups in their ability to use the website for its intended purpose, necessitated changes to the design and functionality of the website before launch.

The Phase One results drove the critical changes to the website made in Phase Two, with the aim of better supporting informal care partner and older adult users. Our changes focused on reducing information overload and refining the search process, as these were the two most significant challenges encountered by informal care partner and older adult users (Ubels & Albrecht, 2024). A lack of visual feedback posed barriers for informal care partner and older adult users when searching, leaving them confused about how to proceed, with some abandoning the assigned task. The gap between our stated purpose of reducing information overload by curating resources and actual user perceptions of information overload led us to recognize that we had not adequately integrated this consideration into the function of the system itself.

Many participants encountered challenges in effectively using the search system in Phase One. There appeared to be a missing intermediary step between deciding to search and engaging with results from the collection. The website design changes we completed are listed in Table 10 in priority order; many relate to this central challenge.

Table 10

Design Changes Made in Response to Problems Identified in Usability Testing

| Item | Solution(s) |
| --- | --- |
| 1. | Introduced a search page at the beginning of search with search bar, and exploration by topics |
| 2. | Displayed results in single column, table-format so there is clear visual grouping |
| 3. | Removed redundant filter categories. |
| 4. | Introduced a loading state, where screen lightens to indicate system is retrieving results |
| 5. | Introduced search button |
| 6. | Bug fix |
| 7. | Reduced space between text boxes to signal more content |
| 8. | Introduced responsiveness for iPad users |
| 9. | Removed placeholder images from resource details pages |

Limitations

Given the tight project timeline and limited resources, we were unable to optimize the content and design of the Behaviours in Dementia Toolkit for the unique needs of people living with dementia (Schnelli et al., 2020). Future funding could support examining the specific needs and preferences of this important user group and incorporating features to support accessibility and ease of use. The Phase One individual structured interviews gave us deep insight into user behaviour in the prototype website, and Phase Three was limited by not including this approach to data collection. A larger sample size would also have strengthened the validity of our research findings. However, we argue that the improvements in SUS scores, particularly for informal care partners and older adults, indicate the pragmatic value of our study and demonstrate the potential benefits of similar methods in comparable online library projects.

Conclusion

The pragmatic HCD methods described in this study helped us better address the needs and preferences of two diverse user groups for a digital health information library. Despite our limited timeline for developing, designing, and launching the Behaviours in Dementia Toolkit, we were able to act on findings from our usability testing to change our website and library design in several ways that improved its usability among formal and informal care partners and older adults. Jargon, information overload, and search flow were the areas that required the most attention. This study highlighted how selective use of HCD techniques that are pragmatic to implement (such as the SUS and SEQ) can meaningfully improve the content tone, navigation, and functional usability of an interactive health information system. Other projects concerned with facilitating access to health information among different user groups may wish to integrate these usability methods at the outset of their project planning, given the practical, actionable insights they can provide.

Author Contributions

Nick Ubels: Conceptualization, Formal analysis, Investigation, Methodology, Supervision, Writing – original draft, Writing – review & editing

Lauren Albrecht: Conceptualization, Methodology, Project administration, Supervision, Writing – review & editing

Woon Chee Koh: Conceptualization, Formal analysis, Investigation, Methodology, Writing – original draft

David Conn: Funding acquisition, Project administration, Writing – review & editing

References

Albrecht, L., Ubels, N., Martinussen, B., Naglie, G., Rapoport, M., Hatch, S., Seitz, D., Checkland, C., & Conn, D. (2025). The Behaviours in Dementia Toolkit: A descriptive study on the reach and early impact of a digital health resource library about dementia-related mood and behaviour changes. Geriatrics (Basel, Switzerland), 10(3), 79. https://doi.org/10.3390/geriatrics10030079

Allison, R., Hayes, C., McNulty, C., & Young, V. A. (2019). Comprehensive framework to evaluate websites: Literature review and development of GoodWeb. JMIR Formative Research, 3(4). https://doi.org/10.2196/14372

Barbara, A. M., Dobbins, M., Haynes, R. B., Iorio, A., Lavis, J. N., Raina, P., & Levinson, A. J. (2016). The McMaster Optimal Aging Portal: Usability evaluation of a unique evidence-based health information website. JMIR Human Factors, 3(1), e4800. https://doi.org/10.2196/humanfactors.4800

Brett, K. R., Lierman, A., & Turner, C. (2016). Lessons learned: A Primo usability study. Information Technology and Libraries, 35(1), 7–25. https://doi.org/10.6017/ital.v35i1.8965

Brooke, J. (1986). SUS ‘quick and dirty’ usability scale. In P. W. Jordan, B. Thomas, B. A. Weerdmeester, & A. L. McClelland (Eds.), Usability evaluation in industry. Taylor and Francis.

Burgdorf, J. G., Reckrey, J., & Russell, D. (2023). “Care for me, too”: A novel framework for improved communication and support between dementia caregivers and the home health care team. The Gerontologist, 63(5), 874–886. https://doi.org/10.1093/geront/gnac165

Canadian Coalition for Seniors’ Mental Health (CCSMH). (2024, March). Canadian clinical practice guidelines for assessing and managing behavioural and psychological symptoms of dementia (BPSD). https://ccsmh.ca/wp-content/uploads/2024/03/CCSMH-BPSD-Clinical-Guidelines_FINAL_March-2024.pdf

Cerejeira, J., Lagarto, L., & Mukaetova-Ladinska, E. B. (2012). Behavioral and psychological symptoms of dementia. Frontiers in Neurology, 3, 73. https://doi.org/10.3389/fneur.2012.00073

Denton, A. H., Moody, D. A., & Bennett, J. C. (2016). Usability testing as a method to refine a health sciences library website. Medical Reference Services Quarterly, 35(1), 1–15. https://doi.org/10.1080/02763869.2016.1117280

Emde, J. Z., Morris, S. E., & Claassen-Wilson, M. (2009). Testing an academic library website for usability with faculty and graduate students. Evidence Based Library and Information Practice, 4(4), 24–36. https://doi.org/10.18438/B8TK7Q

Gale, P. (2014, September 2). A simple technique for evaluating content. User Research in Government UK. https://userresearch.blog.gov.uk/2014/09/02/a-simple-technique-for-evaluating-content/

Hampshire, N., Califano, G., & Spinks, D. (2022). Reaction card method. Mastering collaboration in a product team (pp. 94–95). Apress. https://doi.org/10.1007/978-1-4842-8254-0_47

Harte, R., Glynn, L., Rodríguez-Molinero, A., Baker, P., Scharf, T., Quinlan, L., & ÓLaighin, G. (2017). A human-centered design methodology to enhance the usability, human factors, and user experience of connected health systems: A three-phase methodology. JMIR Human Factors, 4(1). https://doi.org/10.2196/humanfactors.5443

Hassan, A. (2020). Challenges and recommendations for the deployment of information and communication technology solutions for informal caregivers: Scoping review. JMIR Aging, 3(2), e20310. https://aging.jmir.org/2020/2/e20310

Hill, J., Brown, J., Campbell, N., & Holden, R. (2021). Usability-in-place—Remote usability testing methods for homebound older adults: Rapid literature review. JMIR Formative Research, 5(11). https://doi.org/10.2196/26181

Hyzy, M., Bond, R., Mulvenna, M., Bai, L., Dix, A., Leigh, S., & Hunt, S. (2022). System Usability Scale benchmarking for digital health apps: Meta-analysis. JMIR mHealth and uHealth, 10(8). https://mhealth.jmir.org/2022/8/e37290

Isik, A. T., Soysal, P., Solmi, M., & Veronese, N. (2018). Bidirectional relationship between caregiver burden and neuropsychiatric symptoms in patients with Alzheimer’s disease: A narrative review. International Journal of Geriatric Psychiatry, 34(9), 1326–1334. https://doi.org/10.1002/gps.4965

ISO. (2018). ISO 9241-11:2018(en), Ergonomics of human-system interaction—Part 11: Usability: Definitions and concepts. https://www.iso.org/obp/ui/#iso:std:iso:9241:-11:ed-2:v1:en

ISO. (2019). ISO 9241-210:2019(en), Ergonomics of human-system interaction. Part 210: Human-centred design for interactive systems. https://www.iso.org/standard/77520.html

Jagoda, T., Dharmaratne, S., & Rathnayake, S. (2023). Informal carers’ information needs in managing behavioural and psychological symptoms of people with dementia and related mHealth applications: A systematic integrative review to inform the design of an mHealth application. BMJ Open, 13, e069378. https://doi.org/10.1136/bmjopen-2022-069378

Jilani, M. A., Sheikh, A., Shah, F., & Saqlain, S. M. (2025). Usability evaluation of academic library websites: A systematic literature review. Information Discovery and Delivery, 54(1), 50–70. https://doi.org/10.1108/IDD-09-2024-0144

Kales, H. C., Gitlin, L. N., & Lyketsos, C. G. (2015). Assessment and management of behavioural and psychological symptoms of dementia. British Medical Journal, 350, h369. https://doi.org/10.1136/bmj.h369

Kaplan, K. (2023, February 12). When to use empathy maps: 3 options. NNGroup. https://www.nngroup.com/articles/using-empathy-maps/

Kous, K., Pušnik, M., Heričko, M., & Polančič, G. (2018). Usability evaluation of a library website with different end user groups. Journal of Librarianship and Information Science, 52(1), 75–90. https://doi.org/10.1177/0961000618773133

Lewis, J., & Sauro, J. (2018). Item benchmarks for the System Usability Scale. Journal of User Experience, 13(5), 158–167. https://uxpajournal.org/item-benchmarks-system-usability-scale-sus/

Mutatina, B., Basaza, R., Sewankambo, N. K., & Lavis, J. N. (2019). Evaluating user experiences of a clearing house for health policy and systems. Health Information and Libraries Journal, 36(2), 168–178. https://doi.org/10.1111/hir.12257

Neilson, C., & Wilson, V. (2011). “We want it now and we want it easy”: Usability testing of an online health library for healthcare practitioners. Journal of the Canadian Health Libraries Association, 32, 51–59. https://doi.org/10.5596/c11-024

Nielsen, J. (2001, January 20). Usability metrics. NNGroup. https://www.nngroup.com/articles/usability-metrics

Nimmanterdwong, Z., Boonviriya, S., & Tangkijvanich, P. (2022). Human-centered design of mobile health apps for older adults: Systematic review and narrative synthesis. JMIR mHealth and uHealth, 10(1). https://doi.org/10.2196/29512

Oakley-Girvan, I., Davis, S. W., Kurian, A., Rosas, L. G., Daniels, J., Palesh, O. G., Mesia, R. J., Kamal, A. H., Longmire, M., & Divi, V. (2021). Development of a mobile health app (TOGETHERCare) to reduce care partner burden: Product design study. JMIR Formative Research, 5(8). https://doi.org/10.2196/22608

Okhovati, M., Karami, F., & Khajouei, R. (2017). Exploring the usability of the central library websites of medical sciences universities. Journal of Librarianship and Information Science, 49(3), 246–255. https://doi.org/10.1177/0961000616650932

Polenick, C. A., Struble, L. M., Stanislawski, B., Broderick, B., Gitlin, L. N., & Kales, H. C. (2018). ‘The filter is kind of broken’: Family caregivers’ attributions about behavioural and psychological symptoms of dementia. The American Journal of Geriatric Psychiatry, 26(5), 548–556. https://doi.org/10.1016/j.jagp.2017.12.004

Poll, R. (2007). Evaluating the library website: Statistics and quality measures. Quality Issues in Libraries, 74. http://www.ifla.org/iv/ifla73/index.htm

Poncette, A. K., Mosch, L., Stablo, L., Spies, C., Schieler, M., Weber-Carstens, S., Feufel, M., & Balzer, F. (2021). Human-centered design with usability evaluation of a remote patient monitoring system for intensive care medicine: A mixed-methods study. JMIR Human Factors, 9(10). https://doi.org/10.2196/30655

Reeder, B., Le, T., Thompson, H. J., & Demiris, G. (2013). Comparing information needs of health care providers and older adults: Findings from a wellness study. Studies in Health Technology and Informatics, 192, 18–22. https://pubmed.ncbi.nlm.nih.gov/23920507

Rosala, M. (2022, August 17). How to analyze qualitative data from UX research: Thematic analysis. NNGroup. https://www.nngroup.com/articles/thematic-analysis/

Rutkowski, R. A., Ponnala, S., Younan, L., Weiler, D. T., Bykovskyi, A. G., & Werner, N. E. (2021). A process-based approach to exploring the information behavior of informal caregivers of people living with dementia. International Journal of Medical Informatics, 145, 104341. https://doi.org/10.1016/j.ijmedinf.2020.104341

Sauro, J. (2010, March 2). If you could only ask one question, use this one. MeasuringU. https://measuringu.com/single-question

Sauro, J. (2011). Measuring usability with the System Usability Scale (SUS). MeasuringU. https://measuringu.com/sus/

Schmidt, M., Earnshaw, Y., Tawfik, A. A., & Jahnke, I. (2020). Methods of user centered design and evaluation for learning designers. In M. Schmidt, A. A. Tawfik, I. Jahnke, & Y. Earnshaw (Eds.), Learner and user experience research: An introduction for the field of learning design and technology. EdTech Books.

Schnelli, A., Hirt, J., & Zeller, A. (2020). Persons with dementia as Internet users: What are their needs? A qualitative study. Journal of Clinical Nursing, 30(5-6), 849–860. https://doi.org/10.1111/jocn.15629

Silva, A., Caravau, H., Martins, A., Almeida, A., Silva, T., Ribeiro, Ó., Santinha, G., & Rocha, N. (2021). Procedures of user-centered usability assessment for digital solutions: Scoping review of reviews reporting on digital solutions relevant for older adults. JMIR Human Factors, 8(1). https://doi.org/10.2196/22774

Steinberg, M., Shao, H., Zandi, P., Lyketsos, C. G., Welsh-Bohmer, K. A., Norton, M. C., Breitner, J. C. S., Steffens, D. C., & Tschanz, J. T. (2008). Point and 5-year period prevalence of neuropsychiatric symptoms in dementia: The Cache County study. International Journal of Geriatric Psychiatry, 23(2), 170–177. https://doi.org/10.1002/gps.1858

Statistics Canada. (2021, December 3). How have Canadians been using the Internet during the COVID-19 pandemic? https://www.statcan.gc.ca/o1/en/plus/198-how-have-canadians-been-using-internet-during-covid-19-pandemic

Taylor, H. A., Sullivan, D., Mullen, C., & Johnson, C. M. (2011). Implementation of a user-centered framework in the development of a web-based health information database and call center. Journal of Biomedical Informatics, 44(5), 897–908. https://doi.org/10.1016/j.jbi.2011.03.001

Ubels, N., & Albrecht, L. (2024). Evidence-based principles to accelerate health information flow and uptake among older adults. Evidence Based Library and Information Practice, 19(2), 109–118. https://doi.org/10.18438/eblip30529

Ubels, N., Tan, L., Albrecht, L., & Long, A. (2024). Developing person-centered metadata: A case study of the Behaviours in Dementia Toolkit. Knowledge Organization, 51(7). https://doi.org/10.5771/0943-7444-2024-7-478

van den Haak, M., & van Hooijdonk, C. (2010). Evaluating consumer health information websites: The importance of collecting observational, user-driven data. 2010 IEEE International Professional Communication Conference, 333–338. https://doi.org/10.1109/IPCC.2010.5530031

van Velsen, L., Ludden, G., & Grünloh, C. (2022). The limitations of user-and human-centered design in an eHealth context and how to move beyond them. Journal of Medical Internet Research, 24(10). https://doi.org/10.2196/37341

Wilson, J., Heinsch, M., Betts, D., Booth, D., & Kay-Lambkin, F. (2021). Barriers and facilitators to the use of e-health by older adults: A scoping review. BMC Public Health, 21(1), 1556. https://doi.org/10.1186/s12889-021-11623-w

Wittenberg, E., Kerr, A. M., & Goldsmith, J. (2020). Exploring family caregiver communication difficulties and caregiver quality of life and anxiety. American Journal of Hospice and Palliative Medicine, 38(2), 147–153. https://doi.org/10.1177/1049909120935371

World Health Organization. (2023, March 31). Dementia factsheet. https://www.who.int/news-room/fact-sheets/detail/dementia

Ye, B., Chu, C. H., Bayat, S., Babineau, J., How, T. V., & Mihailidis, A. (2023). Researched apps used in dementia care for people living with dementia and their informal caregivers: Systematic review on app features, security, and usability. Journal of Medical Internet Research, 25, e46188. https://doi.org/10.2196/46188

Appendix A

Phase One Electronic Survey Questionnaire

| No. | Question |
| --- | --- |
| F1 | Title |
| F2a | What is the purpose of this study? |
| F2b | I have read and understand the information above. I am eighteen or older and I am willing to participate in this survey. |
| | Yes |
| | No |
| S1 | What is your role? Please select all that apply: |
| | Older adult |
| | Care partner of someone living with dementia |
| | Health care provider |
| | Researcher |
| | Educator |
| | Knowledge broker |
| | Other - please specify |
| E1a | What is your profession? Please select all that apply. |
| | Family physician |
| | Nurse practitioner |
| | Geriatrician |
| | Psychiatrist |
| | Nurse (RN, LPN) |
| | Health care aide |
| | Facility manager |
| | Allied health professional |
| | Other - please specify |
| E1b | Have you supported or treated someone experiencing changes to mood and behaviour due to dementia? |
| | Yes |
| | No |
| | Uncertain |
| E2 | What is or was your relationship to the person with dementia who you are or have been a care partner for? |
| | Partner |
| | Child |
| | Grandchild |
| | Other family member |
| | Friend |
| | Other relationship - please specify |
| | Prefer not to say |
| E2a | Did you live with the person whose care you are or were involved in? |
| | Yes |
| | No |
| | Prefer not to say |
| E3 | Please select the dementia care setting(s) you have experience with. |
| | Community & home care |
| | Acute care |
| | Assisted living |
| | Care home or residential care |
| | Other - please specify |
| | Prefer not to say |
| S2 | What is your age group? |
| | 18-34 |
| | 35-49 |
| | 50-64 |
| | 65-74 |
| | 75-84 |
| | 85+ |
| S5 | Which province, territory, or country do you currently live in? |
| | Alberta |
| | British Columbia |
| | Manitoba |
| | New Brunswick |
| | Newfoundland and Labrador |
| | Northwest Territories |
| | Nova Scotia |
| | Nunavut |
| | Ontario |
| | Prince Edward Island |
| | Quebec |
| | Saskatchewan |
| | Yukon |
| | Outside of Canada - please specify |
| S6a | Please indicate your agreement with the following statements about your use of technology. |
| | I am comfortable with using technology on a daily basis. |
| | Strongly disagree |
| | Somewhat disagree |
| | Neither agree nor disagree |
| | Somewhat agree |
| | Strongly agree |
| S6b | I use various digital devices like smart phones, tablets, and laptops frequently. |
| | Strongly disagree |
| | Somewhat disagree |
| | Neither agree nor disagree |
| | Somewhat agree |
| | Strongly agree |
| S6c | I have experience with a variety of software applications, like web browsers, word processors, and social media. |
| | Strongly disagree |
| | Somewhat disagree |
| | Neither agree nor disagree |
| | Somewhat agree |
| | Strongly agree |
| C1 | Please spend a moment reading the website homepage content below: |
| | Welcome |
| C1a | Select up to five words that describe the content above. |
| | Academic |
| | Authoritative |
| | Caring |
| | Casual |
| | Cheerful |
| | Clinical |
| | Coarse |
| | Complicated |
| | Conservative |
| | Conversational |
| | Difficult |
| | Dry |
| | Edgy |
| | Enthusiastic |
| | Formal |
| | Frank |
| | Friendly |
| | Fun |
| | Funny |
| | Humorous |
| | Jargony |
| | Passionate |
| | Playful |
| | Professional |
| | Provocative |
| | Quirky |
| | Respectful |
| | Romantic |
| | Sarcastic |
| | Serious |
| | Smart |
| | Snarky |
| | Sympathetic |
| | Trendy |
| | Trustworthy |
| | Unapologetic |
| | Upbeat |
| | Inclusive |
| | Informative |
| | Irreverent |
| | Matter-of-fact |
| | Nostalgic |
| | Welcoming |
| | Witty |
| C1b | How would you rate the friendliness of the content? |
| | Not at all friendly |
| | Not very friendly |
| | Neutral |
| | Somewhat friendly |
| | Very friendly |
| C1c | How would you rate the trustworthiness of the content? |
| | Not at all trustworthy |
| | Not very trustworthy |
| | Neutral |
| | Somewhat trustworthy |
| | Very trustworthy |
| C1d | If this content were to be published to a website, how likely would you recommend this website to a friend/colleague? |
| | Not at all likely |
| | Not very likely |
| | Neutral |
| | Somewhat likely |
| | Very likely |
| C2 | Please spend a moment reading the website content below: |
| | Treatments to reduce behaviours in dementia |
| C2a | Select up to five words that describe the content above. |
| | Academic |
| | Authoritative |
| | Caring |
| | Casual |
| | Cheerful |
| | Clinical |
| | Coarse |
| | Complicated |
| | Conservative |
| | Conversational |
| | Difficult |
| | Dry |
| | Edgy |
| | Enthusiastic |
| | Formal |
| | Frank |
| | Friendly |
| | Fun |
| | Funny |
| | Humorous |
| | Jargony |
| | Passionate |
| | Playful |
| | Professional |
| | Provocative |
| | Quirky |
| | Respectful |
| | Romantic |
| | Sarcastic |
| | Serious |
| | Smart |
| | Snarky |
| | Sympathetic |
| | Trendy |
| | Trustworthy |
| | Unapologetic |
| | Upbeat |
| | Inclusive |
| | Informative |
| | Irreverent |
| | Matter-of-fact |
| | Nostalgic |
| | Welcoming |
| | Witty |
| C2b | How would you rate the friendliness of the content? |
| | Not at all friendly |
| | Not very friendly |
| | Neutral |
| | Somewhat friendly |
| | Very friendly |
| C2c | How would you rate the trustworthiness of the content? |
| | Not at all trustworthy |
| | Not very trustworthy |
| | Neutral |
| | Somewhat trustworthy |
| | Very trustworthy |
| C2d | If this content were to be published to a website, how likely would you recommend this website to a friend/colleague? |
| | Not at all likely |
| | Not very likely |
| | Neutral |
| | Somewhat likely |
| | Very likely |
| | Thank you for your time. |

Appendix B

Phase One Online Interview Script

|

Introduction (10min) |

|

Hello [PARTICIPANT] |

|

Did you have a chance to read our

informed consent form? |

|

Before we proceed, may I get your verbal consent to continue.

[Notetaker: Note name, date, and time in consent log document]

|

|

Content Evaluation (15min) |

|

[Moderator to share screen using

MIRO] |

|

Great, let's

start our first task. This is the prompt: |

|

[Health care providers] |

|

[Non-clinical users] |

|

[Task 1A on MIRO: Moderator to share

screen, adjust it properly] |

|

We will give some time for the notetaker to take a screenshot. [Notetaker to take screenshot] |

|

On a scale of 1 to 5 (1 = not clear at all, 5 = very clear), how would you rate the clarity of the content? |

|

Why did you mark this word as 'red'? |

|

Why did you mark this as 'green'? |

|

**

[If confused with words] What would be a more suitable word? |

|

Does this page provide too much, too little, or the right amount of information? Why? |

|

On a scale of 1 to 5 (1 = not friendly at all, 5 = very friendly), how would you rate the friendliness of the content? |

|

Why did you give that rating? |

|

On a scale of 1 to 5 (1 = not trustworthy at all, 5 = very trustworthy), how would you rate the trustworthiness of the content? |

|

Why did you give that rating? |

|

On a scale of 1 to 5 (1 = not likely, 5 = very likely), how likely are you to recommend this website to your friend/colleague [moderator to note their participant's role]? |

|

Why did you give that rating? |

|

Let's proceed to the second task. |

|

[Task 2A on MIRO, notetaker to

record the content] |

|

On a scale of 1 to 5 (1 = not clear at all, 5 = very clear), how would you rate the clarity of the content? |

|

Why did you mark this word as 'red'? |

|

Why did you mark this as 'green'? |

|

"**

[If confused with words] What would be a more suitable word? |

|

Does this page provide too much, too little, or the right amount of information? Why? |

|

On a scale of 1 to 5 (1 = not friendly at all, 5 = very friendly), how would you rate the friendliness of the content? |

|

Why did you give that rating? |

|

On a scale of 1 to 5 (1 = not trustworthy at all, 5 = very trustworthy), how would you rate the trustworthiness of the content? |

|

Why did you give that rating? |

|

On a scale of 1 to 5 (1 = not likely, 5 = very likely), how likely are you to recommend this website to your friend/colleague [moderator to note their participant's role]? |

|

Why did you give that rating? |

|

Usability evaluation (20min) |

|

[Moderator to allow share screen in settings] |

|

Task 1: Navigation on the homepage [Moderator to start the lap button for each task] |

|

Spend a few moments to browse through the page. |

|

How would you proceed? [Moderator to take notes - behaviors of the users. E.g., Know where to click to find filters, under which dropdown to select based on the prompt] |

|

On a scale of 1 to 7 (1 = Very Difficult, 7 = Very Easy), how difficult or easy did you find this task? Why did you give this rating? |

|

* Follow up questions: |

|

** What other information would you like to see on this page? |

|

** Noticed that your cursor moved to [X] location, could you tell me more? |

|

** [If they ask for help] What do you expect to happen? OR What do you think? |

|

Note: Is the user able to complete the task? Any errors encountered? |

|

Task 2: Using filters [Moderator to start the lap button for each task] |

|

You have been presented with a list of resources. You'd like to narrow your search by finding resources from Canada. |

|

How would you proceed? [Moderator to take notes - behaviors of the users. E.g., Know where to click to find filters, under which dropdown to select based on the prompt] [Moderator to stop the lap button for each task] |

|

On a scale of 1 to 7 (1 = Very Difficult, 7 = Very Easy), how difficult or easy did you find this task? Why did you give this rating? |

|

* Follow up questions: |

|

** What are your thoughts on our filter options? |

|

** What do you think the term "Setting" means? |

|

Note: Is the user able to complete the task? Any errors encountered? |

|

Task 3: Accessing a resource [Moderator to start the lap button for each task] |

|

After narrowing your search, you'd like to browse and access a resource to find out more about insomnia. |

|

Feel free to browse and choose a resource that interests you to proceed. [Moderator to take notes - behaviors of the users, do they spend time reading the resource card? Look of confusion?] [Moderator to stop the lap button for each task] |

|

On a scale of 1 to 7 (1 = Very Difficult, 7 = Very Easy), how difficult or easy did you find this task? Why did you give this rating? |

|

* Follow up questions: |

|

** What are your thoughts on the content on each resource page? |

|

*** Was it helpful? Why or why not? |

|

Note: Is the user able to complete the task? Any errors encountered? |

|

System Usability Scale (10min) |

|

Please spend 5 minutes filling in this form. https://ccsmhkt.yul1.qualtrics.com/jfe/preview/previewId/63125971-b65c-4c4f-b5fd-61aefe4fcf96/SV_9An14wLqPzN2Qpo?Q_CHL=preview&Q_SurveyVersionID=current |

|

1. I think that I would like to use this website frequently. |

|

2. I found the website unnecessarily complex. |

|

3. I thought the website was easy to use. |

|

4. I think that I would need the support of a technical person to be able to use this website. |

|

5. I found the various functions in this website were well integrated. |

|

6. I thought there was too much inconsistency in this website. |

|

7. I would imagine that most people would learn to use this website very quickly. |

|

8. I found the website very cumbersome to use. |

|

9. I felt very confident using the website. |

|

10. I needed to learn a lot of things before I could get going with this website. |

|

Wrap up (5min) |

|

[Optional, ask if there is time] If you could change one thing about the design of the website, what would it be and why? |

|

[Optional, ask if there is time] Is there any other feedback you'd like to give us about this website? |

|

That will be all for today. We will be offering $50 CAD to thank you for your time. Kara will be in touch to gather the information we need to send you your payment. We look forward to sharing the launched version of the website with you in January. |
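
For readers who wish to reuse the ten System Usability Scale statements listed in the guide above, the following is a minimal sketch of the conventional SUS scoring procedure. It assumes each statement is answered on a 5-point agreement scale (1 = strongly disagree, 5 = strongly agree), which is the standard SUS format but is not stated in the guide itself. The function name `sus_score` is hypothetical and does not describe the tooling used in this study.

```python
def sus_score(responses):
    """Return a 0-100 SUS score from ten responses, each an integer 1-5.

    Assumption: responses are in the order of the ten statements in the
    guide, with 1 = strongly disagree and 5 = strongly agree.
    """
    if len(responses) != 10:
        raise ValueError("SUS requires exactly ten responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")

    total = 0
    for position, response in enumerate(responses, start=1):
        if position % 2 == 1:
            # Odd-numbered statements are positively worded.
            total += response - 1
        else:
            # Even-numbered statements are negatively worded, so reverse them.
            total += 5 - response

    # The summed contributions range from 0 to 40; scale to 0-100.
    return total * 2.5


if __name__ == "__main__":
    # Hypothetical participant, not data from the study.
    print(sus_score([4, 2, 4, 2, 4, 2, 4, 2, 4, 2]))
```

Scoring each participant separately and then averaging across participants keeps individual variation visible before any summary figure is reported.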

Appendix C

Phase Three Electronic Survey Questionnaire
