Review Article

Examining the Meaning and Methodological Characteristics of the Systematized Review Label: A Scoping Review

Leyla Cabugos

Academic Services

Robert E. Kennedy Library

California Polytechnic State University

San Luis Obispo, California, United States of America

Email: lcabugos@calpoly.edu

Zahra Premji

Health Research Librarian

Advanced Research Services

University of Victoria Libraries

Victoria, British Columbia, Canada

Email: zahrapremji@uvic.ca

Received: 09 Apr. 2025 Accepted: 06 Nov. 2025

2026 Cabugos and Premji. This is an Open Access article distributed under the terms of the Creative Commons‐Attribution‐Noncommercial‐Share Alike License 4.0 International (http://creativecommons.org/licenses/by-nc-sa/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly attributed, not used for commercial purposes, and, if transformed, the resulting work is redistributed under the same or similar license to this one.

Data Availability: Premji, Z., & Cabugos, L. (2026). Supplemental data for the scoping review titled: Examining the meaning and methodological characteristics of the systematized review label: A scoping review. Borealis. https://doi.org/10.5683/SP3/YOGBXQ

DOI: 10.18438/eblip30757

Abstract

Objective – In 2009, a typology by Grant and Booth introduced the concept of a systematized review in which authors (typically students) selectively employ various elements of the systematic review process. As academic librarians who help research teams to select and conduct a variety of evidence synthesis types, we have fielded inquiries from teams interested in conducting systematized reviews for publication. While the typology is widely referenced, we were aware of no formal methodological guide to a systematized review type.

The objective of this scoping review is to identify and describe the extent of published systematized reviews, and to 1) identify and collate, where available, sources used for the conceptualization and conduct of the systematized reviews, 2) determine if explanations provided were based on constraints, and 3) describe common methodological characteristics.

Methods – We included articles titled as a systematized review that attempted the collocation and synthesis of literature and included an adequate description of their methodology. On September 1, 2023, we searched five sources: Google Scholar, Lens.org, Web of Science Core Collection, Scopus, and MEDLINE. We performed screening and data extraction in duplicate. Data extraction elements included common methodological characteristics relating to various steps of the evidence synthesis process. The primary analysis consisted of descriptive aggregate statistics and categorization of reasons for selecting the systematized review type.

Results – Our review found 171 systematized reviews that met inclusion criteria. These were published between 2013 and 2023, and the number has generally increased over time. Resource constraints were mentioned in only 32 included reviews, and only 19 had a single author. The methodological attributes of published systematized reviews vary widely. A small number (15) of reviews searched only one database or source, while the majority searched between 2 and 6 sources. The majority (134) provided no search details or a non-reproducible search strategy. Only 36 included reviews mentioned librarian involvement.

Conclusion – Librarians should stay abreast of emerging evidence synthesis practices and the context in which researchers operate. It is unclear why and how authors choose the systematized review type because most did not report a source for their conceptualization, nor justify their choice. The majority of published reviews did not connect their methodological departures to the kinds of resource constraints typically associated with student work. The expectation that the elements of the systematic review process that authors chose to incorporate should be conducted and reported according to standards was not widely met.

Librarians, as methodological guides, participants, or evaluators, can play a key role in reinforcing the expectations of this review type and the standards that should be met when publishing a systematized review. Publishers and consumers of systematized reviews are advised to consider how each modification of standard systematic review methodology may introduce bias and affect the value of the evidence presented in published systematized reviews.

Introduction

Synthesis of evidence can take many forms, ranging from well-established methodologies, such as systematic reviews or scoping reviews, to lesser-known types, such as rapid reviews and realist reviews. Many typologies of evidence syntheses exist, each of which may include a number of distinct review types ranging from a handful to, in one case, 48 types (Grant & Booth, 2009; Munn et al., 2018; Sutton et al., 2019). Arguably, the most robust current effort to develop and maintain a comprehensive taxonomy of evidence synthesis types, the Evidence Synthesis Taxonomy Initiative (ESTI), identified a staggering 1,010 unique review types present in the literature (Munn & Pollock, 2024).

The systematized review is a type that was initially reported by Grant and Booth (2009) in their typology of reviews, characterized chiefly as a way for students to model their understanding of the systematic review process while modifying it to accommodate the needs and constraints of their academic program. This characterization matches what we have observed in our professional contexts. Given the attention this typology received, as evidenced by its more than 11,000 citations (Google Scholar, December 23, 2024), it stands to reason that review types mentioned within this typology have received significant exposure. Grant and Booth’s (2009) typology provides a basic description of each review type and its perceived strengths and weaknesses, but not enough detail to be used as a methodological guide. In the subsequent typology, Sutton et al. (2019) provided examples of each review type along with known official guidance or methodological guides, where available. Other than the characterization in Grant and Booth (2009), we were aware of no additional guidance documents for systematized reviews.

As academic librarians, we regularly help researchers, including students, identify and select the appropriate type of review for their topic and context. Discerning how to align the research question with the most appropriate review type is a core competency for librarians supporting or participating in evidence syntheses (Townsend et al., 2017). Tools specifically designed to provide guidance on selecting an appropriate review methodology, such as the Right Review tool (Amog et al., 2022), which covers 41 types of reviews, are frequently incorporated into library consultations and online resource guides. Although the Right Review tool does not include systematized reviews as a type, a growing number of such reviews are being published in the scholarly literature.

We were therefore interested in understanding what is being published as systematized reviews, what their methodological characteristics are, what documents authors cite for their methodology, and why authors choose this type of review. The answers to these questions may help librarians who support evidence synthesis teams to proactively address the factors that lead authors to pursue a systematized review or to label it as such. By examining researcher and publisher practices as reflected in published reviews, in the context of the most relevant standards, we aim to help librarians be better prepared for conversations with research teams who express interest in conducting, publishing, or using systematized reviews.

Review Objectives

The purpose of this scoping review is to describe the extent and state of systematized reviews published in the scholarly literature (Khalil et al., 2024) and to describe their methodological characteristics. Additionally, this study sought to identify and collate the reasons why researchers chose the systematized review.

Methods

We published our proposed methods in the form of a scoping review protocol (Premji & Cabugos, 2023). The exploratory nature of our research questions makes a scoping review an appropriate method for this research. Scoping reviews can be conducted to identify and map key characteristics related to a concept (Peters et al., 2020) and have been implemented in the past to characterize and describe methodological elements of a specific review methodology (Khalil et al., 2024). Below, we describe the final methods implemented in our study, making specific reference to any deviations from the published protocol.

This scoping review was conducted following the JBI scoping review methodology (Peters et al., 2020) and is reported according to the Preferred Reporting Items for Systematic Reviews and Meta-Analyses extension for Scoping Reviews (PRISMA-ScR) guideline (Tricco et al., 2018).

Eligibility Criteria

Population

In keeping with the Population Concept Context (PCC) framework, we have retained the Population heading for this element. However, in our review, the population is our sample, namely, published systematized reviews explicitly titled as such.

During the pilot screening stage, it became clear in some cases that the authors do not appear to have intended to conduct a systematized review, even when this term is used in the title. Because we are interested in the characteristics of systematized reviews as a specific review type, we added an exclusion criterion related to the intent to conduct a systematized review. We implemented this by excluding studies that did not use the term systematized review or its variants (with reference to their study) beyond the title or abstract. We interpreted the lack of use of the term specifying the type of review in the body of the article as a lack of awareness of a distinction between systematized reviews and other codified synthesis methods or traditional literature reviews. For the same reason, we also excluded reviews that did not describe any methodological departures from those of a systematic review.

Concept

The concept of interest in our scoping review is the methodological elements or characteristics typically found in evidence synthesis reviews, as selected from the PRISMA 2020 reporting guideline (Page et al., 2021) or the critical appraisal tool, AMSTAR-2 (Shea et al., 2017). Specifically, we extracted elements relating to the search, selection, data extraction, and risk of bias steps of the review process, as well as other characteristics, such as number of authors and the involvement of other specialists, such as librarians. Since we were interested in extracting details relating to the methodology implemented in these reviews, we excluded reviews that did not provide a full description of the methodology for collocating and synthesizing existing literature for their review. Furthermore, we excluded reviews whose synthesis was primarily bibliometric.

Context

The context of our review is global. We did not limit the sample to systematized review publications from any specific region or from any discipline. We did not limit the searches by language filters and screened all articles (with the help of Google Translate, for articles written in a language other than English). Due to the inability of the author team to assess research in other languages, we did not extract data from non-English language articles, instead collating those that clearly met the inclusion criteria in a citation list that is included in the supplemental data.

Publication Characteristics

We included published systematized reviews. The reviews must have been published in a scholarly journal or conference proceedings. We excluded theses, dissertations, preprints, and publications from journals that only published graduate student work from a given institution. We added this additional exclusion criterion due to the significant number of theses and articles from student journals noted during the screening process. We made this modification to our criteria to explore the attributes of systematized reviews that editors and peer reviewers accept for publication.

Information Sources and Search Strategies

To locate systematized reviews, we searched five sources:

● Web of Science Core Collection (Clarivate), specifically, Science Citation Index, Social Sciences Citation Index, Arts & Humanities Citation Index, and Emerging Sources Citation Index

● Scopus (Elsevier)

● MEDLINE (Ovid)

● Google Scholar (via Publish or Perish)

● Lens.org

We used the search strategies published within our protocol and conducted the final searches on September 1, 2023 (Table 1). We did not conduct any additional supplementary searches in the grey literature or via citation chaining.

Table 1

Search Strategies and Number of Results as Run on September 1, 2023

| Source | Search query | Results |
| --- | --- | --- |
| Web of Science Collection | TI=( ("systemati?ed") NEAR/3 ("review") ) | 168 |
| Scopus (Elsevier) | TITLE (("systematized" OR "systematised") W/3 ("review")) | 201 |
| MEDLINE (Ovid) | (("systemati?ed") adj4 (review)).ti | 106 |
| Google Scholar (via Publish or Perish) | Title words field: ("systematized review" OR "systematized * review" OR "systematized * * review" OR "systematized * * * review" OR "systematised review" OR "systematised * review" OR "systematised * * review" OR "systematised * * * review") | 525 |
| Lens.org | title:(( "systematized review" ~3 )) OR title:(( "systematised review" ~3 )) | 370 |
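The proximity queries in Table 1 all express roughly the same idea: a title in which "systematized" or "systematised" appears within a few words of "review". As a rough illustration only, the Python sketch below approximates that logic with a regular expression. The function name `looks_like_systematized_title` and the three-word window are assumptions chosen for illustration; each database applies its own proximity semantics, which this sketch does not reproduce exactly.

```python
import re

# Up to three intervening words between the two terms, in either order.
# This window is an illustrative assumption, not any database's exact rule.
_GAP = r"(?:\W+\w+){0,3}\W+"
_PATTERN = re.compile(
    rf"\bsystemati[sz]ed{_GAP}reviews?\b|\breviews?{_GAP}systemati[sz]ed\b",
    re.IGNORECASE,
)


def looks_like_systematized_title(title: str) -> bool:
    """Return True if the title pairs 'systematized/systematised' with 'review'."""
    return _PATTERN.search(title) is not None
```

A title such as "A systematised literature review of simulation" would match, whereas "A systematic review of burnout" would not, because the pattern requires the "-ized" or "-ised" form.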

The search results were exported in RIS format and deduplicated in Covidence software.
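Deduplication of exported records generally relies on comparing normalized identifiers. The Python sketch below illustrates one simple key-based approach. It is not a description of Covidence's matching rules, which are not detailed here, and the record fields (`doi`, `title`, `year`) and function names are assumptions for illustration.

```python
import re


def dedup_key(record: dict) -> str:
    """Build a comparison key: prefer the DOI, otherwise a normalized title plus year."""
    doi = (record.get("doi") or "").strip().lower()
    if doi:
        return f"doi:{doi}"
    # Lowercase the title and collapse punctuation and whitespace runs to single spaces.
    title = re.sub(r"[^a-z0-9]+", " ", (record.get("title") or "").lower()).strip()
    return f"title:{title}|{record.get('year', '')}"


def deduplicate(records: list[dict]) -> list[dict]:
    """Keep the first record seen for each key, preserving input order."""
    seen: set[str] = set()
    unique = []
    for record in records:
        key = dedup_key(record)
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique
```

A key-based pass like this misses records whose metadata differ in substance (for example, one copy lacking a DOI), which is one reason duplicates can still surface during manual screening.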

Screening

We conducted a pilot title-abstract screening test using 50 random records in Excel (instead of the 25 originally planned). Following the pilot, each record was screened in Covidence in duplicate. We resolved conflicts via discussion and consensus. We retrieved the full-text articles of the records included after title-abstract screening and uploaded them to Covidence. We conducted full-text screening in duplicate and resolved conflicts through discussion and consensus. The implemented inclusion and exclusion criteria, including the reasons for exclusion at the full-text screening stage, are shown in Table 2.

Table 2

Inclusion/Exclusion Criteria as Implemented During the Screening Stages

| | Inclusion criteria | Exclusion criteria |
| --- | --- | --- |
| Population | Publications explicitly titled as a systematized review. | Publication not identified as a systematized review in the title. Reason for exclusion code: Not titled systematized review |
| | Additional mention of systematized review in the article as an indication of the intent to conduct a systematized review. | No mention of systematized review in the body of the article, indicating a lack of intent. Reason for exclusion code: No intent |
| Concept (primarily assessed at the full-text screening stage) | To complete data extraction, the publications must provide details of the methods used in the review, including at minimum: the search, selection, extraction methods and a synthesis that is not purely bibliometric in focus. | Systematized reviews that did not include a methods section or did not provide a basic description of the search, selection, and extraction steps of their review or reviews that appeared to be bibliometric or content analysis. Reason for exclusion code: Insufficient/lacking methods. Reason for exclusion code: Bibliometric or other focus |
| Context | Any geographic region and any language, as long as it is possible to paste the text into Google Translate to evaluate whether it is a systematized review. | Non-English systematized reviews were excluded at full-text screening, but have been collated and listed in the Appendix. Reason for exclusion code: Non-English systematized reviews |
| Publication characteristics | Published in a journal or as a complete conference proceeding paper. | Preprints, protocols, theses, self-published/archived documents, or articles from journals that publish only student work. Reason for exclusion code: Wrong format |

Extraction

We refined our data extraction template based on dual independent extraction of ten reviews. A full list of the data categories extracted can be found in the extraction instrument (provided in the Appendix). Data items are defined in a data dictionary provided in the supplemental data.

We completed all data extraction for this study, in duplicate, in Google Sheets. We resolved conflicts through discussion and consensus.

Data Analysis and Presentation

Analysis of the results was done with simple counts, percentages, and frequencies using pivot tables and formulas in Google Sheets. For a select number of data items, we performed further categorization or coding prior to analysis. In analyzing data items that referenced source documents, we excluded in-text citations that lacked a corresponding full citation in the reference list.

Results / Analyses

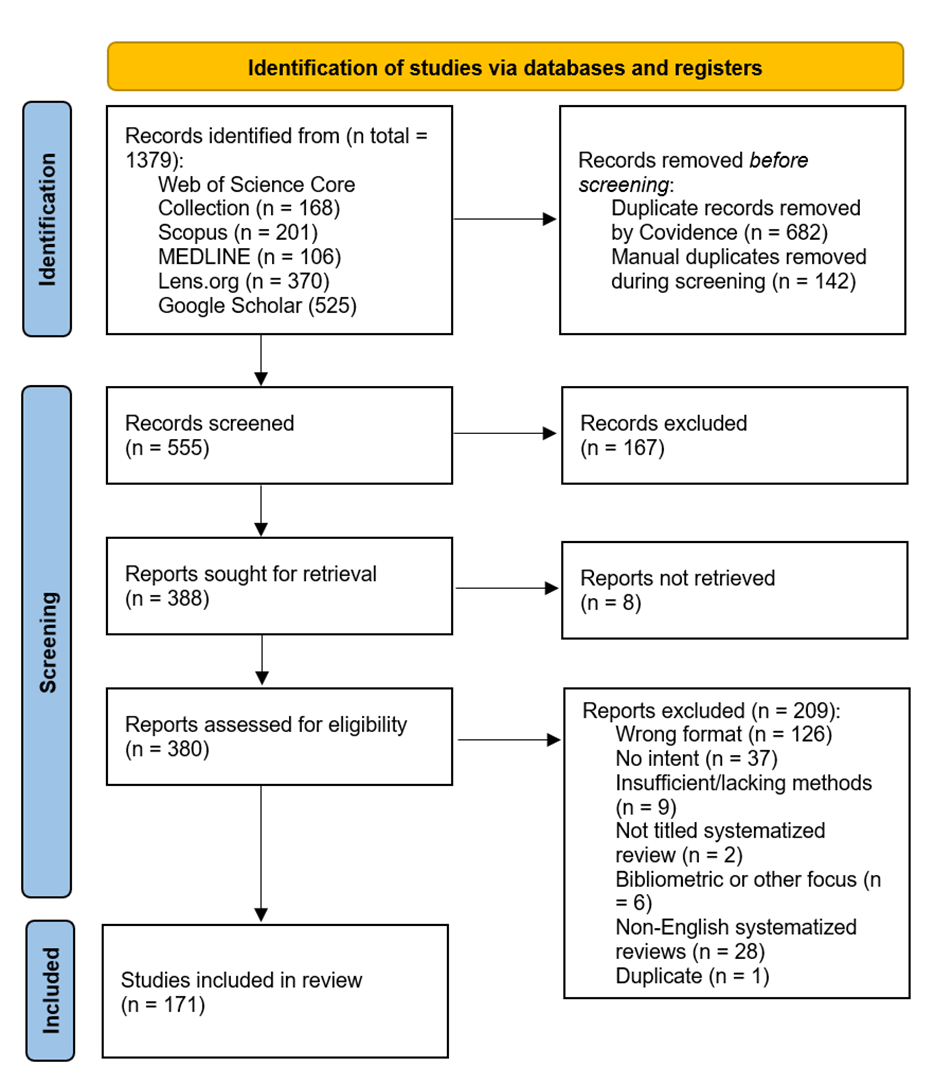

The search of five sources resulted in 1,379 records. Of these, 682 duplicates were automatically identified by Covidence. A further 142 duplicates were manually identified during screening and removed. The inter-rater agreement level from the pilot screening test of 50 random records was 96%. Both authors independently screened a total of 555 records using the title and abstract information in Covidence; 167 records were excluded during this stage of screening. The proportionate agreement for the title-abstract screening was 96.6%, and the Cohen’s Kappa reported by Covidence for this stage of screening was 0.918. We were unable to retrieve the full texts of 8 records. The full texts of the remaining 380 records were retrieved and assessed in duplicate. After evaluating eligibility, 171 systematized review publications were included. The proportionate agreement for the full-text screening was 88.5%, and the Cohen’s Kappa as reported by Covidence was 0.769.
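Proportionate agreement and Cohen's Kappa can diverge because Kappa discounts the agreement that would be expected by chance. The Python sketch below shows the standard two-rater calculation from a 2×2 table of include/exclude decisions. The function name and argument names are assumptions for illustration, and the counts in the usage note are hypothetical, since the underlying decision tables are not reported here.

```python
def agreement_stats(both_include: int, both_exclude: int,
                    only_a_includes: int, only_b_includes: int) -> tuple[float, float]:
    """Return (proportionate agreement, Cohen's kappa) for two screeners."""
    n = both_include + both_exclude + only_a_includes + only_b_includes
    if n == 0:
        raise ValueError("no screening decisions supplied")
    observed = (both_include + both_exclude) / n
    # Marginal include rates for each screener.
    a_inc = (both_include + only_a_includes) / n
    b_inc = (both_include + only_b_includes) / n
    # Agreement expected if the two screeners decided independently.
    expected = a_inc * b_inc + (1 - a_inc) * (1 - b_inc)
    if expected == 1:
        raise ValueError("kappa is undefined when chance agreement is 1")
    kappa = (observed - expected) / (1 - expected)
    return observed, kappa
```

With the hypothetical counts `agreement_stats(40, 50, 5, 5)`, observed agreement is 0.90 while Kappa is about 0.80, which shows how a high raw agreement can correspond to a noticeably lower chance-corrected value.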

A PRISMA flow diagram of the results from searching and screening is presented in Figure 1.

Figure 1

PRISMA flow diagram.
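The counts reported above can be cross-checked with simple subtraction. The Python sketch below walks through the stages using the figures stated in this section. The variable names are illustrative, and the full-text exclusion count is derived here rather than quoted from the text.

```python
# Counts as reported in the Results text.
records_identified = 1379
duplicates_auto = 682
duplicates_manual = 142
excluded_title_abstract = 167
not_retrieved = 8
included = 171

screened = records_identified - duplicates_auto - duplicates_manual
sought_full_text = screened - excluded_title_abstract
assessed_full_text = sought_full_text - not_retrieved
# Derived value (not quoted in the text): exclusions at full-text screening.
excluded_full_text = assessed_full_text - included

flow = {
    "screened": screened,
    "sought_full_text": sought_full_text,
    "assessed_full_text": assessed_full_text,
    "excluded_full_text": excluded_full_text,
}
```

The derived values for records screened and full texts assessed agree with the 555 and 380 reported in the text.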

Publication Characteristics

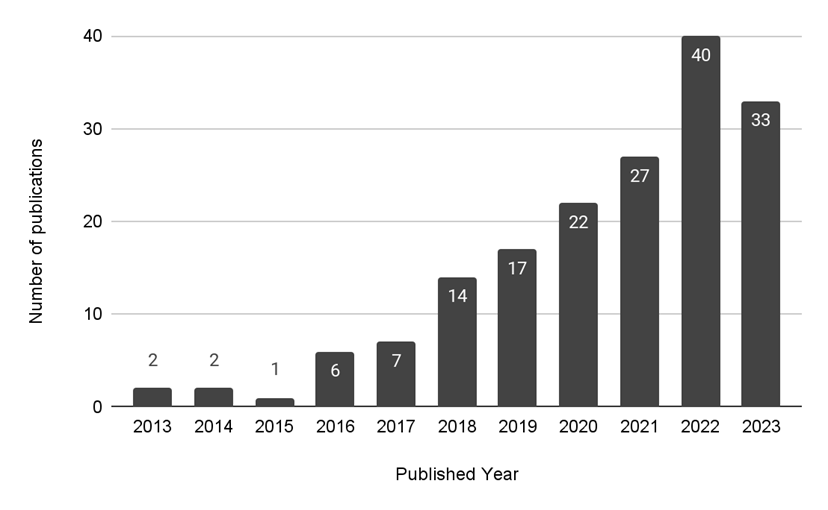

The included systematized reviews were published between 2013 and 2023, and the number of publications per year has generally increased over time (Figure 2).

Figure 2

Bar chart showing the number of included systematized reviews by publication year (N total = 171).

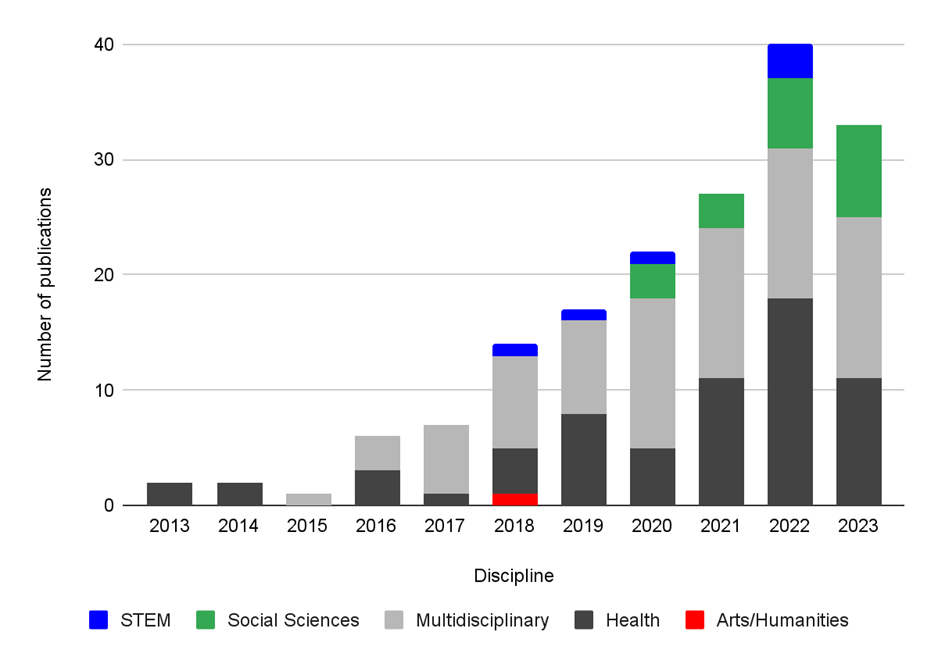

We characterized the discipline of the journal in which each included review was published. The greatest proportion of included reviews was categorized as multidisciplinary (n = 79, 46.2%), followed by Health (n = 65, 38.0%), Social Sciences (n = 20, 11.7%), STEM (n = 6, 3.5%), and Arts/Humanities (n = 1, 0.6%).
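Percentages of this kind follow directly from the category counts. The authors report using pivot tables and formulas in Google Sheets, so the Python sketch below is an equivalent illustration rather than their workflow. It converts the discipline counts above to percentages of the 171 included reviews; the function name is an assumption.

```python
discipline_counts = {
    "Multidisciplinary": 79,
    "Health": 65,
    "Social Sciences": 20,
    "STEM": 6,
    "Arts/Humanities": 1,
}


def to_percentages(counts: dict[str, int]) -> dict[str, float]:
    """Convert category counts to percentages of the total, rounded to one decimal."""
    total = sum(counts.values())
    return {name: round(100 * n / total, 1) for name, n in counts.items()}


percentages = to_percentages(discipline_counts)
```

The same helper could be applied to any of the other categorical data items, provided the denominator is the set of reviews to which the item applies.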

We analyzed the disciplines against publication year to identify any trends in uptake of systematized reviews in specific disciplines over time (Figure 3). The earliest systematized review publications in our included studies list, which were published in 2013 and 2014, were from health disciplines.

Figure 3

Breakdown of discipline of included studies by publication year (N total = 171).

Grant and Booth’s (2009) typology (hereafter referred to as “the typology”) contains the first and most widely referenced characterization of systematized reviews as a type, and therefore provides a framework for our analysis of the methodological characteristics of published systematized reviews.

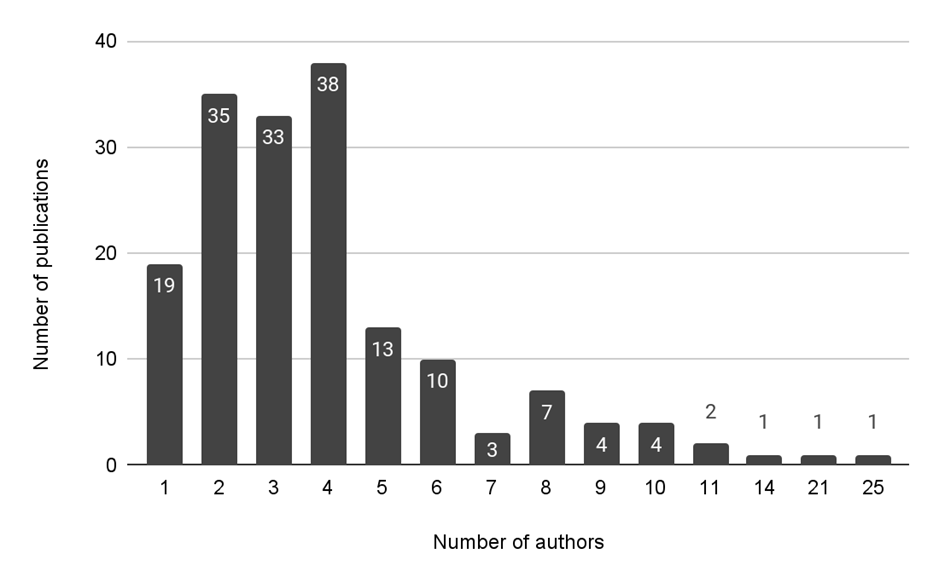

The availability of multiple reviewers is identified in the typology as a common constraint (typically faced by students) that motivates authors to pursue a systematized review. Thus, we were interested in the number of such reviews that have a single author. Figure 4 shows the number of reviews plotted against the number of authors listed on each included systematized review. Only 19 of the systematized reviews had a single author (11.1%). We did not code for whether the authors were students when the work was conducted. Most (106, 62%) of the reviews had between two and four authors.

Figure 4

Chart showing the count of included reviews by number of authors listed (N total = 171).

Inconsistent Terminology

Authors sometimes refer to their studies parenthetically as systematic reviews even when they include systematized review in the title (Grant & Booth, 2009). Noting this occurrence during screening, we became interested in how frequently authors of included reviews referred to their review as something other than a systematized review (e.g., systematic review or scoping review) in one part of their manuscript while still titling it a systematized review. We found 22 systematized reviews (12.9%) that referred to their review as another type of evidence synthesis review at least once in their manuscript, most frequently as a systematic review.

Conceptualization

We were interested to see what authors cited for the conceptualization, definition, or description of the systematized review type to gain insight into how the concept of systematized reviews is propagating. Of the 116 studies that included a reference for their conceptualization of systematized reviews, 114 cited a source that offers methodological guidance for some element of the review process, including choice of methodology. Grant and Booth (2009) was the most commonly cited source for conceptualization (n = 73). Eight reviews cited a published study. The types represented among these published studies were systematized review, systematic review, systematic mapping review, narrative review, primary research article, and a review method of the author’s own conception with systematic elements. Some reviews cited multiple sources in either or both of these categories, so the categories are not mutually exclusive.

Conducting Standards Cited

Given Grant and Booth’s (2009) characterization of the systematized review type as a modification that contains elements from a systematic review, we were interested in what guided authors’ conduct of the included reviews. Of the 54 (31.6%) included systematized reviews that cited a source of guidance for their overall methodological conduct, 52 cited a source that offers methodological guidance for some element of the review process, including selection of methodology. A further 3 cited published systematic reviews, which we assume were used as models. One included review cited both a methodological guidance document and a published review and is therefore counted in both categories. We classified the cited references into these broad categories because the multifaceted nature of many resource types would otherwise have resulted in almost as many categories as references. Various versions of the PRISMA statement, which are designed to guide reporting rather than conduct of the methodology, were nevertheless cited as a guide for overall conduct of the review. These include the 2009 PRISMA statement (11 citations), the 2020 PRISMA statement (7 citations), the 2009 PRISMA statement for health care interventions (4 citations), and the PRISMA-Protocol statement (3 citations). Grant and Booth (2009) was cited 10 times. Two other notable sources were a paper offering methodological guidance on systematic review conduct adapted for the field of engineering (Borrego et al., 2014; 7 citations) and three documents with a common author (Codina, 2018a, 2018b, 2020). Codina (2018a, 2020) offers guidance on the conduct of systematized reviews for social science research, and these two documents were collectively cited in three included reviews. Codina (2018b) offers guidance on searching and was cited in one included review.

Reporting Standards Cited

Versions of the PRISMA reporting standards were explicitly cited for reporting the entire review in 22 reviews (12.9%). An additional 62 reviews included a PRISMA flow diagram, but only 39 reviews (22.8%) cited a PRISMA document in association with the flow diagram and not for the rest of the review. Most (110 reviews, 64.3%) did not cite any reporting standards for guiding any element of the reporting in their review.

The various versions of the 2009 and 2020 PRISMA statements, PRISMA extension, or articles about PRISMA were the primary documents referenced as reporting guidance, as shown in Table 3.

Table 3

Documents Cited for the Reporting Guidance Within the Included Systematized Reviews

| Document cited | Number of reviews citing |
| --- | --- |
| 2020 PRISMA Statement | 7 |
| 2009 PRISMA Statement | 6 |
| 2009 PRISMA for Health Care Interventions | 4 |
| PRISMA-ScR | 3 |
| Vrabel (2015) | 1 |
| Urrútia & Bonfill (2010) | 1 |

Explanation for Choosing Systematized Review Label

We also extracted details on whether authors justified their choice to label their study a systematized review and whether they related it to some type of constraint mentioned in the typology, such as lack of time or resources. While many of the studies in our review included a description of the typical characteristics of systematized reviews, we only coded such a description as an explanation if the authors explicitly connected it to the conditions of their study. Fewer than a third (n = 47, 27.5%) of the included studies explained their choice to use the systematized review label in their review.

We further categorized what each explanation related to. The categories were not mutually exclusive, so a single review could be counted in multiple categories. Of the 47 reviews that explained their choice of review type, 32 mentioned resource constraints. Reviewer availability (such as a second screener) was the most frequently mentioned constraint (n = 25, 53.2%), and time (n = 6, 12.8%) was the least mentioned. Some reviews mentioned constraints but did not specify what those were (n = 7, 14.9%). A further 15 reviews (31.9%) stated reasons that were not resource based. These included the perceived suitability of the systematized review type to the study’s discipline or target corpus, or to the intended scope of the research question.

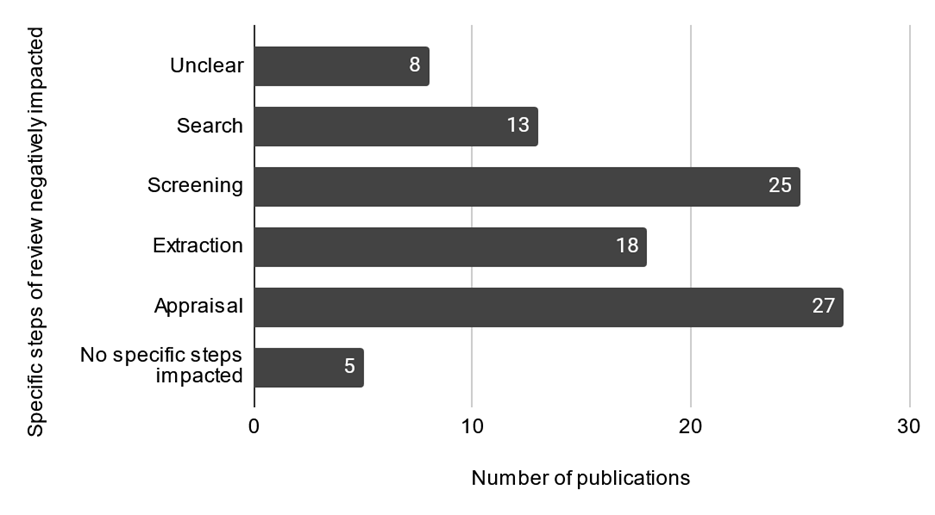

We then coded for all the steps of the review process that were affected by the stated constraint (Figure 5). We relied on author reporting of the steps impacted, except in the case of solo author publications where we could anticipate that the availability of a second screener and extractor would be impacted.

Figure 5

Steps of the review impacted by the modifications associated with the choice of the systematized review type (N total = 47 reviews that explained their choice of review type).

Protocols

The production of an independently verifiable research protocol prior to data collection is an expected step in codified evidence synthesis methodologies, including the systematic review. As this is an element of review quality (reflected in the AMSTAR-2 instrument), we wanted to examine its presence in systematized review implementation, even though this is not a step explicitly mentioned in the typology. The majority (n = 161, 94.2%) of included studies did not mention a protocol. Of the 10 reviews in which a protocol was mentioned, 3 (1.8%) linked to a published protocol, and another 2 (1.2%) mentioned a protocol in a manner that left its publication status unclear. Notably, 5 (2.9%) mentioned the absence or lack of a protocol, indicating awareness of this requirement for other evidence synthesis review types.

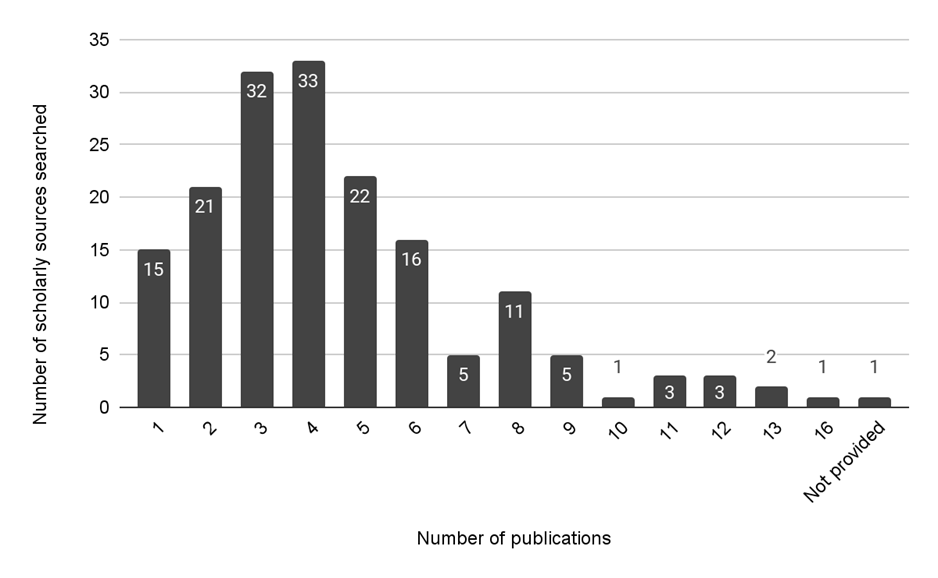

Bibliographic Sources Searched

Reduction in the number of sources searched is a possible modification explicitly mentioned in Grant and Booth’s (2009) conceptualization of systematized reviews, relative to the expectations of systematic review methodology. The number of sources searched (not including grey literature sources) by the included reviews is charted in Figure 6.

Figure 6

Number of scholarly sources listed by authors of included reviews as part of their search strategy (N total = 171).

Transparent reporting of sources in a review and appropriate selection of sources to minimize bias are important in all evidence synthesis types. Reporting only the name of a vendor platform (such as ProQuest or Ovid) or reporting a given database without mentioning the vendor platform is a common error in search reporting and reduces transparency and reproducibility of the search methods. We extracted the exact list of scholarly sources reported by authors of each systematized review. From this, we coded for mentions of searching a vendor platform or journal publisher site (such as Taylor & Francis or Wiley) as a source, as well as whether reviews included Google Scholar in their list of databases searched (as it is well known that Google Scholar does not provide an adequate level of reproducibility in searches). Of the 171 included reviews, 56 (32.7%) listed at least one of the following as a source: a vendor platform (n = 31), a journal publisher site (n = 28), or Google Scholar (n = 25).

Search Date

The date of the search is a required element of the PRISMA reporting standard (Page et al., 2021) and helps readers understand how current the data collection is in a review. Close to one third (n = 55, 32.2%) of included reviews provided no search date, whereas 49 included reviews (28.7%) provided exact search dates. The remaining 67 reviews (39.2%) provided either a range of dates exceeding a two-week period or provided only the month and year of the search.

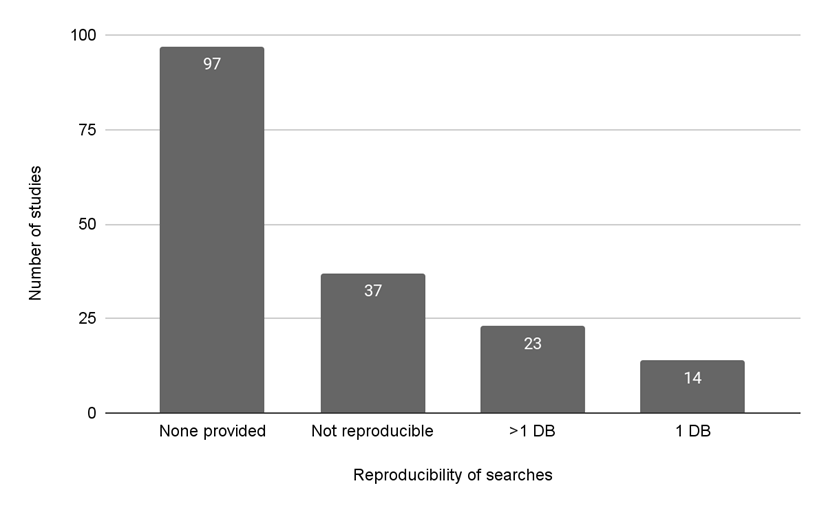

Search Strategies

We were interested in whether the authors provided sufficient information about the search strategy to allow it to be reproduced. We defined this as having, at a minimum, a search string that could theoretically be executed as written in the appropriate search tool with information about the fields searched and the search date. A list of keywords or a narrative description of how search terms were combined was not coded as a reproducible search. Only 37 (21.6%) of included reviews reported at least one reproducible search strategy (Figure 7).

Figure 7

Reproducibility of search strategies reported in the included systematized reviews (N Total = 171).
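The coding rule described above can be expressed as a small predicate. The Python sketch below is an illustration of that rule only; the `ReportedSearch` record and its field names are hypothetical and do not correspond to the extraction instrument.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReportedSearch:
    """Hypothetical record of what a review reports about one search."""
    search_string: Optional[str] = None    # executable query as written, if any
    fields_searched: Optional[str] = None  # e.g. "title" or "title/abstract"
    search_date: Optional[str] = None      # date the search was run
    keywords_only: bool = False            # True if only a keyword list or narrative


def is_reproducible(search: ReportedSearch) -> bool:
    """Apply the minimum criteria: executable string, fields, and search date."""
    if search.keywords_only:
        return False
    return all([search.search_string, search.fields_searched, search.search_date])
```

Under this rule, a review that lists keywords and describes how they were combined, but gives no executable string, would be coded as non-reproducible even if it reports a search date.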

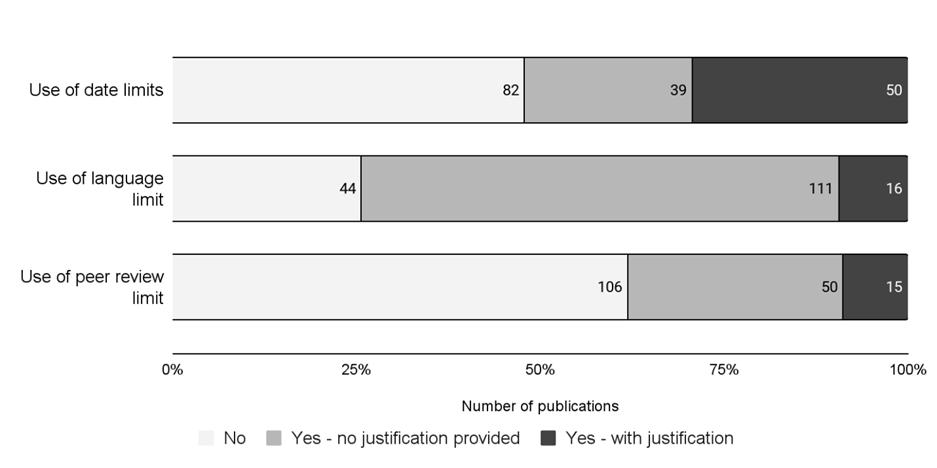

Use of Limits

Strategies to increase feasibility or narrow scope are common in systematized reviews, as conceptualized in the typology. These could be implemented using a variety of limits, such as limiting to peer-reviewed publications or limiting the publication dates or language of included studies. We recorded the frequency of use of these limits in the included reviews (Figure 8). We further coded whether the use of a limit was accompanied by a justification. We did not evaluate the justifications provided; we only noted their presence or absence. Of the limits we coded, the most used was the language limit (n = 127, 74.3%), 16 (12.6%) of which were accompanied by a justification. A little over half of included reviews (n = 89) used a date limit, with 56.2% (n = 50) of those providing a justification. The peer-reviewed limit was applied in 38.0% of included reviews (n = 65), 23% of which were accompanied by a justification.

Figure 8

Use of date, language, and peer-review limits in included reviews (N total = 171).

Note: The data labels show the absolute number of included reviews, while the horizontal axis shows the percentage.

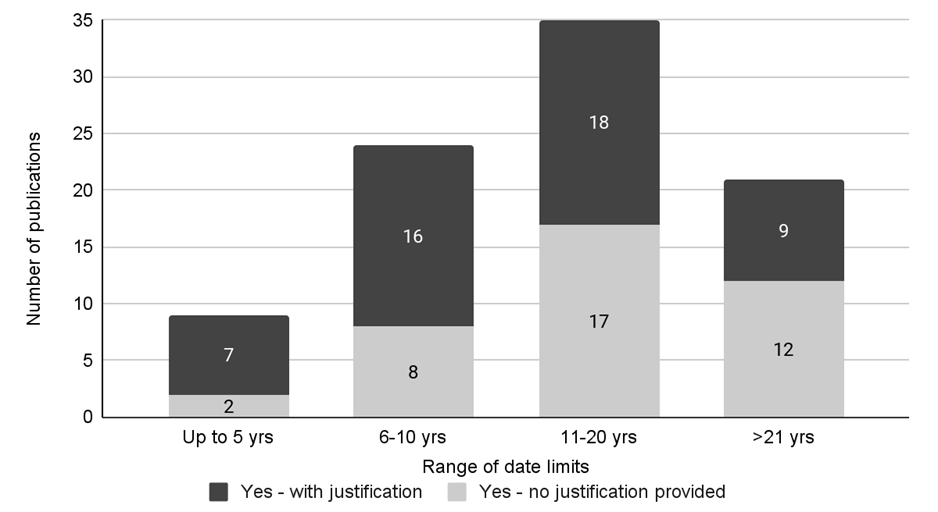

For those reviews that used date limits (n = 89, 52%), we extracted the range of limits used (Figure 9). We rounded partial years up to the next full year. We inferred a justification for date limits that we readily recognized as associated with a topic, namely, the start of the recent COVID-19 pandemic.
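
As an illustration of the rounding rule, the sketch below assigns a date limit to one of the range categories listed in the data extraction instrument (Appendix). The function name and the handling of exact boundaries are assumptions; the source states only that partial years were rounded up to the next full year.

```python
import math


def date_limit_category(years_covered: float) -> str:
    """Round a partial year up, then assign the range category."""
    if years_covered <= 0:
        raise ValueError("a date limit must cover a positive span of time")
    whole_years = math.ceil(years_covered)  # e.g. 5.2 years is treated as 6
    if whole_years <= 5:
        return "5 yrs or less"
    if whole_years <= 10:
        return "6-10 years"
    if whole_years <= 20:
        return "11-20 years"
    return ">20 years"
```

Under this rule, a limit covering slightly more than five years falls in the 6-10 years category rather than the shortest one.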

Figure 9

Range of date limits used in included reviews (N total = 89).

As shown in Figure 9, shorter ranges of dates (up to 5 years and 6-10 years) were accompanied by a justification more frequently than longer ranges.

We also extracted and categorized mentions of other limits. Format (for example, excluding books, theses, etc.) was the most commonly mentioned limit (n = 34), followed by access (n = 10). Limiting to human studies was mentioned only once. The use of a cap on the number of results screened was mentioned in three reviews. The use of a journal list or limiting to specific Web of Science categories was mentioned in two reviews. Five additional limits were not amenable to coding as they were multi-faceted or we were unable to interpret their description.

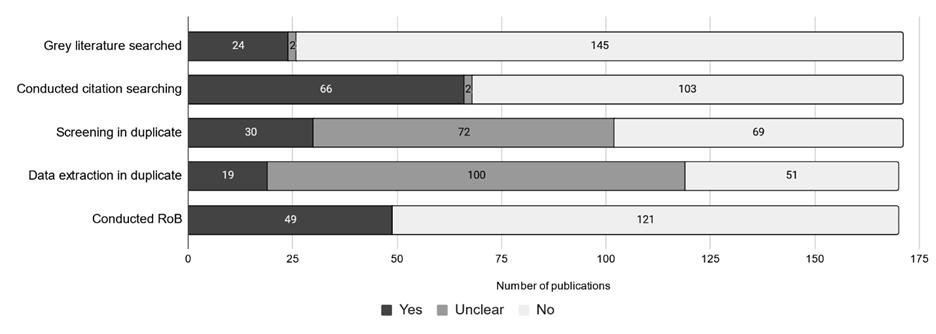

Grey Literature, Citation Searching, Tasks in Duplicate and Risk of Bias

We also coded for the presence or absence of several best practices in the conduct of systematic reviews. Some were steps identified in the typology as commonly impacted in systematized reviews, including screening or extraction in duplicate, or a formal Risk of Bias assessment. Additionally, we recorded whether reviews mentioned grey literature searching and citation searching.

As shown in Figure 10, separate grey literature searching was done in 24 reviews (14.0%), and citation searching was mentioned in 66 reviews (38.6%). Confirmed duplicate screening and extraction were mentioned in 30 (17.6%) and 19 (11.1%) reviews, respectively. Less than a third of included reviews (n = 49, 28.8%) conducted quality appraisal (Risk of Bias assessment), while 121 (70.8%) did not. While we did not record the exact number, we noticed that some authors attributed their choice not to do quality appraisal to the description in the typology.

Figure 10

Inclusion of grey literature searching, citation searching, duplicate screening, duplicate data extraction and inclusion of Risk of Bias (RoB) within the included reviews.

Note: N total = 171 for grey literature, citation searching, and duplicate screening; however, N total = 170 for duplicate data extraction and inclusion of RoB as one included review was an empty review (i.e., no included studies).

Librarian Involvement

Conduct standards mention the involvement of librarians in evidence syntheses and include evidence of the value they add to the quality of published evidence syntheses (Lefebvre et al., 2024). Most included reviews (n = 135, 78.9%) did not mention a librarian in any way (Table 4). Of the remaining 36 reviews, the most frequent mention came in the form of an acknowledgment (n = 17, 9.9%), followed by a mention elsewhere in the manuscript (n = 10, 5.8%), most often the methods section. The codes were mutually exclusive, and we applied the code corresponding to the highest level of involvement mentioned in each review. We treated an acknowledgment as representing more substantive involvement than a mention in the body of the paper. Three reviews (1.8%) had a clearly identifiable librarian co-author, and six reviews (3.5%) were studies from within the Library and Information Sciences disciplines where librarians and information sciences professionals were expected to make up the majority of the review author team.

Table 4

Librarian Involvement Mentioned in the Included Reviews (N total = 171)

| Librarian involvement | Count | Percentage |
| --- | --- | --- |
| Co-author | 3 | 1.8% |
| Librarian or IS mentioned in manuscript | 10 | 5.8% |
| Librarian thanked in acknowledgements | 17 | 9.9% |
| LIS/IS study | 6 | 3.5% |
| Not mentioned | 135 | 78.9% |
| Total | 171 | 100.0% |
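
The mutually exclusive coding behind Table 4 can be sketched as a precedence rule in which each review receives the highest-ranked code among the kinds of involvement it mentions. This is a minimal sketch under stated assumptions: the source confirms that an acknowledgment outranks a mention in the body of the paper, while placing co-authorship at the top is our assumption. The LIS/IS study code is left out because the source does not state where it ranks, and the function name is hypothetical.

```python
from typing import Iterable

# Ordered from highest to lowest level of involvement.
# Co-authorship at the top is an assumption; the source only states that an
# acknowledgment outranks a mention in the body of the manuscript.
INVOLVEMENT_RANK = [
    "Co-author",
    "Librarian thanked in acknowledgements",
    "Librarian or IS mentioned in manuscript",
]


def code_involvement(mentions: Iterable[str]) -> str:
    """Return the single highest-ranked code among the mentions found."""
    found = set(mentions)
    for level in INVOLVEMENT_RANK:
        if level in found:
            return level
    return "Not mentioned"
```

A review that both thanks a librarian and describes the librarian's role in the methods section would therefore be counted once, under acknowledgements.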

Discussion

In this scoping review, we summarized some of the common methodological characteristics of systematized reviews published in the peer-reviewed scholarly literature, in which we include both conference proceedings and journal articles. Some notable points are discussed below.

How is the Idea of Systematized Reviews Propagating?

Grant and Booth (2009) do not appear to claim the coinage of “systematized review” in their typology, but it is clearly considered a foundational document or an introduction to this terminology/concept for authors of the included reviews. A substantial number (73, 42.7%) of our included studies cite the typology for conceptualization or methodology. That the example provided in the 2009 typology is not titled a systematized review, and that the earliest of our included studies is from 2013, suggests the term was at least not prevalent until the publication of the typology.

How Are Review Authors Using the Grant and Booth (2009) Typology?

Grant and Booth’s (2009) typology of reviews provides a useful overview of prominent types present in the health information landscape. In a field of proliferating and evolving review methods, they note the value of this inventory and characterization: “given the importance evidence-based practice places upon the retrieval of appropriate information, such diverse terminology could, if unchecked, perpetuate a confusion of indistinct and misapplied terms” (p. 93). While their typology aids the reader and prospective review author alike in anticipating strengths and weaknesses of the methods associated with each review type, we observe that the characterization of the systematized review phenomenon therein is frequently mistaken for a standard for conduct, akin to what is articulated in guidance documents like the Cochrane Handbook for Systematic Reviews of Interventions (Higgins et al., 2024). While we did identify one author of guidance documents for the conduct of systematized reviews (Codina, 2018a, 2020), these are not among the commonly cited guides in our included reviews. More frequently cited are evidence synthesis reporting standards, or Grant and Booth’s typology itself.

Are Systematized Reviews Modeled on Systematic Reviews?

Most of the conceptualizations of systematized reviews in the included reviews appear to have systematic reviews as the standard from which their methods depart, which comports with Grant and Booth’s (2009) characterization of the type. While we did not extract the content of conceptualizations because we did not anticipate their regular provision, we did observe some variability in interpretation. A few of the conceptualizations mentioned in the reviews include particular suitability for the synthesis of social science literature or non-traditional publication formats, as well as both narrowly and broadly scoped questions.

Grant and Booth (2009) observed that the development of novel review types is in part driven by the need to synthesize a wider variety of works as more disciplines adopt evidence-based practice. As systematized reviews gain greater representation in the peer-reviewed literature (as suggested by the modest but steady annual increase in publications we observed), the conceptualization of the systematized review type as a modification with variable expressions may make it amenable to becoming a catch-all label. Conversely, various reviews that take inspiration from the typology attribute disparate particulars to the systematized review type that we could not find in Grant and Booth’s (2009) description. Many studies did not justify choosing a systematized review, suggesting this type is seen as a standard option in its own right, albeit with little consistency in methodological attributes. In some cases, we suspect it is conflated with the systematic review, which we observe is sometimes viewed as the generic evidence synthesis type. Interestingly, the frequency of justifying the choice of systematized review is, if anything, increasing over time.

During the scoping search and screening phase of this study, we encountered several related review names, some of which are listed below. Those in which “systematized” and “review” were within three words of each other were in our search results and therefore screened, but most did not meet all our inclusion criteria.

● Systematized scoping review

● Systematized bibliographic review

● Systematized narrative review

● Systematised literature review

● Semi-systematic review

● Systematized methodological review

● Rapid systematized literature review

● Systematized survey

The variants that include narrative, literature, and bibliographic can be expected, but the variants that include scoping and rapid may imply that systematized is a label that some authors perceive as being applicable to review types beyond the systematic review. Or it could simply be that authors use systematized and systematic as synonyms without knowing the specific implications of the terms. We gather from examples that a systematization, a term that also appeared in our screening set, may have discipline-specific meaning distinct from that of a systematized review, but with which we lack familiarity. Future research could further explore the motivations of authors of published systematized reviews.
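
The proximity criterion mentioned above, with “systematized” and “review” within three words of each other, can be approximated as follows. This is a rough sketch, not the search the authors ran: database proximity operators count intervening words in different ways, and the tokenization, the accepted spelling variants, and the function name are assumptions.

```python
import re

SYSTEMATIZED = {"systematized", "systematised"}
REVIEW = {"review", "reviews"}


def within_n_words(title: str, n: int = 3) -> bool:
    """True if a 'systematized' variant and 'review' sit at most n word positions apart."""
    words = re.findall(r"[a-z]+", title.lower())
    sys_positions = [i for i, w in enumerate(words) if w in SYSTEMATIZED]
    rev_positions = [i for i, w in enumerate(words) if w in REVIEW]
    return any(abs(i - j) <= n for i in sys_positions for j in rev_positions)
```

Under this sketch, a title such as “Rapid systematized literature review” matches, whereas “Semi-systematic review” does not because it contains no form of “systematized”.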

Are Modifications to Systematic Review Methodology in Systematized Reviews Prompted by Resource Constraints?

A key component of the characterization of systematized reviews is that they are typically conducted by students, who generally cannot involve co-authors at the level expected for full systematic reviews (Grant & Booth, 2009). While we did not have the means to identify which reviews had student authors, we noted that the included reviews did in some cases mention that the paper was initially conceived or conducted by a postgraduate student. However, this was not the case for the majority of included reviews. Only 19 of the included systematized reviews had a single author (though not necessarily a postgraduate student), and 25 reviews mentioned the lack of a second person for screening or extraction as a constraint that contributed to their choice of the systematized review type. It should be noted that we intentionally excluded theses and articles from student journals in order to examine reviews that enter the peer-reviewed literature. Such works made up a substantial number of the systematized reviews excluded based on format/publication status.

The relative frequency of various departures from systematic review standards was surprising because, counter to the characterization in the typology, they are not primarily resource-based. Time was the least mentioned constraint (n = 6 studies), and elements we expected to see attenuated relative to systematic review methodology, such as the number of databases searched, were not frequently affected. Conversely, relatively non-resource dependent elements, such as observance of reporting standards, could be improved in many of the systematized reviews in this sample. The substantial number of included reviews where we had to code “unclear” for whether they had done screening and data extraction in duplicate is just one example. Unclear reporting was prevalent despite the frequency with which reporting guides, such as PRISMA variants, were cited for the conduct of reviews, which indicates a lack of differentiation between methodological and reporting guidance.

Together, our observations suggest that, to ensure review methods are well matched to research goals, building familiarity among review authors, publishers, and consumers of the variety of codified review types and their particular trade-offs between “rigour and relevance” (Grant & Booth, 2009, p. 92) is at least as important as addressing resource constraints.

Are Published Systematized Reviews Utilized to Model the Systematic Review Process While Stopping Short of Conducting a Full Review?

The search stage, as Grant and Booth (2009) observe, offers an opportunity for authors to demonstrate awareness of systematic review standards while adapting the method to fit resource constraints by developing a robust search string but limiting the scope to a single database. We therefore expected that the search stage, and any modifications thereto, would be well documented.

Based on the results we observed, it appears most authors of systematized reviews are not choosing to limit their search to one database, as only 15 of the included reviews (8.8%) searched only a single source/database. The majority (n = 134, 78.9%) of the reviews searched 3 or more scholarly sources. However, the number of reviews that provided reproducible search strategies for at least one database (n = 37, 21.6%) was low. Our results show that there is room for improvement in the demonstration of the technical proficiency of the search step, especially in the reporting of the search, as we did not assess the comprehensiveness of the search strategies provided.

Limitations

We sought explicitly titled systematized reviews that provided a minimum description of the methods used and synthesized traditional sources of information, such as scholarly and grey literature, as would be expected for a literature review, rather than a content or bibliometric analysis. Furthermore, we only included reviews published in sources where we expected editorial and peer review, to characterize what journal editors and academic peer reviewers would accept as a publishable systematized review. These explicit requirements would result in some systematized reviews being excluded, either because their description of the method therein did not meet our threshold, or they were published as theses or in primarily student-oriented journals from a single institution. These requirements led to a final sample size of 171. We note that the majority of the 126 records we excluded due to format (theses, student journals) were indeed student work. Due to this choice of scope, we did not characterize what may be a substantial proportion of publicly available systematized reviews.

Our search strategy included only English language keywords. A cursory search for translations of “systematized review” that appear in Portuguese and Spanish language reviews (“revisão sistematizada” and “revisión sistematizada”) retrieved 372 and 269 records, respectively, in an all-fields search of the Scholarly Works category of the Lens.org database (on December 22, 2024). These retrieval numbers suggest there is a substantial corpus we could not consider given that we did not search using terms for systematized reviews in other languages. Furthermore, while we did not exclude systematized reviews in languages other than English until the full-text screening stage, we recognize that any specific trends that may appear in systematized reviews in another language (such as common citing documents or methodological processes) would not be captured in our results.

In keeping with expectations for scoping reviews, we did not update the search prior to publication as would be required for a systematic review of a fast-developing evidence base (Aromataris et al., 2024). Our review provides a picture of scholarly practice at a defined point in time and, therefore, is not in jeopardy of being superseded by recent publications. Moreover, our review did not show temporal methodological trends, nor are we aware of any emerging conditions that suggest our recommendations would cease to apply in the face of the most recent publications as they largely serve as a focused reminder of existing standards.

A challenge we encountered frequently during data extraction was missing or unclear information. In those cases, we attempted to be consistent in our approach, and where possible, generous in our interpretation; however, it is possible that in certain cases, we may have misinterpreted what authors reported based on the word choice used.

While it is not a limitation of this study, readers should be aware that, despite its use across a variety of disciplines, the Grant and Booth (2009) typology that introduced the systematized review type was focused on reviews in the health and health information literature, as is the subsequent typology (Sutton et al., 2019) that placed it in the context of review families.

Conclusion

Implications for Practice

Librarian awareness of these attributes of published systematized reviews can help direct their educational efforts, and their attention as peer reviewers and authors. Below, we provide some actionable recommendations to improve the quality of published systematized reviews and ensure that knowledge users understand how to properly evaluate this type of review. These recommendations are based on the findings of this scoping review and our awareness of evidence synthesis standards.

For Review Authors

Consult resources, such as the Right Review Tool, and research librarians to select a review type best suited to research goals.

We applaud the inclination of researchers undertaking a narrative review to add systematic elements. It is quite acceptable to include a methods section and demonstrate elements of systematicity without adding the label of “systematized review.” If they choose to label the resultant study as a systematized review, the utility of the study will be supported by adherence to key standards, including to:

1. Publish/deposit a research protocol prior to initiation of the study and describe which steps of the underlying (typically systematic) review type the authors will attempt to implement to a standard, and which steps will not be completed to the same extent or omitted altogether.

2. Utilize a methodological conduct guidance document to guide the steps of the review that are to be done systematically.

3. Justify or explain the choice to do a systematized review in the methods section and explain the purpose and constraints within which the review is being conducted, if any exist.

4. Increase the adherence to reporting standards for the steps/elements of the review that were conducted systematically. Explicitly report deviations from methodological practice or absence of a step/requirement (e.g. we only used a single screener, or we did not have an a priori protocol). Good reporting allows readers to understand exactly what methodological steps were done and how.

For Readers/Consumers of Systematized Reviews

Readers should understand that, despite the similarity of terms, systematic and systematized refer to two different types of reviews. Despite the characterization of systematized reviews as having systematic elements modelled on systematic review methodology (Grant & Booth, 2009), the most recently published typology (Sutton et al., 2019) that mentions both these review types places systematized reviews in a category called Purpose Specific Reviews, rather than the Systematic Review Family. Systematized reviews are most frequently not designed with rigour as the prioritized value, so they are not necessarily appropriate for practitioner decision-making. However, if a systematized review is the best available information source, then the reader should assess the methodological quality of the review using an appropriate, validated quality appraisal tool to understand the risk of bias of the review, as would be expected in evidence-based practice.

For Journals and Peer Reviewers

Since the underlying review type for the systematized review is typically the systematic review, expect the same level of reporting as one would expect from a systematic review. Where specific steps were omitted or modified, require the authors to explicitly report that a given step was not done. While a systematized review cannot be held to the conducting standard of a systematic review for all steps, it can and should be held to transparent reporting using a standard such as PRISMA (Page et al., 2021).

In conclusion, the typology of reviews that introduced the systematized review label closes with a call to action for “an internationally agreed set of discrete, coherent and mutually exclusive review types” (Grant & Booth, 2009, p. 106) to inform various interested parties in the commissioning, production, and use of evidence synthesis products. The methodological heterogeneity among the systematized reviews included in this scoping review comports with Grant and Booth’s (2009) characterization of this review type. Our findings highlight that it remains incumbent upon review authors to make their methods clear through both title selection and thorough reporting, and for reviewers/readers/consumers of systematized reviews to be cognizant of the implications thereof.

Author Contributions

Zahra Premji: Conceptualization (equal), Methodology (equal), Formal analysis (equal), Investigation (equal), Data curation (equal), Visualization, Writing – original draft preparation (equal), Writing – review & editing (equal)

Leyla Cabugos: Project administration, Funding acquisition, Conceptualization (equal), Methodology (equal), Formal analysis (equal), Investigation (equal), Data curation (equal), Writing – original draft preparation (equal), Writing – review & editing (equal)

Funding statement

The fees associated with publishing the pre-registered protocol were supported by the Research, Scholarly and Creative Activities Program awarded by the Cal Poly Division of Research to Leyla Cabugos. The funder did not play a role in the study design, data collection and analysis, decision to publish, or preparation of the manuscript.

Competing Interests

The authors have no conflicts to declare and are not authors of any of the included studies.

References

Amog, K., Pham, B., Courvoisier, M., Mak, M., Booth, A., Godfrey, C., Hwee, J., Straus, S. E., & Tricco, A. C. (2022). The web-based “Right Review” tool asks reviewers simple questions to suggest methods from 41 knowledge synthesis methods. Journal of Clinical Epidemiology, 147, 42–51. https://doi.org/10.1016/j.jclinepi.2022.03.004

Aromataris, E., Lockwood, C., Porritt, K., Pilla, B., & Jordan, Z. (Eds.). (2024). JBI manual for evidence synthesis. JBI. https://doi.org/10.46658/JBIMES-24-01

Borrego, M., Foster, M. J., & Froyd, J. E. (2014). Systematic literature reviews in engineering education and other developing interdisciplinary fields. Journal of Engineering Education, 103(1), 45–76. https://doi.org/10.1002/jee.20038

Codina, L. (2018a). Revisiones bibliográficas sistematizadas: Procedimientos generales y framework para ciencias humanas y sociales [Master's Thesis, Universitat Pompeu Fabra]. e-Repositori upf. http://hdl.handle.net/10230/34497

Codina, L. (2018b). Sistemas de búsqueda y obtención de información: Componentes y evolución. Anuario ThinkEPI, 12, 77–82. https://doi.org/10.3145/thinkepi.2018.06

Codina, L. (2020). Revisiones sistematizadas en ciencias humanas y sociales. 3: Análisis y Síntesis de la información cualitativa. In C. Lopezosa, J. Díaz-Noci, & Ll. Codina, (Eds.), Methodos anuario de métodos de investigación en comunicación social, 1 (pp. 73–87). Universitat Pompeu Fabra. https://doi.org/10.31009/methodos.2020.i01.07

Grant, M. J., & Booth, A. (2009). A typology of reviews: An analysis of 14 review types and associated methodologies. Health Information & Libraries Journal, 26(2), 91–108. https://doi.org/10.1111/j.1471-1842.2009.00848.x

Higgins, J. P. T., Thomas, J., Chandler, J., Cumpston, M., Li, T., Page, M. J., & Welch, V. A. (Eds.). (2024). Cochrane handbook for systematic reviews of interventions (Version 6.5). Cochrane. www.training.cochrane.org/handbook

Khalil, H., Campbell, F., Danial, K., Pollock, D., Munn, Z., Welsh, V., Saran, A., Hoppe, D., & Tricco, A. C. (2024). Advancing the methodology of mapping reviews: A scoping review. Research Synthesis Methods, 15(3), 384–397. https://doi.org/10.1002/jrsm.1694

Lefebvre, C., Glanville, J., Featherstone, R., Littlewood, A., & Metzendorf, M. I. (2024). Chapter 4: Searching for and selecting studies. In Higgins, J. P. T., Thomas, J., Chandler, J., Cumpston, M., Li, T., Page, M. J., & Welch, V. A. (Eds.), Cochrane Handbook for Systematic Reviews of Interventions (Version 6.5). Cochrane. https://cochrane.org/handbook

Munn, Z., & Pollock, D. (2024). SPECTRAL Database: Guidance document [PDF]. https://osf.io/vwmfd

Munn, Z., Stern, C., Aromataris, E., Lockwood, C., & Jordan, Z. (2018). What kind of systematic review should I conduct? A proposed typology and guidance for systematic reviewers in the medical and health sciences. BMC Medical Research Methodology, 18, 1–9. https://doi.org/10.1186/s12874-017-0468-4

Page, M. J., McKenzie, J. E., Bossuyt, P. M., Boutron, I., Hoffmann, T. C., Mulrow, C. D., Shamseer, L., Tetzlaff, J. M., Akl, E. A., Brennan, S. E., Chou, R., Glanville, J., Grimshaw, J. M., Hróbjartsson, A., Lalu, M. M., Li, T., Loder, E. W., Mayo-Wilson, E., McDonald, S., … Moher, D. (2021). The PRISMA 2020 statement: An updated guideline for reporting systematic reviews. Systematic Reviews, 10, 1–11. https://doi.org/10.1186/s13643-021-01626-4

Peters, M. D. J., Godfrey, C., McInerney, P., Munn, Z., Tricco, A. C., & Khalil, H. (2020). Chapter 10: Scoping reviews. In E. Aromataris & Z. Munn (Eds.), JBI Manual for Evidence Synthesis. JBI. https://doi.org/10.46658/JBIMES-24-09

Premji, Z., & Cabugos, L. (2023). Examining the meaning and methodological characteristics of the systematized review label: A scoping review protocol. PLOS ONE, 18(9), 1–7. https://doi.org/10.1371/journal.pone.0291145

Shea, B. J., Reeves, B. C., Wells, G., Thuku, M., Hamel, C., Moran, J., Moher, D., Tugwell, P., Welch, V., Kristjansson, E., & Henry, D. A. (2017). AMSTAR 2: A critical appraisal tool for systematic reviews that include randomised or non-randomised studies of healthcare interventions, or both. BMJ, 358, 1–9. https://doi.org/10.1136/bmj.j4008

Sutton, A., Clowes, M., Preston, L., & Booth, A. (2019). Meeting the review family: Exploring review types and associated information retrieval requirements. Health Information & Libraries Journal, 36(3), 202–222. https://doi.org/10.1111/hir.12276

Tricco, A. C., Lillie, E., Zarin, W., O’Brien, K. K., Colquhoun, H., Levac, D., Moher, D., Peters, M. D. J., Horsley, T., Weeks, L., Hempel, S., Akl, E. A., Chang, C., McGowan, J., Stewart, L., Hartling, L., Aldcroft, A., Wilson, M. G., Garritty, C., … Straus, S. E. (2018). PRISMA extension for scoping reviews (PRISMA-ScR): Checklist and explanation. Annals of Internal Medicine, 169(7), 467–473. https://doi.org/10.7326/M18-0850

Urrútia, G., & Bonfill, X. (2010). Declaración PRISMA: Una propuesta para mejorar la publicación de revisiones sistemáticas y metaanálisis. Medicina Clínica, 135(11), 507–511. https://doi.org/10.1016/j.medcli.2010.01.015

Vrabel, M. (2015). Preferred Reporting Items for Systematic Reviews and Meta-Analyses. Oncology Nursing Forum, 42(5), 552–554. https://doi.org/10.1188/15.ONF.552-554

Appendix

Data Extraction Instrument Built in Google Sheets

| Column heading | Input type | Options, if applicable |
| --- | --- | --- |
| Citation | Open text | N/A |
| DOI | Open text | N/A |
| Publication year | Open text | N/A |
| Discipline | Dropdown | Health, Humanities, Social Sciences, Science, Multidisciplinary |
| Number of authors | Open text | N/A |
| Number of scholarly sources searched (AMSTAR 2 - item 4) | Open text | N/A |
| Exact text of scholarly sources list | Open text | N/A |
| Grey literature searched | Dropdown | Yes, No, Unclear |
| Search date provided (PRISMA 2020 Expanded Checklist - item 6) | Dropdown | Yes, No, Range provided |
| Citation searching (AMSTAR 2 - item 4) | Dropdown | Yes, No |
| Reproducible search included (PRISMA 2020 - item 7) | Dropdown | None, Not reproducible 1 DB, >1 DB |
| Protocol (PRISMA 2020 - item 24b) | Dropdown | Mentioned absence, Not mentioned, Mentioned, Published link provided |
| Date limit used | Dropdown | Yes, No |
| Range of limit used | Dropdown | 5 yrs or less, 6-10 years, 11-20 years, >20 years |
| Date limit justification reason | Open text | N/A |
| Other limits found | Open text | N/A |
| Screening in duplicate (AMSTAR 2 - item 5) | Dropdown | Yes, No, Unclear |
| Data extraction in duplicate (AMSTAR 2 - item 6) | Dropdown | Yes, No, Unclear |
| Risk of Bias conducted | Dropdown | Yes, No, Unclear |
| Conducting guide | Dropdown | Guide or handbook referenced, published example referenced, None |
| Reporting guide | Dropdown | Guide or handbook referenced, published example referenced, None |
| Justification for selecting a systematized review mentioned | Dropdown | Yes, No |
| Exact text used in justification for choice of methodology | Open text | N/A |
| Citation provided for conceptualization of a systematized review (e.g. Grant & Booth, 2009) | Open text | N/A |
| Librarian involvement | Dropdown | Not mentioned, Librarian coauthor, Librarian mentioned in methods, Librarian mentioned in acknowledgement, LIS/IS study |
| Additional comments | Open text | N/A |