Research Article

Developing an OPEN Framework for Asking EBLIP Questions in Open Education

Emilia C. Bell

Manager, Research & Digital Services

Murdoch University Library

Perth, Western Australia, Australia

Email: Emilia.Bell@murdoch.edu.au

Adrian Stagg

Manager, (Open Educational Practice)

University of Southern Queensland

Toowoomba, Queensland, Australia

Email: Adrian.Stagg@unisq.edu.au

Received: 12 Aug. 2025 Accepted: 11 Dec. 2025

2026 Bell and Stagg. This is an Open Access article distributed under the terms of the Creative Commons‐Attribution‐Noncommercial‐Share Alike License 4.0 International (http://creativecommons.org/licenses/by-nc-sa/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly attributed, not used for commercial purposes, and, if transformed, the resulting work is redistributed under the same or similar license to this one.

DOI: 10.18438/eblip30867

Abstract

Objective – This paper proposes a novel framework for asking questions in evidence based library and information practice (EBLIP) called OPEN (Objective, Purpose, Evidence, and Narrative). It responds to the question: How can a framework for asking EBLIP questions be developed and applied to open educational practices (OEP)?

Methods – Using a single-case study design, the framework was developed using an inductive approach throughout a three-year collaborative project to create a data dashboard. The project was documented, and these qualitative project artefacts were analyzed. The analysis was used to develop the framework and determine its value.

Results – Arising concurrently from the data dashboard project, the OPEN framework comprises four elements: Objective (What do I need to know?), Purpose (Why do I need to know this?), Evidence (What evidence do I have or need?), and Narrative (How will I communicate this evidence?). These elements guide library and information professionals to define what they need to know, collect, and communicate to make evidence based decisions. The case study demonstrates how the framework can be applied to OEP.

Conclusion – Existing evidence based practice (EBP) frameworks developed for clinical environments are difficult to apply to the wide range of EBLIP initiatives, which are often more exploratory, or more immediate in their evidence needs. UniSQ Library's collaborative data dashboard project highlighted the need to develop a flexible framework that diverse stakeholders, including OEP practitioners, with differing levels of experience in evidence based practice, could apply. This research found that the collaborative and reflective nature of the project was instrumental in developing both a useful data dashboard to empower authors to tell their own data stories, and a new framework that contributes to the future of EBLIP and enhances the ability of OEP practitioners to meaningfully engage with EBP.

Introduction

A culture of evidence based library and information practice provides opportunities to demonstrate the value and impact of open educational resources in libraries and higher education and facilitate service improvement for open educational practice. Asking evidence based questions is a crucial part of an EBLIP culture and evidence based decision-making. Frameworks for questions, however, are often based on search strategies designed for health sciences research (Davies, 2011). This paper proposes a novel and accessible framework for asking questions in EBLIP called OPEN (Objective, Purpose, Evidence, and Narrative). Using the University of Southern Queensland (UniSQ) Library, a regional university library in Australia, as a case study, it explores how an OPEN framework supports the development of a local evidence base to meet the strategic needs of program managers in the Library. The outcomes support the use of an OPEN framework for value and impact and continuous improvement initiatives in open educational practice.

This paper proposes the OPEN framework as an approach to asking questions. It is complementary to existing EBLIP models, with a specific focus on supporting practitioners to develop questions as part of the 6As EBLIP model. EBLIP is an approach to evidence based practice and evidence based decision-making in libraries and is applicable across all library sectors and functional areas. The “6As” model includes the following stages: Articulate, Assemble, Assess, Agree, Adapt, and Advocacy (or Announce). The original five “A’s” were proposed by Koufogiannakis (2013); the last “A” was proposed by Thorpe (2021) and recognises the importance of communicating evidence and outcomes.

One area of library work that the EBLIP model can support, and the focus of this paper, is open educational practice (OEP). The case study follows a collaborative project between UniSQ Library’s Evidence Based Practice team and Open Educational Practice team. In the process of developing a data dashboard, these teams identified the need for a new framework for asking EBLIP questions. The dashboard used web analytics data to show the reach of UniSQ’s open textbooks and provided data access to the open textbook authors. The dashboard project highlighted the need for new approaches to formulate questions for evidence based practice in organisational contexts.

Background

Open educational resources (OER) have been a part of the learning and teaching landscape internationally for over fifteen years. Specifically, OER refer to:

teaching, learning and research materials in any medium, digital, or otherwise, that reside in the public domain or have been released under an open license that permits no-cost access, use, adaptation, and redistribution by others with no or limited restrictions. Open licensing is built within the existing framework of intellectual property rights as defined by relevant international conventions and respects the authorship of the work. (UNESCO, 2012, p. 1).

More recently, open practitioners have embraced a wider application of OER, expanding and maturing the field to consider how the affordances of open education (notably open licences) can be leveraged to enhance learning and teaching, and reposition the student experience as co-creators of knowledge. Open educational practices (OEP) are therefore defined as:

teaching and learning practices where openness is enacted within all aspects of instructional practice; including the design of learning outcomes, the selection of teaching resources, and the planning of activities and assessment. OEP engage both faculty and students with the use and creation of OER, draw attention to the potential afforded by open licences, facilitate open peer-review, and support participatory student-directed projects. (Paskevicius, 2017, p.127).

The wider application of OER and maturation of the field reflects similar developments in EBLIP that have seen a shift away from evidence hierarchies, broadening of evidence types, and appreciation for local contexts (Wilson, 2025).

In August 2021, UniSQ Library’s Coordinator (Evidence Based Practice) and Open Educational Practice team initiated a project focused on data and open textbooks that remains in place to this day. At their first meeting, the two teams identified the potential for a data dashboard to support the continuous improvement of open textbook services across UniSQ and to communicate their value and impact. Existing frameworks for asking questions did not entirely capture the exploratory nature of this work or the diverse range of internal and external stakeholders who would access the data dashboard, such as library professionals, academics and authors, editorial and publishing staff, and administrators and senior leaders. This misalignment of existing question frameworks reflected the experiences of the Coordinator (Evidence Based Practice) in engaging diverse library teams and professionals, across different roles and levels, who were often initially interested in engaging with EBLIP in informal and exploratory ways.

The continuing development of data dashboards provided opportunities to empower open textbook authors, including academics, to have agency around their own data. By using the dashboard, authors could communicate narratives about the reach and use of their open textbook. The data shared often extended into stories of value and impact for students. One of UniSQ’s most popular open texts, Fundamentals of Anatomy and Physiology, is read by a diverse cohort, including students impacted by geographic barriers. The Great Dividing Range is a mountain range that stretches along the east coast of Australia, from Victoria to North Queensland. In Queensland it marks a digital divide between the east coast and the rural and remote inland west (Thomas et al., 2025). West of the range, internet connectivity is poor, presenting barriers to ebook access for online students studying in those regions. The data dashboard showed that students within this cohort preferred to download PDF versions of the text and read them on mobile phones. Using an evidence based approach enabled us to better understand students’ reading strategies and their educational reality. This ensured they had access to the formats needed for their studies. Through the narrative stage, the Library and open textbook authors shared data stories that were tailored to university stakeholders to communicate key insights from our evidence.

Focusing on UniSQ Library's Open Educational Practice and Evidence Based Practice teams’ collaboration, this paper provides an opportunity to reflect on the values and voices of open practitioners, communities, and stakeholders through focused questions that guide data collection on open textbooks. The findings of this paper also reinforce how developing these questions can highlight the underlying values that surround library practice and build trust with the communities we work with.

Aims

This paper responds to the research question: How can a framework for asking EBLIP questions be developed and applied to open educational practice in a library? Using a case study approach, this paper presents a new framework that was developed for open educational practice reporting tools in libraries. The OPEN framework arose from a need to guide decisions about what practitioners need to know, collect, and communicate when it comes to evidence in open educational practice. The OPEN framework is presented as an alternative to existing question frameworks (such as PICO, SPIDER, and SPICE) to better align with the needs of open education practitioners and library professionals.

The scholarly contribution of this paper, however, goes beyond the development and application of a new question framework. It seeks to contribute to future directions for EBLIP and an evidence based profession by demonstrating a collaborative and accessible approach to EBLIP. The case study provides an example of how the authors approached building an evidence base collaboratively through the design of a robust question framework that is widely applicable and accessible at all levels and stages of a practitioner's career or place in the library. This paper aims to demonstrate the practical application of this framework and approach.

Literature Review

Evidence remains a foundational and significant aspect of decision-making and continuous improvement in libraries. Formulating and asking questions has long been recognised as key to this process and embedded in EBLIP models. How questions are formulated has shifted over time. In taking a holistic view of EBLIP, Koufogiannakis and Brettle (2016, pp. 19–20) describe how:

We need to ensure that the question allows us to capture what we already know and incorporates local evidence and our professional knowledge. Therefore, it is more appropriate that the wording and content of the question will allow us to consider all the relevant evidence that we may want to use in order to answer the question.

A holistic view of EBLIP reflects the need for evidence in libraries to be relevant to local contexts and include professional knowledge. It indicates a shift in EBLIP maturity as it has evolved from its origins in evidence based medicine, into evidence based librarianship, and onward into the EBLIP model. Frameworks for formulating questions, however, have not evolved in the same fashion, and remain tied to health sciences, clinical medicine, and health librarianship contexts (Koufogiannakis & Brettle, 2016, pp. 20–21). While familiar to librarians working in or with health disciplines, such question frameworks do not immediately translate to other academic library contexts and are not always responsive to more exploratory or impromptu EBLIP needs.

Frameworks for formulating EBLIP questions rely heavily on health sciences and clinical medicine approaches. The PICO model, for example, arose from evidence based medicine and has been shown to be overly clinically oriented in its structure and terminology (Koufogiannakis & Brettle, 2016, p. 21). As such, it is not as effectively adapted to library and information science contexts. Other frameworks like SPIDER, ECLIPSE, and SPICE are better suited to qualitative research questions or project and service evaluations. These, however, are often still framed within formal research and systematic review contexts, presenting limitations and barriers. The Library’s EBP and OEP team recognised the need to support diverse stakeholders in EBLIP, whether as library professionals evaluating their own operations or as stakeholders and administrators requiring access to data for reporting. It was recognised that the OPEN framework could support those in the exploratory stages of their EBLIP journey and those requiring more immediate access to evidence through dashboards, such as for business intelligence or academic career progression reporting.

From an OEP perspective, a purposeful, deliberate, and collaborative approach to data collection progressed a maturation of practice institutionally (simultaneously building awareness in a range of fora), whilst providing an evidence base for teaching and learning decision-making. When focusing on OER alone, an institution’s questions will predominantly address matters of storage and access, reliant on purely quantitative data. At this stage, the data is often driven by a need to show cost savings for students, the extent to which OER are used by faculty, and the potential reach and attention of OER outside the institution. These applications described the initial data collection and communication practices at UniSQ. The disadvantages were revealed through a lack of nuance in the usage and reception of OER, and whether those using OER had made a positive contribution to student learning and teaching. The narratives were therefore simplistic and purely quantitative, often raising more questions that could not be answered by the available evidence.

As EBLIP continues to mature at an organisational level (Thorpe & Howlett, 2020), there is a need to ensure capabilities are embedded across all levels and areas. Alongside developing capabilities in formulating evidence based questions across all levels, values play a role. In decision-making with evidence, Koufogiannakis (2013, p. 14) poses an important question as being: “Is the decision in keeping with our organization’s goals and values?” Additionally, Bell (2023, p. 128) describes how “[t]rying to determine user values and perspectives, without critically reflecting on our own, can mean inadvertent consequences for users from otherwise well-intentioned questions and decisions in EBP.” It is at this intersection of evidence and values that a relationship between EBLIP and OEP is found.

The relationship between open education and evidence has been interlinked against a backdrop of advocacy and educational change. Emerging open education initiatives (usually manifesting as open textbook programs) require funding and resourcing (Stagg et al., 2023). The Australian higher education climate is presently characterised by a lack of funding opportunities federally, compounded by consistent reductions in institutional budgets. Managerialist performance metrics further confound the environment, particularly approaches to student throughput, academic workload, and research performance (Sarpong & Adelekan, 2023). Such measures contribute to rendering the purpose of the university to an “irreducible minimum” of quantitative data with little recognition of the critical human element of education (Morley, 2023). Open education is, therefore, heavily reliant on proving value as a survival mechanism. It is perhaps an evolution from 'mere survival' to 'nurturing ecology' that charts not only the maturity of open education institutionally (Stagg, 2024), but the quality of evidence used (or even available). As institutional focus shifts from Open Educational Resources (OER) to Open Educational Practices (OEP), so too do the requirements for evidence.

As open educational practice (OEP) matures, so too must the methods by which practitioners collect and communicate data. As a relatively new phenomenon in Australia, OEP requires advocacy backed by a robust evidence base (Stagg et al., 2018), and institutions with early-phase OEP initiatives often opt for purely quantitative metrics that act as proxies for impact, usually associated with student and course/unit numbers, and cost savings to support claims of enhanced access and affordability (Colvard et al., 2018). Despite this quantitative focus, OEP is deeply embedded in human-centered responses to barriers affecting access to information (Giblin & Weatherall, 2022) and underlying financial issues directly influencing the university experience (Aijawi et al., 2025; Russell et al., 2025; Salisbury et al., 2023). This focus on human issues and human-centric responses therefore demands different approaches not only to the types of information collected, but also to the manner of its presentation and the agency of stakeholders.

As a human-centered approach (Bali et al., 2020) OEP requires complex, multi-tiered inter-relationships across the institution that support students and staff to engage with openness. Libraries are the most common centre of university OEP advocacy and coordination (Foley, 2021; Enders & Naidoo, 2022), yet are reliant on relationships with faculty, student support, copyright services, learning designers, media producers, and senior leadership for success. Non-human resources such as policy, learning and teaching priorities, mission and vision, and student attraction and retention strategies likewise offer affordances to the open practitioner seeking to establish value (Stagg, 2024). Frameworks designed to explain engagement with open education tend to be linear and sequential (Armellini & Nie, 2013; Masterman & Wild, 2013; Nascimbeni et al., 2024), and whilst research on 'barriers and enablers' is plentiful, few holistically link these factors, or offer practical strategies to contextualise findings, or acknowledge the breadth of evidence types required to support sustainable practices.

This 'ecology of open practice' necessitates a wider range of evidence, with tailored communication based on stakeholder need. Importantly, there is an opportunity to build relationships with stakeholders concerning the types and uses of data that support their engagement with OEP. As the understanding of, and engagement with, OEP deepens, the scope of required data broadens, and co-design of data collection instruments becomes imperative to drive OEP as a whole-of-institution initiative. The University of Southern Queensland encapsulates this as 'open as everyone's business', remixing and building upon Sally Kift's assertion with transition pedagogy that 'first year experience is everybody's business' (Kift et al., 2010). Kift's reframing of the first-year experience as transcending disconnected or generic orientation and rather encompassing the entire institution informs much of the educational transformation framing for OEP at UniSQ, and extends to the evidence based foundation for this work.

The human-centredness of OEP is a key strength that translates across all facets of activity (Hamilton & Hansen, 2024). Practices such as pedagogy of care (Zamora & Bali, 2025) and the role of empathy are gaining momentum in higher education (Bali & Zamora, 2022); OEP offers mechanisms that authentically engage practitioners and students with these concepts. Curating OER to replace commercial learning resources, and positioning students as co-creators of knowledge within society, are both examples of student-centred care and respect that inform teaching practices and leverage OEP. A synthesis of the complexity of human-centred approaches, coupled with an emerging ecology of open practice, illuminated the need for similarly aligned, community-driven and co-created evidence collection frameworks, and it is the exploration of this space that is the focus of this paper.

Methods

This research engages a single-case study methodology. Case studies are defined by Creswell (2015, p. 485) as "an in-depth exploration of a bounded system (e.g., an activity, event, process, or individuals) based on extensive data collection". Robert Stake’s approach to case studies is adopted, which advances a constructivist epistemology and uses qualitative data (Stake, 1995). The single-case study design allows flexibility to examine the single project that prompted the development of OPEN as a new framework for asking EBLIP questions. Additionally, the single-case study design provides a formative approach to the development of the OPEN framework, allowing for exploration and understanding of its value.

The case study research method allows a focus on how the development of the OPEN framework was a collaborative process between two library teams and provided value to both, alongside the wider library and university, with application to the fields of both EBP and OEP more broadly. It studies the Evidence Based Practice team and the Open Educational Practice team at the University of Southern Queensland Library, a regional university library in Australia. This project developed over a three-year period, beginning in August 2021 and ending in June 2024. The first two authors of this article were each part of the two library teams during this period, with the third author stepping into leadership of the Evidence Based Practice team in early 2025 and continuing this work. Those first two authors were actively engaged in determining not only how to develop a data dashboard but also the data-driven questions that would guide it. This process of developing questions to guide the dashboard is captured through artefacts, such as reports and documents, that arose in the process.

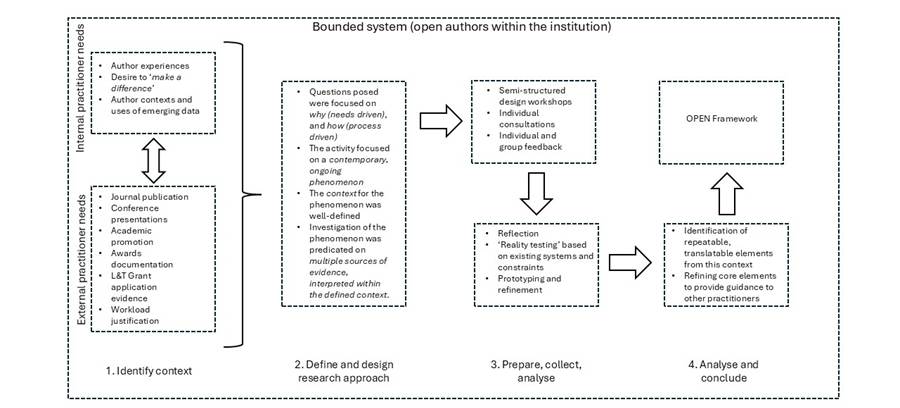

The research design comprised four stages (Yin, 2014; Figure 1, below):

Identify the context. Single-case study methodology relies on the articulation of a bounded environment (the ‘case’) and a clearly identified need. In this instance, the need was for seamless access to reliable, trustworthy data concerning open textbook reach and attention, driven by specific applications identified by the community (such as authoring journal articles, applying for promotion, and evidencing impact for learning and teaching).

Define and design the research approach. Case study methodology is best employed in environments where questions focus on how (the processes) and why (the needs). The approach requires a contextualised environment examining a contemporary phenomenon in progress. The context for this study was bounded by the UniSQ institutional environment, included the population of open textbook authors and the staff supporting them, and focused on the emerging and continuing need for data collection and communication. Lastly, the design draws together multiple sources of data, such as practitioner experience and evidenced needs, reach and attention data secured through analytics, and the process of questioning within the community.

Prepare, collect, and analyse. During this phase, semi-structured design workshops were facilitated and individual feedback was collected. Participants reflected on early prototypes, refined and tested the data dashboard, and engaged with iterative design and problem-solving toward a final implementation.

Analyse and conclude. An analysis of the immediate outcomes within context was conducted, ultimately aiming to create a transferable, repeatable process (the OPEN Framework) adaptable to other contexts by open practitioners, and those involved in evidence based practice.

Further details of these processes are discussed in the subsequent method sections.

Figure 1

Research design for the OPEN Framework. Adapted from Yin (2014).

An important aspect of this research included recognising positionality and incorporating reflexivity. Positionality is the social reality in which a researcher or professional is positioned, reflecting the understandings, values, beliefs, and assumptions they bring with them (Robinson & Wilson, 2022). It is reflective of the socio-cultural and political identities and positions that influence how we engage with research. Reflexivity is the critical examination of these influences on our work and research. It includes developing an understanding of the motivations, power dynamics, and privileges that come with our positionality and taking account of these when conducting research (2022). Both positionality and reflexivity shape the researcher's approach to interpretation, and Stake (1997, p. 97) emphasises the role of the case study researcher as interpreter to find new connections and meanings. This is a key part of the constructivist methodology and interpretation of findings (1997, pp. 99-100).

Within this case study, each of the authors held leadership positions with potential to influence the directions of EBLIP and OEP at UniSQ and in the wider profession. The authors were at different stages of their careers and part of different, though intersecting, professional communities. This meant they held diverse experiences across a variety of library areas. Two of the authors were completing PhDs and engaged in academia outside of their UniSQ roles. Additionally, while the initial project team of three oversaw the development of the data dashboard and communications around it, this was followed by changes in roles, team structures, and the institutions that the authors worked at.

Data Collection

Qualitative data collected for this study were obtained through autoethnographic material from observations and documents, along with digital artefacts produced as outputs from using the framework for open education library projects requiring evidence. Digital artefacts and autoethnographic material collected as data included the Power BI dashboard, meeting notes and recorded data-related questions, and feedback from key stakeholders (predominantly academic open textbook authors). The data collected covers three components of the project.

These three components are (i) the motivation for developing an OPEN framework, (ii) the collaborative process of workshopping this framework, and (iii) the value and impact of the framework’s application in practice. Individual authors’ reflections from the dashboard process also provided data and demonstrated the need for a new approach to asking EBLIP questions. Documentation of early discussions around Google Analytics data and dashboard development was a key source of evidence, allowing an inductive approach to developing this OPEN framework.

Data Analysis

An inductive approach has been taken to the development of the OPEN framework. That is, the approach was bottom-up: it started from observations and data and then developed the OPEN framework as a culmination of the project and case study. Conversely, a deductive process would have seen an OPEN framework developed first and then applied to the project. The autoethnographic material and digital artefacts that came from the creation of an open textbook data dashboard have been analysed by the authors to develop and determine the value of this OPEN framework. This analysis was predominantly through joint discussions and individual reflections during the write-up process, consistent with a collaborative autoethnographic (CAE) approach that serves as a tool for researcher reflexivity and professional development (Miyahara & Fukao, 2022). Following Stake’s approaches to qualitative data analysis in case studies, the authors emphasise the importance of context and sensemaking in their analysis and interpretation. Analysis started from the very early stages of the project, and continued during the writing of this paper, with reflective conversations and correspondence about the case and its data.

Results and Discussion

The OPEN framework was derived by analysing the course of UniSQ Library's open textbook dashboard project and mapping each project stage to a corresponding step in the new framework. This results and discussion section walks through each element of OPEN, positioning it in the context of the open textbook project. The discussion also draws from literature on existing frameworks for evidence based questions to situate OPEN as an alternative.

The Open Textbook dashboard project was an exploratory initiative, and UniSQ Library’s Open Educational Practice and Evidence Based Practice teams needed to make decisions about what data and visualisations should be included in the dashboard. While consultations and partnership with the academic community were a part of this process (Bell et al., 2024), it was also necessary to formulate questions to define the scope of this work and guide intentional data practices for decision-making and reporting. Previous frameworks, such as PICO and SPICE, focused on formulating questions for clinical environments and did not meet the operational and strategic needs of a library workplace. Following the creation of the dashboard, the OEP and EBP teams returned to create an OPEN framework to support developing questions at any staff level or area across the library.

The OPEN framework encompasses four elements to help define an evidence based question for library decision-making. These elements are Objective, Purpose, Evidence, and Narrative (OPEN). Together, these elements support library and information professionals to define what they need to know, collect, and communicate for an evidence based decision. Each stage of OPEN has a prompt helping professionals understand what each stage contributes and guiding their response (Table 1). The following sections outline each stage, its prompt, and how it helps guide professionals to ask evidence based questions in libraries.

Table 1

OPEN Framework Stages and Prompts

| Stage | Prompt |
| --- | --- |
| Objective | What do I need to know? |
| Purpose | Why do I need to know this? |
| Evidence | What evidence do I have or need? |
| Narrative | How will I communicate this evidence? |
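For readers who want to record OPEN questions in a structured form, the four stages and prompts can be sketched as a small data structure. This is only an illustrative sketch, not part of the framework as developed in the case study: the names `OPEN_PROMPTS`, `OpenQuestion`, and `unanswered` are hypothetical, and the example responses are paraphrased from the case study for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, fields

# Prompts as worded in the OPEN framework; the dictionary itself is illustrative.
OPEN_PROMPTS = {
    "objective": "What do I need to know?",
    "purpose": "Why do I need to know this?",
    "evidence": "What evidence do I have or need?",
    "narrative": "How will I communicate this evidence?",
}


@dataclass
class OpenQuestion:
    """One evidence based question, with a response for each OPEN stage."""

    objective: str = ""
    purpose: str = ""
    evidence: str = ""
    narrative: str = ""

    def unanswered(self) -> list[str]:
        """Return the prompts for stages that have no response yet."""
        return [
            OPEN_PROMPTS[f.name]
            for f in fields(self)
            if not getattr(self, f.name).strip()
        ]


# Hypothetical responses for a question about access to open textbooks.
question = OpenQuestion(
    objective="Who is visiting the open textbooks and from where?",
    purpose="Understand the needs of users to better meet those needs.",
)

# Lists the prompts still to be worked through (here, Evidence and Narrative).
print(question.unanswered())
```

A structure like this could sit alongside project documentation so that a team can see at a glance which stages of a question still need discussion.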

Objective

What do I need to know? The objective phase helps to narrow our focus and determine the scope of our data collection activities and analysis. In the OEP context, this question extends into asking:

· What does the library or parent organization need to know?

· What do open textbook authors and contributors need to know?

· Who or what is the subject of our inquiry?

For our dashboard, consultation sessions with open textbook authors indicated they were primarily interested in questions related to access, use, and impact (Table 2). This included who was visiting their open textbooks and where those visitors were from (access), what the texts were being used for and the extent of use (use), and the difference that was being made (impact). Once it was determined what the OEP team and academic authors wanted to know, the project group turned their attention to the purpose behind the information and evidence needs to support building the dashboard.

Table 2

Assessing ‘Objective’ for the Open Textbook Dashboard

| Objective theme | What do I need to know? |
| --- | --- |
| Access | Who is visiting the open textbooks and from where? |
| Use | What is the open textbook being used for and how much? |
| Impact | What difference is being made from use and access to the open textbook? |

Purpose

Why do I need to know this? This prompt for the purpose phase helps library professionals to understand their reasons for collecting data. Without purpose, data collection activities can become habitual but meaningless. That is, ‘We’ve always collected this data, but I don’t know why, and we’re not really using it.’ Purpose helps us to understand the strategic value of data and can inform the kinds of evidence collected.

Purpose is about determining the meaning behind data collection and its objectives. In the OEP context, the purpose of data collection and reporting can vary and may be multifaceted. Purpose may encompass:

· Understanding the needs of users to better meet those needs

· Identifying opportunities to develop new resources and services

· Measuring use and impact to demonstrate value and maintain stakeholder engagement

· Resource management and improvements

· Wider advocacy for OEP within an institution or the higher education landscape

· Assessing usefulness and usability of OER to inform decision making

Purpose assists in refining the objective and defining the audience. For the case study, the overarching purpose was to help authors measure the use of their open texts to demonstrate the value of these resources.

Evidence

What evidence do I have or need? After identifying the objective and purpose, the framework calls for identifying what evidence is needed to achieve these. If the purpose and objective are to demonstrate the value of open textbooks through student engagement, then evidence needs may include identifying the number of referrals from learning management systems or course reading lists and the time students from that institution spend viewing a specific chapter or page. If the purpose and objective are to understand what students need to gain from an open textbook, a survey or interview will provide more useful data. Depending on purpose, evidence may include:

· Access statistics such as downloads, page views, and unique users (web-analytics)

· Reviews and course adoptions

· Location data

· Student surveys, interviews, and testimonials

· User engagement (e.g. web page visit duration, referrals)

· Adaptations and remixes of the resource

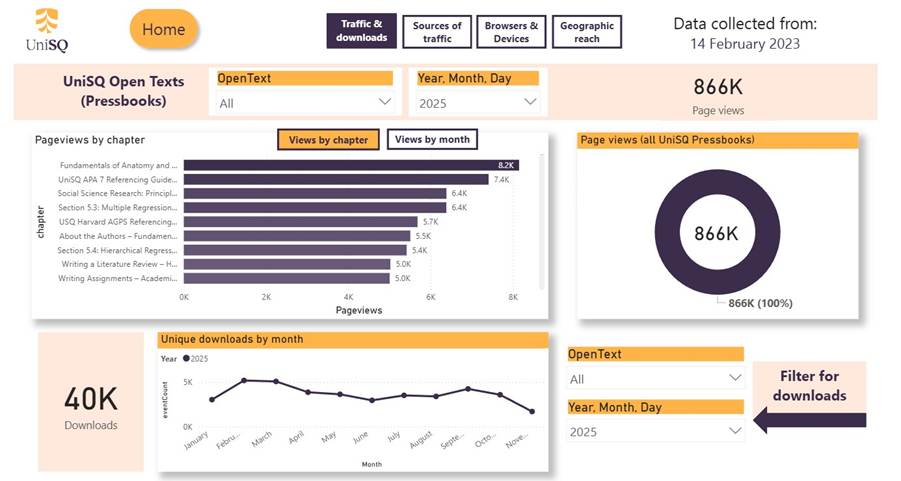

Within the case study context, the EBP and OEP teams already had access to web analytics data that could meet the objectives and purpose they had identified. Statistics from this data were being shared by email with open textbook authors; however, there had been no prior work to determine which data were relevant to share. Internal team discussions and further consultation revealed several categories of data requested by the community of open textbook authors: traffic & downloads; sources of traffic; browsers & devices; and geographic reach. These data categories can be seen in Figure 2, which highlights each page of the dashboard (grouped by data category).

Narrative

How will I communicate this evidence? In the last stage of the OPEN model, narratives are used to communicate evidence to a specific audience. This includes thinking through how evidence is used to tell a story that meets our objective and purpose. The ways in which data stories are communicated vary, and data dashboards provide opportunities to share stories in new ways.

At UniSQ Library, the EBP and OEP teams recognised that web analytics data would meet their objectives of measuring access and use so that authors could start to demonstrate the value of their resources. Power BI reports and dashboards were the best tools available for us to visualise and communicate the granular data required by our audience. They allowed a hands-on, interactive, and flexible narrative that could be adjusted to the needs of different stakeholders. The dashboard was designed to reflect multiple narratives depending on the user at hand, while providing multiple data points to support users in communicating their own narratives beyond the dashboard.

OPEN

OPEN (Objective, Purpose, Evidence, and Narrative) presents an alternative approach to asking questions that steps outside of health-science frameworks. Existing frameworks, such as PICO (Patient/Problem, Intervention, Comparison, and Outcome), are designed for answering health-related questions (Davies, 2011). While they have been modified to be more relevant for library professionals (exchanging patient for population), they are also heavily reliant on database and literature searching approaches for evidence. Yet, library professionals continue to adopt a variety of techniques for evidence based practice, including user experience (UX) research methods, analytics, data dashboards, and participatory approaches, often partnering with professionals with different backgrounds and lived experiences. This is consistent with a broadening of EBLIP evidence and research types and a local context lens (Wilson, 2015). The OPEN framework sees practitioners go beyond identifying a ‘problem’ or ‘population’ to study and allows more flexibility in articulating exploratory and practice-based evidence needs. The need for a flexible and accessible approach to asking practice-based questions in exploratory projects was realised while undertaking the open textbook dashboard project. Through the dashboard project, the EBP and OEP teams came to understand and shape the questions needed for a dashboard to support evidence needs (Figure 2). A sample of these final questions is outlined in Table 3.

Table 3

Example Dashboard Questions Using the OPEN Framework

| Objective | Purpose | Evidence (web analytics) | Example dashboard questions |
| --- | --- | --- | --- |
| Use (what is the OER being used for and how much) | Demonstrate the reach of UniSQ open textbooks. | Number of page views and users. | What are the total number of users and unique users? |
| Use (what is the OER being used for and how much) | Understand patterns of use and engagement. | Top most accessed chapters. | What are the top ‘n’ most accessed chapters? |
| Access (who is visiting and from where) | Understand where users are being referred to an open textbook from. | Sources of access | Which social media sites generate the most user referrals? What proportion of users are referred from university learning management systems? |

Figure 2

UniSQ Open Texts dashboard.
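To make the example questions in Table 3 concrete, the sketch below shows how three of them (unique users, the top ‘n’ most accessed chapters, and the proportion of users referred from a learning management system) might be computed from page-view records. It is illustrative only: the case study dashboard was built in Power BI, and the record layout, field names (`user`, `chapter`, `source`), and sample rows here are invented assumptions rather than UniSQ data.

```python
from collections import Counter

# Made-up rows standing in for a web analytics export.
# The field names are assumptions for illustration only.
page_views = [
    {"user": "u1", "chapter": "Chapter 1", "source": "lms"},
    {"user": "u1", "chapter": "Chapter 2", "source": "lms"},
    {"user": "u2", "chapter": "Chapter 1", "source": "social"},
    {"user": "u2", "chapter": "Chapter 2", "source": "social"},
    {"user": "u3", "chapter": "Chapter 3", "source": "search"},
    {"user": "u4", "chapter": "Chapter 1", "source": "lms"},
]


def unique_users(rows):
    """Count distinct users across all page views."""
    return len({row["user"] for row in rows})


def top_chapters(rows, n):
    """Return the n most viewed chapters as (chapter, views) pairs."""
    return Counter(row["chapter"] for row in rows).most_common(n)


def referral_share(rows, source):
    """Proportion of unique users with at least one visit from `source`."""
    all_users = {row["user"] for row in rows}
    referred = {row["user"] for row in rows if row["source"] == source}
    return len(referred) / len(all_users) if all_users else 0.0


print(unique_users(page_views))
print(top_chapters(page_views, 2))
print(referral_share(page_views, "lms"))
```

Counting referrals by unique user rather than by page view is one design choice among several; a team would settle this at the Evidence stage, depending on the purpose the figure is meant to serve.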

The development of the OPEN framework was a key finding from this case study. The significance of this framework lay in both the collaborative process used to develop it and its output as a tool that can support exploration and a maturation of EBLIP practice institutionally. The collaborative nature of this project (academic, OEP, and EBP) allowed for an intermingling of practices that benefits all participants and represents informal knowledge-sharing practices that can be formalised and replicated in other institutions or contexts. The collaboration helped to build trust with the open textbook community and revealed the values underpinning the work of the library and open educational practitioners. It ensured the dashboard was a shared process, with benefits across each area, driven by shared values and purpose. The project has already illuminated potential future partnerships, for example with marketing (using data for student attraction) and policy (especially as it relates to recognition of learning and teaching, and academic promotion), as well as opportunities to combine this with other forms of data (such as those related to access and affordability of learning resources institutionally, or library expenditure on textbooks to mitigate access issues).

For the EBP team, the collaborative benefits included seeing the maturation of EBLIP across the Library. The project not only saw the OPEN framework produced as an output and an EBLIP tool but also produced the partnerships that helped to drive an evidence based culture forward. The EBP Coordinator observed and recorded an increased interest in what EBLIP could achieve for different teams. The dashboard itself had prompted curiosity, and the EBP Team’s collaborative approach and emphasis on partnership allowed for increased exploration of evidence based practice.

Internally, the OEP team benefitted from data that evidenced reach, attention, and impact both within and beyond the institution. The co-created evidence base revealed avenues for investigation desired by the academic staff, which in turn (i) reinforced the partnership with the Library in open education, (ii) established the value of the Library as part of their professional data ecology, and (iii) provided insight for the Library into the types of narratives valued by the Faculties as a strategy to increase awareness of, and engagement with, OEP. The evidence base can be leveraged in the future to influence key stakeholders, bid for institutional resources, raise general awareness, lobby for policy change, and improve internal processes for open publishing (such as gathering feedback, attracting new authors or collaborators for open texts, and refining published outputs to better meet access and affordability goals).

Implications and Future Directions

Several implications and future directions were identified across the OEP project and in the development of the OPEN framework. These included broader EBLIP and LIS contributions as well as local institutional ones. Looking to broader implications, the OPEN framework complements the existing EBLIP 6A’s model and was designed to have wide applicability. It is not limited to OEP projects but is transferable and can be recontextualized across various library teams, functions, and evidence requirements. Additionally, the framework could be repurposed by practitioners researching OEP. The OPEN framework builds on such developments as the broadening of evidence types in EBLIP (Wilson, 2015), but it also seeks to make EBLIP more accessible, including for collaborative and exploratory projects.

Looking to local implications, across the course of this project, the OEP and EBP teams were mindful of data and dashboard limitations. In particular, they were careful to communicate that web analytics data might show reach (how widely open textbook content was being disseminated and viewed) but that this did not necessarily reflect engagement with or impact of the content. In some instances, reach could be a proxy for engagement; however, the limitations of web analytics data could not be ignored. This was especially important in the narrative stage of OPEN. Such considerations propelled the project teams to consider what other evidence might complement or be included in the dashboards in future and better demonstrate impact. These discussions reflected the broadening and holistic development of EBLIP in the profession and the maturation of EBLIP locally at UniSQ Library.

Another future direction highlighted by the data dashboard is the lack of connectivity across data sources institutionally. For example, a key area for investigation is the role of OEP in student engagement, achievement, retention, and progression. The Library’s data is currently disconnected from the institutional data warehouse, as well as being disconnected internally, meaning a holistic and integrated view of the student and staff experience is, at this point, not possible without labour-intensive, manual processes. However, the stakeholder discussions have confirmed this evidence would be extremely valuable and fill important gaps in the continuing narrative of the impact of OEP at UniSQ and thus represents a high-value strategic focus for the future. The EBP team at UniSQ is working toward a connected data ecosystem to enable such insights.

Conclusion

The OPEN framework provides a novel structure for asking questions in an EBLIP context and contributes to future directions for EBLIP. As EBLIP continues its development as its own practice, distinct from its clinical origins, the applicability of existing frameworks can present challenges for EBLIP practitioners. This led to UniSQ Library’s EBP and OEP teams developing a new framework that could be used by anyone, regardless of their role or experience in EBP. In the process of collaboratively designing a data dashboard, the lack of a suitable framework for framing their questions in an EBLIP context prompted them to critically examine, reflect on, discuss, and analyse their approach. This analysis led to the development of the OPEN framework. The four elements of OPEN (Objective, Purpose, Evidence, and Narrative) provide accessible and flexible guidance for asking EBLIP questions. The development of the OPEN framework across UniSQ Library’s open textbook dashboard project saw the project team develop the necessary questions to produce a dashboard responsive to operational and strategic needs. UniSQ’s Open Textbooks data dashboard remains an important tool for authors to share data stories about their published textbooks. Through the case study, this paper has demonstrated not only the development of the framework but also its application and impact on open education practice and evidence based practice at UniSQ.

Author Contributions

Emilia Bell: Conceptualisation, Methodology, Writing

Adrian Stagg: Conceptualisation, Methodology, Writing

Acknowledgements

The authors thank Nikki Andersen and Stacey Larner for their valuable contributions to the conception of this paper and to the research project. Nikki’s and Stacey’s insights and their collaborative spirits have greatly enriched this work.

References

Ajjawi, R., Crawford, N., Bearman, M., Brett, M., Dollinger, M., & Tai, J. (2025). The house of cards: Equity-group students’ experiences of structural inequity in higher education. Higher Education Research & Development, 44(4), 793–807. https://doi.org/10.1080/07294360.2025.2456819

Armellini, A., & Nie, M. (2013). Open educational practices for curriculum enhancement. Open Learning: The Journal of Open, Distance and e-Learning, 28(1), 7–20. http://dx.doi.org/10.1080/02680513.2013.796286

Bali, M., Cronin, C., & Jhangiani, R. S. (2020). Framing open educational practices from a social justice perspective. Journal of Interactive Media in Education, (1), Article 10. https://doi.org/10.5334/jime.565

Bali, M., & Zamora, M. (2022). The Equity-Care Matrix: Theory and practice. Italian Journal of Educational Technology, 30(1), 92–115. https://doi.org/10.17471/2499-4324/1241

Bell, E. C. (2022). Values-Based Practice in EBLIP: A Review. Evidence Based Library and Information Practice, 17(3), 119–134. https://doi.org/10.18438/eblip30176

Bell, E., Andersen, N., & Stagg, A. (2024). Values based approaches to evidence for OER Advocacy. In A. Barber, M. Fatayer, R. McLennan, A. Luetchford, S. McQuillen & A. Williamson (Eds.), Open Education Down UndOER: Australasian Case Studies. Council of Australian University Librarians. https://doi.org/10.70802/6931-b0p0

Beauchamp, R., & Webb, H. (1927). Resourcefulness, an unmeasured ability. School Science and Mathematics, 27(5), 457–465. https://doi.org/10.1111/j.1949-8594.1927.tb05722.x

Colvard, N. B., Watson, C. E., & Park, H. (2018). The impact of open educational resources on various student success metrics. International Journal of Teaching and Learning in Higher Education, 30(2), 262–276.

Creswell, J. W. (2019). Educational research: Planning, conducting, and evaluating quantitative and qualitative research (6th ed.). Pearson Education.

Davies, K. S. (2011). Formulating the evidence based practice question: A review of the frameworks. Evidence Based Library and Information Practice, 6(2), 75–80. https://doi.org/10.18438/B8WS5N

Giblin, R., Kennedy, J., Pelletier, C., Thomas, J., Weatherall, K., & Petitjean, F. (2019). Available- but not accessible? Investigating publisher e-lending licensing practices. Information Research, 24(3). https://ssrn.com/abstract=3346199

Giblin, R., & Weatherall, K. (2022). Taking control of the future: Towards workable elending. In J. Coates, V. Owen & S. Reilly (Eds.), Navigating Copyright for Libraries (pp. 351–377). Walter de Gruyter. https://doi.org/10.1515/9783110732009

Gillham, B. (2000). Case study research methods. Continuum.

Hernaus, T., & Černe, M. (2021). Academic work design as a three- or four-legged stool. In T. Hernaus & M. Černe (Eds.), Becoming an organizational scholar: Navigating the academic odyssey (pp. 15–29). https://doi.org/10.4337/9781839102073.00008

Hamilton, D., & Hansen, L. (2024). An artful becoming: The case for a practice-led research approach to open educational practice research. Teaching in Higher Education, 29(7), 1757–1774. https://doi.org/10.1080/13562517.2024.2336159

Johnson, B., & Christensen, L. (2012). Educational research: Quantitative, qualitative and mixed approaches (4th ed.). Sage.

Kift, S., Nelson, K., & Clarke, J. (2010). Transition pedagogy: A third generation approach to FYE - A case study of policy and practice for the higher education sector. Student Success, 1(1), 1–20. https://doi.org/10.5204/intjfyhe.v1i1.13

Koufogiannakis, D. (2013). EBLIP7 keynote: What we talk about when we talk about evidence. Evidence Based Library and Information Practice, 8(4), 6–17. https://doi.org/10.18438/B8659R

Koufogiannakis, D., & Brettle, A. (Eds.). (2016). Being evidence based in library and information practice. Facet Publishing. https://doi.org/10.29085/9781783301454

Masterman, L., & Wild, J. (2013, March 26). Reflections on the evolving landscape of OER use [Paper presentation]. OER13: Creating a virtuous circle, Nottingham, England. http://repository.alt.ac.uk/id/eprint/2260

Morley, C. (2023). The systemic neoliberal colonisation of higher education: A critical analysis of the obliteration of academic practice. The Australian Educational Researcher, 51(2), 571–586. https://doi.org/10.1007/s13384-023-00613-z

Nascimbeni, F., Burgos, D., Brunton, J., & Ehlers, U. D. (2024). A competence framework for educators to boost open educational practices in higher education. Open Learning: The Journal of Open, Distance and e-Learning, 39(2), 150–169. https://doi.org/10.1080/02680513.2024.2310538

Paskevicius, M. (2017). Conceptualizing open educational practices through the lens of constructive alignment. Open Praxis, 9(2), 125–140. https://doi.org/10.5944/openpraxis.9.2.519

Ponte, F., Lennox, A., & Hurley, J. (2021). The evolution of the open textbook initiative. Journal of the Australian Library and Information Association, 70(2), 194–212. https://doi.org/10.1080/24750158.2021.1883819

Robinson, O., & Wilson, A. (2022). Practicing and presenting social research. UBC Library. https://doi.org/10.14288/84SB-8T57

Russell, J., Austin, K., Charlton, K. E., Igwe, E. O., Kent, K., Lambert, K., O’Flynn, G., Probst, Y., Walton, K., & McMahon, A. T. (2025). Exploring financial challenges and University support systems for student financial well-being: A scoping review. International Journal of Environmental Research and Public Health, 22(3), Article 356. https://doi.org/10.3390/ijerph22030356

Salisbury, F., Julien, B. L., Loch, B., Chang, S., & Lexis, L. (2023). From Knowledge Curator to Knowledge Creator: Academic Libraries and Open Access Textbook Publishing. Journal of Librarianship and Scholarly Communication, 11(1), Article eP14074. https://doi.org/10.31274/jlsc.14074

Sarpong, J., & Adelekan, T. (2023). Globalisation and education equity: The impact of neoliberalism on universities’ mission. Policy Futures in Education, 22(6), 1114–1129. https://doi.org/10.1177/14782103231184657

Schramm, W. (1971). Notes on case studies of instructional media projects. Stanford University. https://eric.ed.gov/?id=ed092145

Stagg, A., Nguyen, L., Bossu, C., Partridge, H., Funk, J., & Judith, K. (2018). Open educational practices in Australia: A first-phase national audit of higher education. International Review of Research in Open and Distributed Learning, 19(3). https://doi.org/10.19173/irrodl.v19i3.3441

Stagg, A., Partridge, H., Bossu, C., Funk, J., & Nguyen, L. (2023). Engaging with open educational practices: Mapping the landscape in Australian higher education. Australasian Journal of Educational Technology, 39(2), 1–15. https://doi.org/10.14742/ajet.8016

Stake, R. E. (1995). The art of case study research. SAGE.

Thorpe, C. (2021). Announcing and advocating: The missing step in the EBLIP model. Evidence Based Library and Information Practice, 16(4), 118–125. https://doi.org/10.18438/eblip30044

Thorpe, C., & Howlett, A. (2020). Understanding EBLIP at an Organizational Level: An Initial Maturity Model. Evidence Based Library and Information Practice, 15(1), 90–105. https://doi.org/10.18438/eblip29639

Thomas, J., McCosker, A., Parkinson, S., Hegarty, K., Featherstone, D., Kennedy, J., Ormond-Parker, L., Morrison, K., Rea, H., & Ganley, L. (2025). Measuring Australia’s Digital Divide: 2025 Australian Digital Inclusion Index. ARC Centre of Excellence for Automated Decision-Making and Society, RMIT University, Swinburne University of Technology, and Telstra. https://doi.org/10.60836/mtsq-at22

UNESCO. (2012). 2012 OER Declaration. World Open Educational Resources Congress. https://unesdoc.unesco.org/ark:/48223/pf0000246687

Wilson, M. (2006). Scholarly activity redefined: Balancing the three-legged stool. Ochsner Journal, 6(1), 12–14. https://www.ochsnerjournal.org/content/6/1/12

Wilson, V. (2015). Evidence, Local Context, and the Hierarchy. Evidence Based Library and Information Practice, 10(4), 268–269. https://doi.org/10.18438/B8K595

Yin, R. (2014). Case study research: Design and methods (5th ed.). Sage.