PRODUCT REVIEW / ÉVALUATION DE PRODUIT

JCHLA / JABSC 46: 42-46 (2025) doi: 10.29173/jchla29854

Product: Undermind.ai

URL: https://www.undermind.ai/

Undermind.ai is a “next generation, AI-powered information retrieval system that researches a complex topic for you” [1]. The tool mimics a human searcher’s multi-step discovery process and adapts its searching dynamically by leveraging artificial intelligence (AI). In 2023, as a Silicon Valley start-up, Undermind was described as “Google for scientific research” [2], its founders saying: “as researchers ourselves [we wanted to build] a search engine that could handle extremely complex questions… geared at experts, like research scientists and doctors, who need to find very specific resources to solve high-stakes problems” [2].

Undermind.ai searches Semantic Scholar (https://www.semanticscholar.org), an interdisciplinary database of 225 million citations [3], via an application programming interface (API), and pairs it with a large language model (LLM) to process the results [4]. Instead of executing a single search, “Undermind’s algorithm conducts multiple iterative searches, dynamically adjusting its approach based on previously retrieved results, and carefully reading and following citation trails” [5].
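The iterative, citation-following behaviour described above can be illustrated with a small sketch. This is not Undermind's algorithm, which is not published in detail; it is a minimal illustration under stated assumptions. The in-memory `CORPUS`, the function names, and the word-overlap `relevance` score (a crude stand-in for an LLM's relevance judgement) are all hypothetical.

```python
from collections import deque

# Hypothetical toy corpus standing in for a citation index such as Semantic Scholar.
CORPUS = {
    "p1": {"title": "Echinacea trials for common cold treatment", "cites": ["p2", "p3"]},
    "p2": {"title": "Zinc lozenges and common cold duration", "cites": ["p4"]},
    "p3": {"title": "Graph neural networks for chemistry", "cites": []},
    "p4": {"title": "Vitamin C for the common cold", "cites": []},
    "p5": {"title": "Sleep hygiene in shift workers", "cites": ["p1"]},
}


def relevance(title, query_terms):
    """Fraction of query terms found in the title (stand-in for an LLM judgement)."""
    words = set(title.lower().split())
    return sum(term in words for term in query_terms) / len(query_terms)


def seed_search(query_terms):
    """Strict first pass: papers whose title contains every query term."""
    return [
        pid for pid, paper in CORPUS.items()
        if set(query_terms) <= set(paper["title"].lower().split())
    ]


def iterative_search(query, threshold=0.5, max_papers=10):
    """Score seed papers, then follow citations only from papers judged relevant."""
    query_terms = query.lower().split()
    queue = deque(seed_search(query_terms))
    seen, relevant = set(), []
    while queue and len(seen) < max_papers:
        pid = queue.popleft()
        if pid in seen:
            continue
        seen.add(pid)
        score = relevance(CORPUS[pid]["title"], query_terms)
        if score >= threshold:
            relevant.append((pid, score))
            queue.extend(CORPUS[pid]["cites"])  # adapt: expand from relevant hits only
    return sorted(relevant, key=lambda item: -item[1])


results = iterative_search("common cold treatment")
```

In this toy run the strict seed search finds a single paper, and the two other relevant papers are reached only by following its citation trail, which is the point of searching iteratively rather than once.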

Undermind is geared towards scientists and researchers with expert-level information needs [6]. According to Shaurya Pednekar, an engineer at Undermind, “many users are research scientists at pharmaceutical and biotech companies in addition to academics” [5]. Librarians working with faculty and with upper-level undergraduate and graduate students may find it useful in teaching AI search technologies, but probably not as a starter tool. Undermind is not suitable for clinicians needing quick answers at the point of care, or for those doing quick Googling.

Here are a few notable features of Undermind.ai:

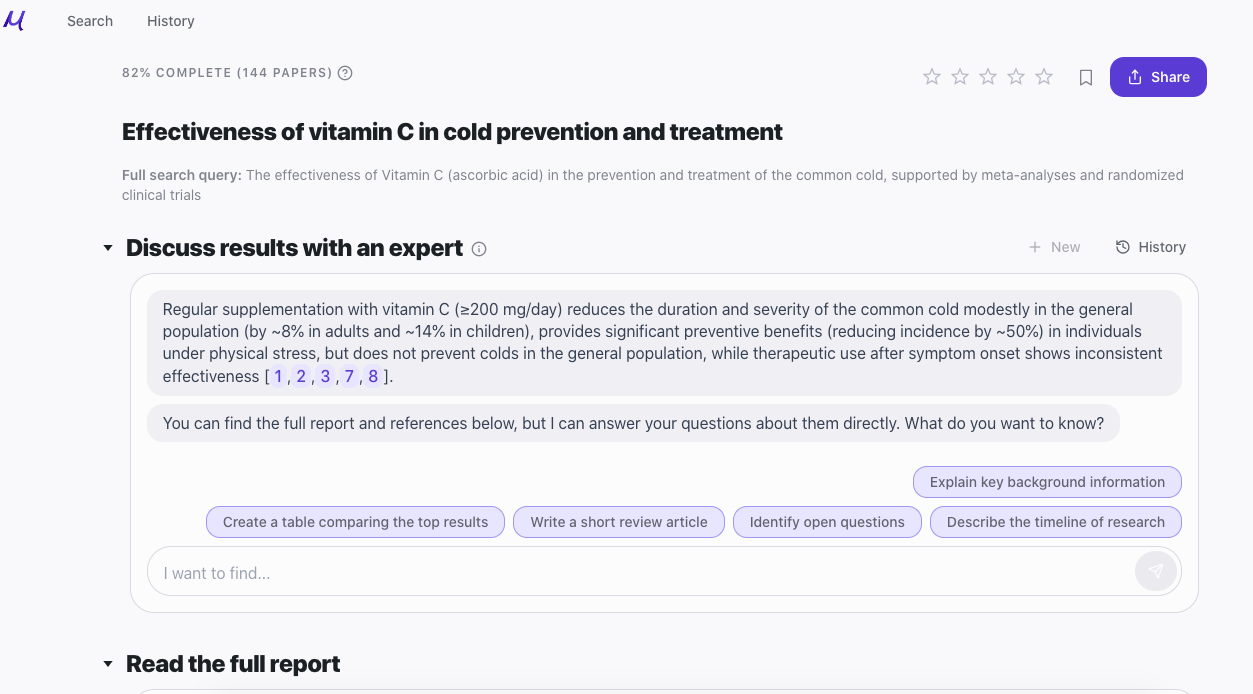

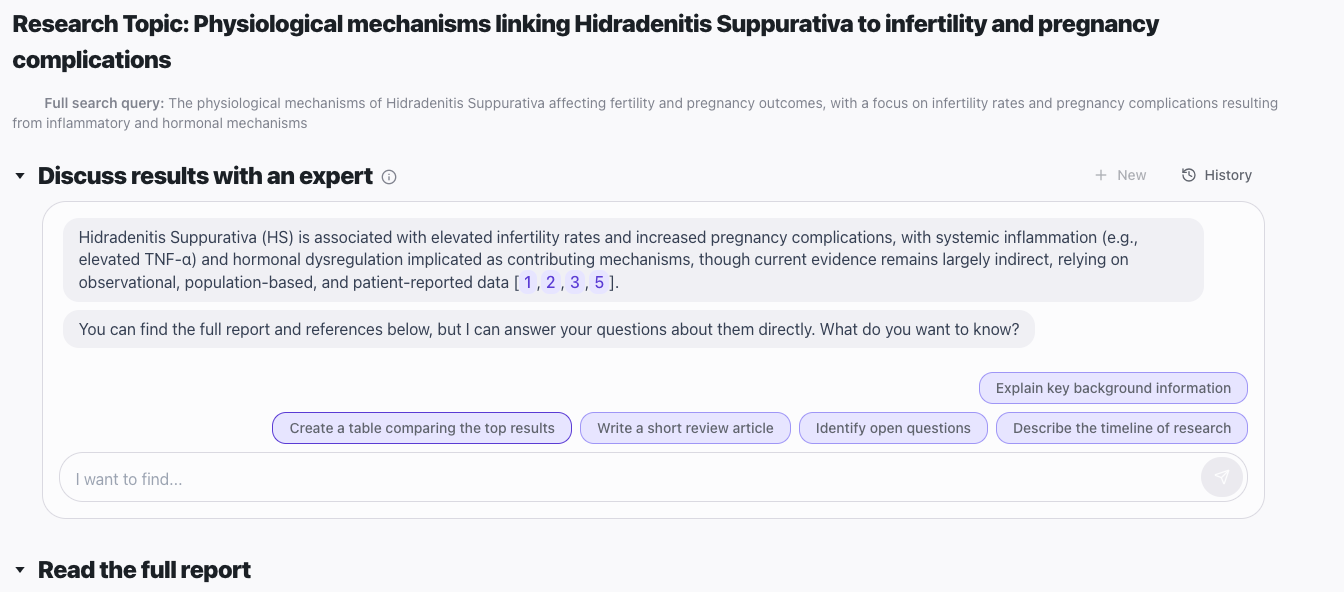

Here are two examples of searches in Undermind (a basic search on the effectiveness of treatments for the common cold and an advanced search on a complex biomedical question):

Undermind requires no local software installation or downloads. Signing up for a free account at the website is recommended, and a reliable Internet connection is essential. Pro users can export results directly into a citation manager (e.g., Zotero, EndNote) or screening platform (e.g., Covidence) using a standard file format (e.g., CSV, BibTeX, or RIS). Evidence-based clinicians would benefit from a simpler mobile version, given the interface’s complexity. Browser compatibility is acceptable in Chrome, Firefox, and Edge.
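For readers unfamiliar with the export formats mentioned above, the following is a minimal sketch of what an RIS record looks like when built from a citation. The record contents and the `to_ris` helper are hypothetical, and the fields Undermind actually includes in its exports are not documented in this review; only the RIS tag layout (a two-letter tag, two spaces, a hyphen, a space) is standard.

```python
def to_ris(record):
    """Serialize one citation (a plain dict) as an RIS journal-article record."""
    lines = ["TY  - JOUR"]  # record type: journal article
    for author in record.get("authors", []):
        lines.append(f"AU  - {author}")  # one AU line per author
    for tag, key in (("TI", "title"), ("JO", "journal"), ("PY", "year"), ("DO", "doi")):
        if record.get(key):
            lines.append(f"{tag}  - {record[key]}")
    lines.append("ER  - ")  # end-of-record marker
    return "\n".join(lines)


# Hypothetical record, for illustration only.
example = {
    "authors": ["Doe, Jane", "Roe, Richard"],
    "title": "An example article title",
    "journal": "Example Journal",
    "year": 2024,
    "doi": "10.0000/example",
}
ris_text = to_ris(example)
```

A file of such records, one after another, is what citation managers such as Zotero and EndNote, and screening platforms such as Covidence, typically accept on import.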

No downloadable client is needed. Searches are saved on the platform, with shareable links. The interface has a minimal feel initially but gets cluttered quickly. Librarians accustomed to thesaurus-based Boolean searching may find the user interface inflexible, but novices may prefer the platform’s ability to search and synthesize papers. Authentication is via username and password. The “Terms of Use” state that you can “freely share, distribute, and disseminate search results…with any third parties, including but not limited to non subscribers, other institutions, and the general public” [9].

Undermind relies on users to communicate their information needs to the research assistant. The system eases this burden by asking users to clarify their research question and reframe it in several steps. The 8 to 10 minute wait may frustrate those expecting faster results, though the relevance scores and “Summary of Key Findings” in the emailed report are informative. It is unclear whether searchers will find the detailed relevancy tables helpful, but they do provide some utility and transparency. Documentation for this tool is sparse, with only one video and the site FAQs. Finally, editing searches is not possible, but searchers can return to reports via their history to ask new questions of the system, which increases the usability of search histories.

Undermind’s use of an AI agent or chatbot to refine a query is a strength, especially for complex topics. Another is the system’s statistical model or “discovery curve”, which estimates the total number of relevant papers on any given scientific topic, even for cutting-edge topics where there may only be a handful of relevant papers. Aaron Tay, librarian at Singapore Management University, said the model is based on the idea that “when users start to exhaust relevant papers in an index, they will start getting fewer and fewer relevant papers. This feature offers users confidence that they aren't missing highly relevant content” [6].
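The diminishing-returns idea behind the discovery curve can be sketched numerically. Undermind's actual statistical model is not described in this review, so the example below simply assumes a saturating exponential, found ≈ N × (1 − e^(−k × examined)), and fits it by a coarse grid search. The function name, the grid bounds, and the sample counts are all hypothetical.

```python
import math


def fit_saturation(examined, found):
    """Grid-search least-squares fit of found ≈ N * (1 - exp(-k * examined)).

    Returns (N, k), where N is the estimated total number of relevant papers.
    """
    start = math.ceil(max(found))  # N cannot be smaller than what was already found
    best = None
    for n in range(start, 5 * start + 1):
        for step in range(1, 201):
            k = step / 1000  # candidate rates from 0.001 to 0.200
            err = sum(
                (n * (1 - math.exp(-k * x)) - y) ** 2
                for x, y in zip(examined, found)
            )
            if best is None or err < best[0]:
                best = (err, n, k)
    return best[1], best[2]


# Hypothetical search log: cumulative relevant papers found after examining x papers.
examined = [10, 20, 30, 40, 50, 60, 70, 80, 90, 100]
found = [7, 13, 18, 22, 25, 28, 30, 32, 33, 35]

estimated_total, rate = fit_saturation(examined, found)
fraction_found = found[-1] / estimated_total  # share of the estimated total already seen
```

When each new batch of examined papers yields fewer relevant hits, the fitted curve flattens and the estimated total settles close to the number already found, which is the signal that a search is nearing exhaustion.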

The inability to report a reproducible search strategy is Undermind’s Achilles heel, but most AI search platforms have this limitation. The cognitive load of learning an AI-based user interface and system is high, but manageable via trial-and-error testing. Given the algorithms in this product, librarians will want to evaluate whether their search results reveal or perpetuate biases of various kinds [10]. The use of OpenAI GPT-4 may raise questions about the ethical use of AI tools, and librarians should be prepared to have those conversations [11]. Due to the computationally intensive nature of the system, the platform prioritizes precision over speed. Some researchers will find the wait for results to be too long.

Undermind.ai uses Semantic Scholar’s updated database in real time, ensuring its currency. The platform’s AI-driven search process enhances its currency by adaptively exploring full text and citation networks, similar to a human researcher’s process. Undermind continues to evolve: the 2023 version searched arXiv.org only, but it now includes Semantic Scholar’s content from PubMed, arXiv, and other databases. If there is a time lag, it is likely a day or two only. The use of GPT-4 and Deep Search Technology suggests the system is current.

Undermind.ai offers a free version with a five-search limit per month, with each search analyzing 50 papers. The subscription pro version analyzes more than 150 papers. The pro version pricing is based on usage or simultaneous users (e.g., US$60 per month per user, billed annually), and includes pricing for “Teams” and “Enterprise”. For librarians, the return on investment will depend on the time saved in synthesizing papers. Compared to free tools such as Google Scholar or subscription databases (e.g., Ovid MEDLINE), I would call this a boutique item for high-end researchers, but not appropriate for general reference or undergraduate students unless they are interested in AI-enabled searches.

Undermind.ai is a worthy, niche competitor in the AI search space, and researchers will benefit from using it. For health sciences librarians, it offers an AI-powered way to start a literature review in biomedicine, and to find highly relevant (seed) papers in support of knowledge syntheses. The system’s slow response time limits its utility in some contexts, despite helpful summaries, match scores, and a final report. Similar to Elicit.com, Undermind is a sophisticated option for researchers, and represents the future direction of AI search tools [12]. Users should weigh the cost of a subscription and the platform’s shortcomings against the time savings offered by the final report.

I declare no conflicts of interest.

Dean Giustini, MLIS, MEd

UBC Biomedical Branch Librarian

Liaison librarian: UBC Faculty of Medicine, St. Paul's and Vancouver hospitals

VGH Diamond Health Care Centre, UBC Library

Vancouver, BC, Canada

Email: dean.giustini@ubc.ca

Giustini.

This article is distributed under a Creative Commons Attribution License: https://creativecommons.org/licenses/by/4.0/